- Generative AI accelerates content workflows by reducing time and cost across ideation, drafting, adaptation, localization, and asset repurposing.

- Effective use of generative AI requires structured workflows, high-quality source material, human oversight, and clear governance to maintain quality and reduce risk.

- Competitive advantage in generative AI-driven content creation depends on expertise, editorial judgment, and the ability to orchestrate coherent multimodal content systems.

I’ve worked with enough content teams to see that conversations about generative AI often swing between extremes. On one side, it’s hyped as a creative revolution that will replace entire functions. On the other, it’s dismissed as a stochastic toy producing bland copy and derivative images. Neither view helps serious practitioners.

Generative AI is already changing how professional teams develop, adapt, and distribute content across editorial workflows, campaigns, design pipelines, audio, video, localization, and internal knowledge work. These changes are operational, not theoretical, and they also raise strategic questions around quality control, intellectual property, governance, and brand safety.

This guide examines the technical logic, category landscape, real use cases, implementation patterns, business implications, and legal and ethical considerations. It offers a detailed, practitioner-focused perspective rather than a beginner’s overview.

Why generative AI matters specifically for content creation

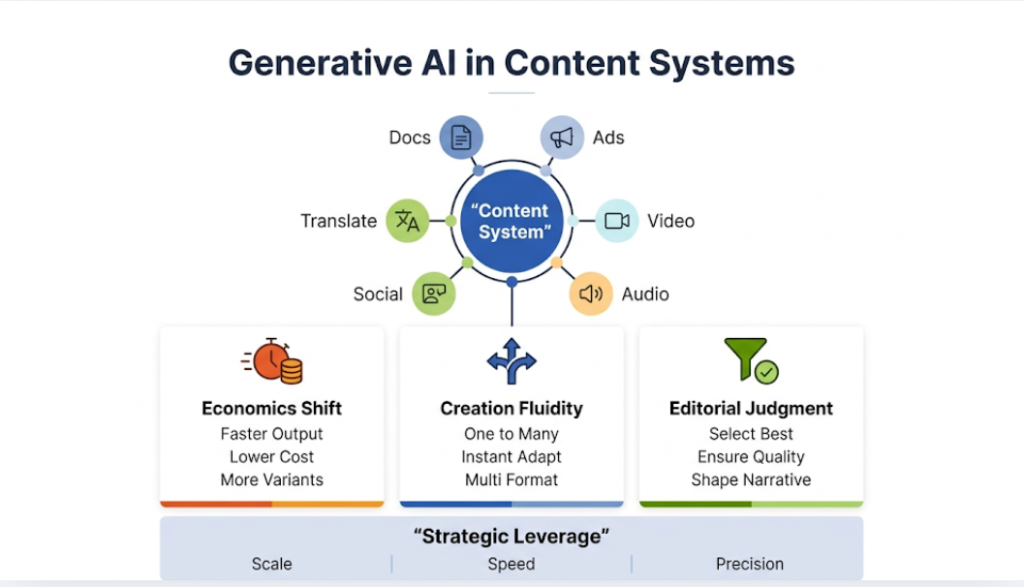

Content creation has always sat at the intersection of labor, tools, timing, and distribution economics. Generative AI is disruptive not simply because it can create content; software has helped create content for decades. The difference is that generative systems compress the cost and time required for ideation, drafting, variation, transformation, adaptation, and scaling.

A professional content team rarely creates only one asset. It creates a content system. A campaign might involve:

- Strategy documents

- Creative concepts

- Ad variants

- Landing pages

- Blog posts

- Scripts

- Voiceovers

- Subtitles

- Image sets

- Short videos

- Translations

- Follow-up messaging

Generative AI touches nearly every layer of that system.

That matters for three reasons.

It changes the economics of production

The first major shift is economic. When a team can generate ten concept directions in the time it once took to produce two, the structure of creative work changes. When a video localization workflow no longer requires a new shoot, new voice talent, and a full edit cycle for every market, the economics of international distribution change. When a writer can use a model to generate angle options, draft outlines, summarize source material, and propose alternative headlines, the throughput equation changes.

This does not automatically mean costs collapse or quality rises. It means the production frontier moves. Teams can reallocate time from repetitive drafting to judgment, positioning, quality control, narrative development, and strategic orchestration.

It blurs the boundary between creation and adaptation

Traditional content production often treated creation and adaptation as separate phases. Someone wrote the article. Then someone else repurposed it into emails, ads, social posts, summaries, transcripts, localized versions, and sales collateral.

Generative AI compresses those phases into a more fluid system. A base asset can become many derivative assets almost instantly. For example, a long report can become:

- Executive summaries

- Slide content

- Short-form posts

- Video scripts

- Audio narration

- Chatbot knowledge

- Region-specific variants

This introduces enormous leverage, but it also creates a new problem: if the source material is weak, the weakness propagates faster and farther.

It raises the premium on editorial judgment

The more content a system can generate, the more important selection becomes. That is one of the most misunderstood dynamics in this space. Generative AI does not reduce the value of taste, judgment, narrative intelligence, and domain expertise. It increases it.

When anyone can generate first drafts, mediocre content becomes abundant. The differentiator shifts toward insight, curation, verification, strategic framing, and the ability to shape outputs into something coherent and useful. Professionals who understand this become more valuable, not less.

How to read the market without getting misled

Before I get into definitions and mechanics, I want to make one thing clear. The generative AI market is noisy by design, especially as marketers sort through a rapidly expanding landscape of AI tools for marketing and content operations. Vendors describe every workflow improvement as intelligence. Agencies rebrand templated automation as innovation. Product demos hide the failure cases. Social feeds amplify outputs that are visually impressive or provocative, not necessarily commercially reliable.

That means professionals need better filters.

Do not confuse novelty with production readiness

A model that can create an eye-catching one-off image is not necessarily suitable for a brand system. A video model that can generate five seconds of cinematic motion is not automatically useful for a marketing organization that needs:

- Version control

- Approval flows

- Multilingual support

- Legal review

- Predictable outputs

Similarly, a chatbot that sounds fluent is not necessarily accurate enough for regulated content.

The question is never just whether a model can generate something. The real question is whether it can generate useful content reliably inside a workflow that professionals can govern.

Do not confuse speed with value

Speed matters. It just does not matter by itself. A model that drafts 1,000 words in ten seconds creates no value if the output is generic, inaccurate, off-brand, or legally risky. Real value comes from a combination of speed, relevance, quality, controllability, and integration.

Do not confuse broad capability with domain suitability

Many general models appear impressive because they perform reasonably well across many tasks. But professional use often depends on narrower considerations, including:

- Compliance

- Data handling

- Localization depth

- Editorial controllability

- Asset management

- Collaboration features

- Licensing terms

The best general model is not automatically the best production tool for a specific client or sector.

Those filters matter throughout the rest of this article.

What Generative AI Is and What It Is Not

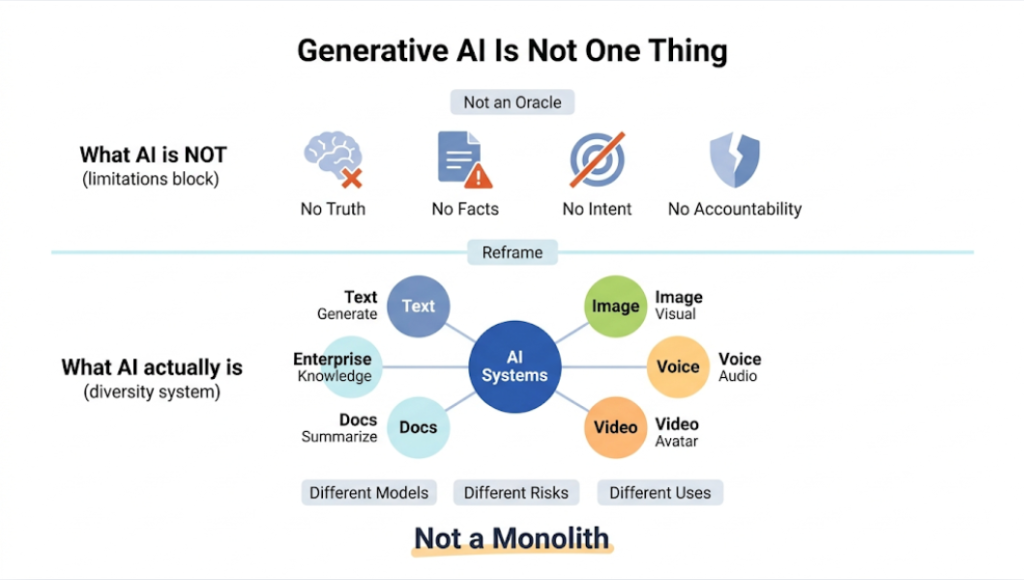

Generative AI refers to machine learning systems that produce new outputs rather than merely classifying, ranking, or predicting labels from existing data. Those outputs can take the form of text, images, audio, video, code, synthetic data, or multimodal combinations.

At a technical level, generative models learn statistical structures from massive datasets and then use those learned patterns to generate plausible new examples. In simpler terms, they model the structure of content well enough to create more content that resembles what they have seen.

That explanation is correct, but it is still too abstract for professional use. What matters in practice is that generative AI systems operate as probabilistic synthesis engines. They do not:

- Retrieve creativity from a vault

- Think the way humans think

Instead, they:

- Infer patterns, relationships, and structures from training data

- Interpret interaction context and prompts

- Predict likely continuations

- Produce outputs that satisfy constraints as effectively as possible

This is what allows them to generate content that feels coherent, adaptive, and context-aware rather than static or rule-bound.

What makes generative AI different from earlier automation

Earlier content automation depended heavily on templates, rules, or narrow prediction systems. For example:

- A rules-based copy tool might insert product names and offers into ad templates

- A recommendation engine might rank headlines

- A grammar checker might correct syntax

- A simple natural language generator might turn structured data into repetitive text summaries

Generative AI differs because it can synthesize across many dimensions at once. It can absorb style cues, semantic intent, contextual instructions, formatting constraints, audience signals, and task objectives, then generate outputs that feel flexible rather than rigidly templated.

This is why the technology feels discontinuous to many teams. It is not just automating repetitive tasks. It is entering domains that people long assumed required human-level language fluency, visual imagination, or expressive variation.

What generative AI is not

It is not an oracle. It does not guarantee the truth. It does not understand facts in the way domain experts do. It does not own intent, accountability, or editorial responsibility.

It is also not one single, unified thing. The phrase generative AI covers a heterogeneous set of model classes, architectures, data strategies, interfaces, and product experiences.

- Text generation and image generation differ materially

- Voice cloning and document summarization raise different risks

- An enterprise knowledge assistant is not interchangeable with an avatar video platform

- Different models vary in accuracy, reliability, and domain specialization

- Outputs depend heavily on prompts, context, and constraints

- Some systems are optimized for creativity, while others focus on precision or structure

Professionals make bad decisions when they treat generative AI as a monolith.

How Generative AI Works Across Modalities

To use these systems intelligently, professionals need at least a working mental model of how the core modalities function. You do not need to become a machine learning engineer. But you do need to understand the practical implications of how these systems generate outputs.

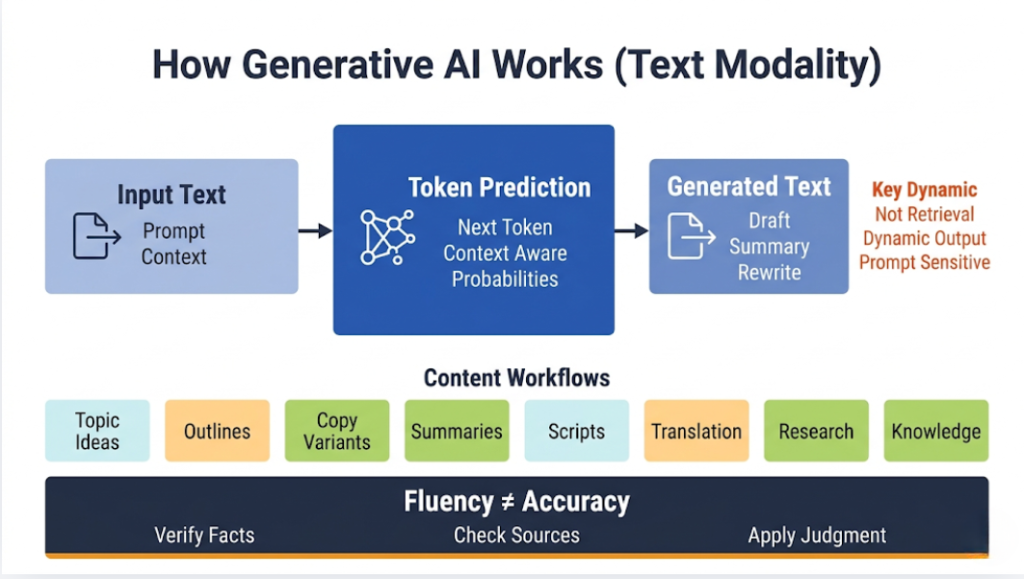

Text generation and large language models

The most commercially visible form of generative AI is text generation through large language models, or LLMs. These models train on enormous corpora of text and learn to predict the next token in a sequence. A token can be a word, part of a word, punctuation, or another text fragment.

That sounds almost trivial until you understand the consequences. A system trained at sufficient scale learns syntax, semantics, rhetorical structure, genre conventions, discourse patterns, and enough world knowledge to produce writing that appears coherent across many tasks.

It can:

- Draft articles

- Summarize reports

- Rewrite copy

- Extract structured data

- Answer questions

- Translate content

- Simulate dialogue
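To make the idea of a token concrete, here is a minimal sketch of a greedy longest-match tokenizer. It is an illustration only: the tiny `vocab` set is hypothetical, and production models use learned subword vocabularies with tens of thousands of entries and more sophisticated splitting rules.

```python
def tokenize(text, vocab):
    """Split text into the longest known pieces; unknown characters become single tokens."""
    tokens, i = [], 0
    while i < len(text):
        match = None
        # Try the longest candidate first, then shrink until a vocabulary entry matches.
        for j in range(len(text), i, -1):
            if text[i:j] in vocab:
                match = text[i:j]
                break
        if match is None:
            match = text[i]  # fall back to a single character
        tokens.append(match)
        i += len(match)
    return tokens

# Hypothetical vocabulary, chosen only for this example
vocab = {"token", "ization", " ", "is", "use", "ful", "!"}
tokens = tokenize("tokenization is useful!", vocab)
print(tokens)
# ['token', 'ization', ' ', 'is', ' ', 'use', 'ful', '!']
```

Notice that "tokenization" and "useful" each split into two pieces. That is why token counts, which drive context limits and pricing in many tools, rarely match word counts.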

Why token prediction becomes useful writing

A common mistake is to assume that “predicting the next word” should lead only to trivial autocomplete. In reality, when a model learns from enough text and enough context, token prediction becomes a highly compressed way of modeling language structure itself.

The model is not retrieving a single memorized answer from a database. At each step it uses its internal representations to compute a probability distribution over possible next tokens, conditioned on the system instructions, the prompt, and the tokens generated so far, and then selects one, usually with some randomness. That is why the same prompt can yield different outputs and why subtle wording changes in prompts can materially affect results.
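The variability described above can be sketched in a few lines. This is a simplified illustration, not any vendor's implementation: the four-word `vocab` and the `logits` scores are made up, and real models score a vocabulary of many thousands of tokens. The `temperature` parameter is the common name for the setting that controls how much randomness goes into each choice.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a lower temperature sharpens the distribution."""
    scaled = [score / temperature for score in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_next_token(vocab, logits, temperature=1.0, rng=random):
    """Draw one token in proportion to its probability."""
    probs = softmax(logits, temperature)
    return rng.choices(vocab, weights=probs, k=1)[0]

# Hypothetical scores a model might assign after the prompt "Our new product is"
vocab = ["fast", "reliable", "affordable", "purple"]
logits = [2.0, 1.5, 1.0, -1.0]

rng = random.Random(0)
samples = [sample_next_token(vocab, logits, temperature=1.0, rng=rng) for _ in range(10)]
print(samples)  # draws vary from run to run unless the seed is fixed

# At a very low temperature, the top-scoring token takes almost all the probability
print(softmax(logits, temperature=0.1))
```

For content teams, the practical takeaway is that repeatability is a setting, not a given. Lower temperatures make outputs more consistent, and higher ones make them more varied.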

Why text models are so powerful for content workflows

Text sits upstream from many other content functions. Strategy, planning, briefs, scripts, outlines, emails, landing pages, reports, messaging architecture, metadata, prompts, and editorial guidance all depend on language. That means LLMs often become orchestration tools, not just writing tools.

In practice, teams use them to:

- generate topic angles

- structure article outlines

- adapt tone for different audiences

- summarize source material

- create multiple variants of performance copy

- support research synthesis

- convert long-form content into short-form outputs

- prepare scripts for video and audio production

- support translation and localization workflows

- structure internal knowledge for reuse

The danger, of course, is that these systems can produce confident nonsense. Fluency often exceeds factual reliability. That forces professionals to distinguish between linguistic quality and epistemic quality. The first is easy to generate. The second still requires verification.

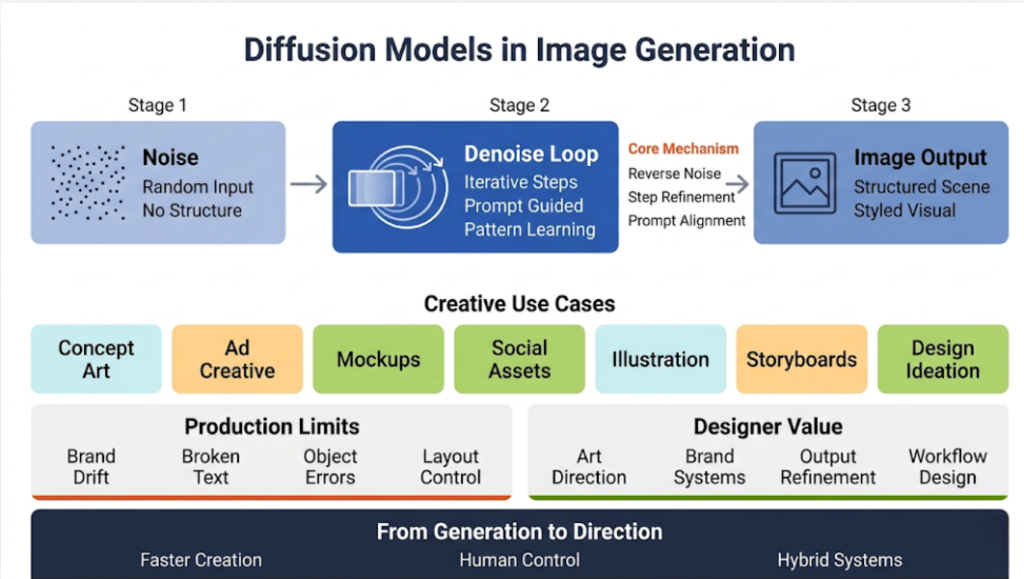

Image generation through diffusion and related models

Image generation changed the market because it gave non-specialists access to rapid visual synthesis. Instead of needing design software fluency, a user could describe a scene in natural language and receive a polished visual composition in seconds.

Most of the major modern image systems rely on diffusion-based approaches. Without getting needlessly technical, the core idea works like this: the model learns how to reverse a process that gradually corrupts images into noise. Once trained, it can begin from pure noise and iteratively denoise it into an image that aligns with a text prompt or another conditioning signal.
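For readers who want to see the mechanics, here is a toy sketch of the noising process and its inversion. It assumes a common textbook formulation with a linear noise schedule, which is my choice for illustration rather than a description of any specific product. The four-value `pixels` list stands in for an image, and the "model" is faked by handing the true noise back to the inversion step. In a real system, a trained network predicts that noise, and sampling proceeds over many small steps.

```python
import math
import random

def make_alpha_bars(steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative share of the original signal that survives after each noising step."""
    alpha_bars, running = [], 1.0
    for t in range(steps):
        beta = beta_start + (beta_end - beta_start) * t / (steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def add_noise(x0, alpha_bar, rng):
    """Forward process: blend the clean signal with Gaussian noise."""
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    signal_scale = math.sqrt(alpha_bar)
    noise_scale = math.sqrt(1.0 - alpha_bar)
    xt = [signal_scale * x + noise_scale * n for x, n in zip(x0, noise)]
    return xt, noise

def estimate_clean(xt, predicted_noise, alpha_bar):
    """Invert the forward step, given an estimate of the noise that was added."""
    signal_scale = math.sqrt(alpha_bar)
    noise_scale = math.sqrt(1.0 - alpha_bar)
    return [(x - noise_scale * n) / signal_scale for x, n in zip(xt, predicted_noise)]

rng = random.Random(42)
pixels = [0.1, 0.5, 0.9, 0.3]  # stand-in for image pixel values
alpha_bars = make_alpha_bars(steps=1000)

# Corrupt the signal roughly halfway through the schedule
noisy, true_noise = add_noise(pixels, alpha_bars[500], rng)

# A trained network would predict the noise; here we cheat and pass the true noise
recovered = estimate_clean(noisy, true_noise, alpha_bars[500])
print(recovered)
```

The quality of a generated image depends on how well the network estimates the noise at each step, and the text prompt is one of the signals that steers that estimate.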

Why diffusion models matter commercially

Diffusion models made image generation more flexible, more controllable, and generally more powerful than many earlier generative methods in mainstream use. They handle style transfer, composition, atmosphere, lighting, and object relationships with surprising sophistication. That creates practical value for:

- concept art

- ad creative

- product mockups

- social assets

- brand experimentation

- editorial illustration

- design ideation

- storyboarding

But it also creates familiar production challenges. Brand consistency remains difficult. Typography inside generated images often breaks. Object persistence can fail. Fine-grained control over layout, logo treatment, and exact product fidelity often requires iterative prompting, inpainting, editing pipelines, or hybrid workflows that combine AI generation with human design refinement.

The strategic implication for professionals

Image models do not eliminate designers. They change where designers add the most value. Instead of spending most of their time generating first-pass compositions from scratch, designers can spend more time directing visual systems, enforcing brand logic, refining selections, correcting flaws, integrating outputs into campaigns, and building reusable workflows around AI-assisted ideation.

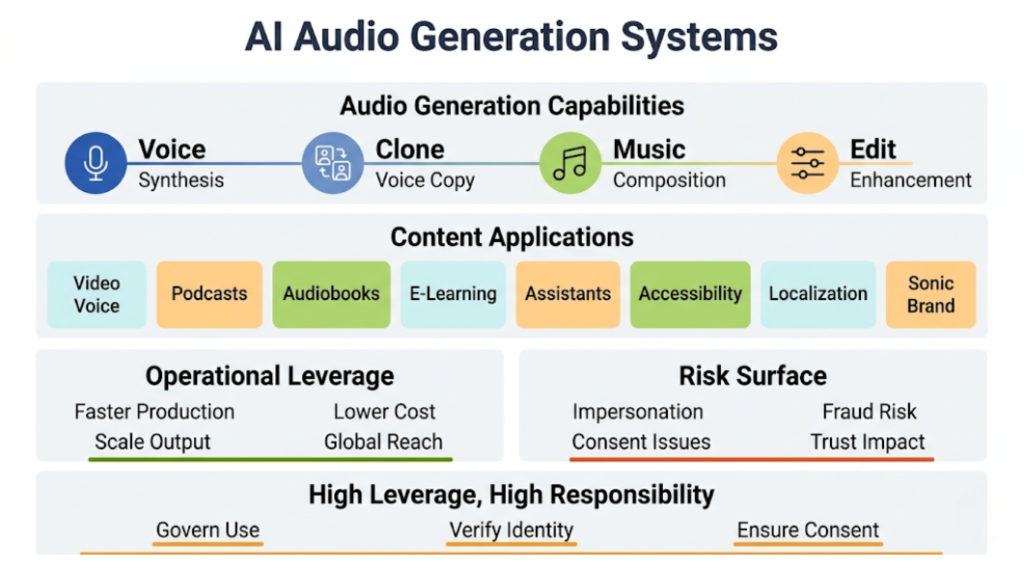

Audio generation, speech synthesis, and music creation

Audio has become one of the most interesting generative categories because it combines expressive power with operational efficiency. Voice synthesis tools can now produce highly realistic narration from text. Some systems can clone voices with very small sample inputs. Music systems can generate background scores, genre variations, or mood-based compositions. Audio editing tools can also remove filler words, clean sound, separate stems, or reconstruct missing speech.

Why audio matters more than many teams realize

A lot of organizations still think about content in visual and textual terms. That is a mistake. Audio plays a central role in:

- video narration

- podcasts

- audiobooks

- e-learning

- voice assistants

- accessibility workflows

- multilingual localization

- branded sonic identity

Generative audio collapses production steps that once required studio time, voice actors, multiple revisions, and expensive localization pipelines. That can create major operational leverage, especially for training content, product explainers, support content, and international distribution.

The risk profile is unusually high

At the same time, synthetic voice technology introduces a sharp risk surface. Voice cloning can support legitimate creative and commercial applications, but it can also enable impersonation, fraud, reputational harm, and consent violations. Professionals cannot treat audio generation as just another convenience feature. Governance, authorization, and disclosure practices matter here far more than many teams assume.

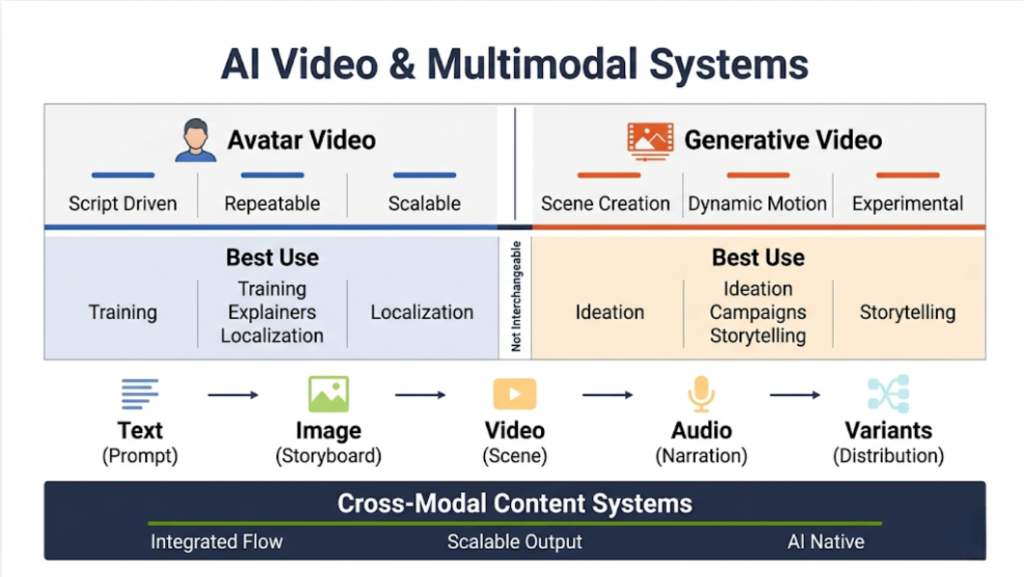

Video generation and multimodal synthesis

Video generation sits at the frontier because video combines nearly every content challenge at once: narrative coherence, scene consistency, motion realism, visual composition, pacing, sound, and often language.

Some platforms focus on avatar-based video. These systems take a script and render a virtual presenter delivering that script in a realistic way. That use case has already found strong adoption in training, onboarding, internal communication, explainers, and multilingual communication.

Other systems aim for open-ended text-to-video generation. These models generate moving scenes from natural language prompts. They have improved quickly, but they still face practical limitations around duration, scene consistency, controllability, identity persistence, and predictable brand use.

Why avatar video and generative cinematic video should not be lumped together

Professionals often talk about AI video as one category, but commercially they serve different needs.

Avatar video excels when the goal is repeatable, scalable communication. If you need a presenter to deliver scripts in multiple languages across many variants, avatar systems can be powerful and cost-effective.

Open-ended generative video is better suited to ideation, experimental campaigns, concept development, and certain short-form visual storytelling use cases. It is impressive, but in many professional contexts it is not yet as reliable as the hype suggests.

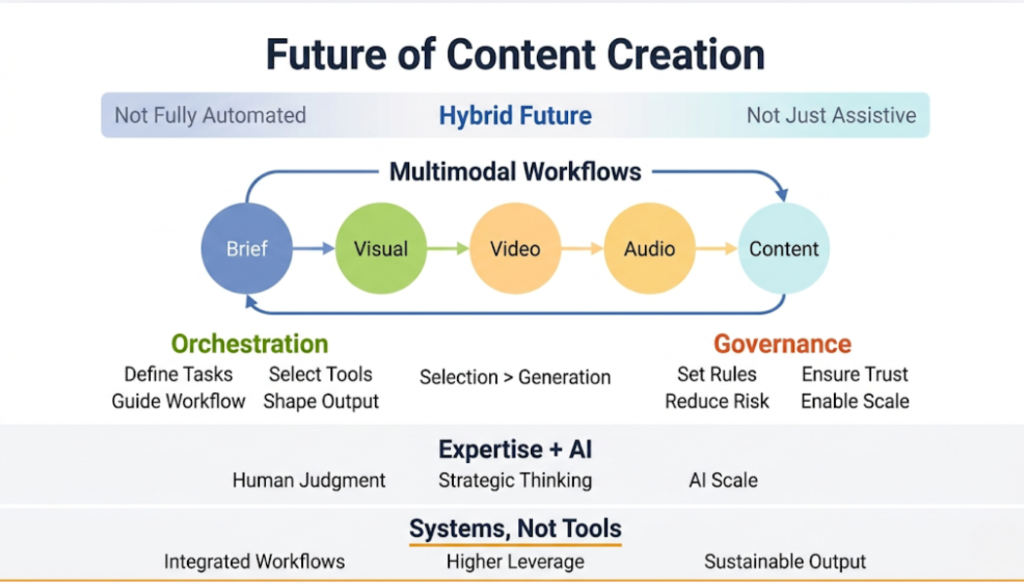

The deeper point about multimodality

As models become more multimodal, the distinctions between text, image, audio, and video workflows start to blur. A text prompt may become a storyboard, then a video, then a localized narrated version, then a short-form campaign derivative. The future of content creation will depend less on isolated tools and more on cross-modal content systems, especially as brands adapt to generative engine optimization and AI-native discovery environments.

The Strategic Foundations of Generative AI in Content Operations

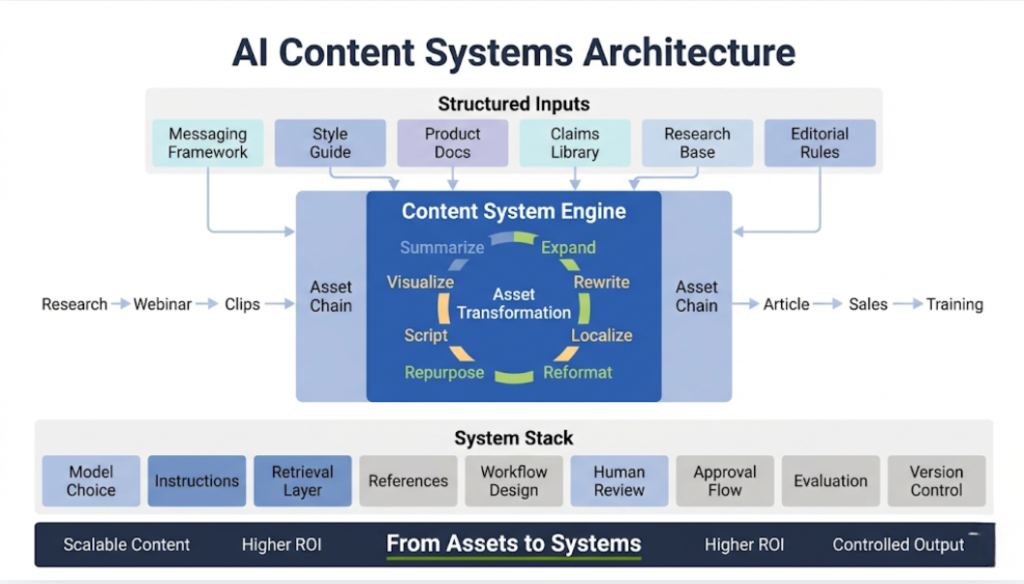

Before moving into sector use cases, I want to address the operational principle that underlies everything else: generative AI is most valuable when content is treated as a system rather than a sequence of isolated assets.

That means professionals should think in terms of content architecture.

Asset chains rather than single outputs

Every serious content organization creates chains of assets. A research paper becomes a webinar. The webinar becomes clips. The clips become social posts. The transcript becomes an article. The article becomes sales collateral. The deck becomes internal training. The campaign becomes regional variants.

Generative AI is especially powerful in these transformation layers. It can:

- summarize

- expand

- rewrite

- localize

- reformat

- retarget

- repurpose

- script

- caption

- narrate

- visualize

This is where a lot of ROI comes from. Not from pressing a button and asking AI to “write a blog post,” but from using AI to coordinate and accelerate asset transformation across the full content lifecycle.

Why structured source material matters

The best generative workflows usually start with strong source material. High-performing teams often pair AI systems with:

- messaging frameworks

- style guides

- product documentation

- approved claims libraries

- research repositories

- editorial standards

- visual systems

- compliance checklists

- localization guidance

When those foundations are absent, AI outputs become more generic and more risky. When those foundations are present, AI becomes much more usable because it can operate inside a defined strategic envelope.

Why prompting is not enough

The market overemphasized prompting for too long. Prompting matters, but it is not the whole discipline. In professional settings, quality depends on a wider stack:

- model selection

- system instructions

- retrieval layers

- reference materials

- workflow design

- human review

- approval governance

- output evaluation

- version control

A talented prompt writer can improve results. A well-architected content operation can change the economics of an entire function.
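To show how several layers of that stack fit together, here is a minimal sketch of a pipeline with retrieval, generation, automated evaluation, and a human review gate. Everything in it is a stand-in. The `retrieve` function is a naive keyword match, `fake_generate` replaces a real model call, and the `reviewer` callable represents a person approving or rejecting the draft. All names are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class Draft:
    text: str
    sources: list
    issues: list = field(default_factory=list)
    approved: bool = False

def retrieve(query, library):
    """Naive retrieval: keep reference snippets that share a word with the query."""
    words = set(query.lower().split())
    return [doc for doc in library if words & set(doc.lower().split())]

def evaluate(draft, banned_phrases):
    """Automated output check: flag banned phrases before a human sees the draft."""
    lowered = draft.text.lower()
    draft.issues = [phrase for phrase in banned_phrases if phrase in lowered]
    return draft

def run_pipeline(query, library, generate, banned_phrases, reviewer):
    sources = retrieve(query, library)
    draft = Draft(text=generate(query, sources), sources=sources)
    draft = evaluate(draft, banned_phrases)
    # Human review is the final gate; drafts with flagged issues never reach it
    draft.approved = not draft.issues and reviewer(draft)
    return draft

# Stand-in for a model call: a real system would send the query and sources to a model
def fake_generate(query, sources):
    return f"{query}: " + " ".join(sources)

library = [
    "Pricing starts at 10 dollars per seat.",
    "Support is available on weekdays.",
]
result = run_pipeline(
    "pricing overview",
    library,
    fake_generate,
    banned_phrases=["guaranteed"],
    reviewer=lambda draft: True,
)
print(result.approved, result.sources)
```

The prompt is only one input here. Swapping the model changes one function, while the retrieval, evaluation, and approval steps stay in place.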

Core Use Cases Across Industries

Now I want to move from mechanics to application. The value of generative AI becomes much clearer when we examine how different sectors actually use it in production.

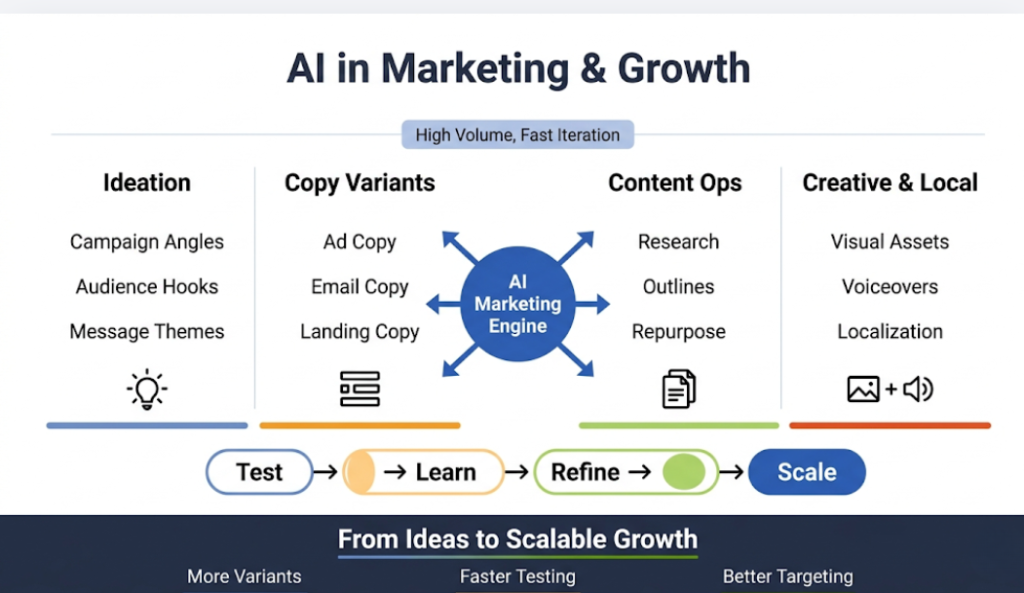

Marketing and growth

Marketing remains one of the clearest fit areas for generative AI because the function already depends on high-volume experimentation, message variation, audience segmentation, channel adaptation, and rapid turnaround. According to Salesforce’s State of Marketing Report (2025), 63% of marketers are already using generative AI tools in their workflows, which reflects how quickly this capability has moved from experimentation to operational reality. In many organizations, AI entered marketing before it entered more conservative functions simply because the workflow matched the technology’s strengths.

Campaign ideation and concept development

At the earliest stages of a campaign, generative AI can help teams explore directions faster. Strategists use models to propose campaign angles, audience hooks, headline territories, messaging variants, thematic frames, content pillars, and creative prompts. Used correctly, this does not replace strategy. It expands the option space and accelerates early-stage exploration.

The danger is obvious. If teams outsource strategy to the model, they often get polished genericity. The value appears when experienced marketers use AI to widen the search space, pressure-test ideas, and accelerate synthesis.

Performance copy and variant generation

This is one of the strongest practical use cases. Paid social, display, email, landing pages, and product marketing all benefit from systematic variation. AI can generate large sets of copy variants tailored to different audience segments, funnel stages, offers, and platforms.

That does not mean every variant will be good. It means the cost of generating a large candidate set falls dramatically. Teams can then test, refine, and select with much greater efficiency.
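Systematic variation is easier to manage when the candidate set is defined explicitly rather than prompted ad hoc. The sketch below builds one brief per combination of segment, funnel stage, and platform. The segment names, stages, platforms, and prompt wording are all hypothetical examples, and each brief would still need to be sent to a model and reviewed.

```python
from itertools import product

# Hypothetical dimensions for a campaign test matrix
segments = ["small business owners", "enterprise IT leads"]
funnel_stages = ["awareness", "consideration", "conversion"]
platforms = ["paid social", "email"]

def build_briefs(offer):
    """Create one generation brief for every segment, stage, and platform combination."""
    briefs = []
    for segment, stage, platform in product(segments, funnel_stages, platforms):
        briefs.append({
            "segment": segment,
            "stage": stage,
            "platform": platform,
            "prompt": (
                f"Write {platform} copy for {segment} "
                f"at the {stage} stage. Offer: {offer}."
            ),
        })
    return briefs

briefs = build_briefs("14-day free trial")
print(len(briefs))  # 2 segments x 3 stages x 2 platforms = 12 briefs
```

Keeping the dimensions as data makes it clear which combinations were tested, which matters once performance results come back.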

Content marketing and editorial support

For content marketing teams, AI helps with topic research, outline generation, summarization, angle development, draft expansion, title testing, metadata, and repurposing. The strongest teams do not simply publish AI drafts. They use AI to accelerate the path from expertise to publishable content.

In B2B contexts especially, expertise still drives performance. AI helps package and distribute that expertise more efficiently, but it does not create a durable moat by itself.

Creative production and localization

Image, audio, and video AI have extended marketing beyond copy. Teams now generate campaign visuals, storyboard concepts, product backdrops, localized voiceovers, synthetic presenters, motion variations, and market-specific derivatives at speeds that were previously unrealistic.

That is especially important for global brands. Localization used to impose heavy production friction. AI reduces that friction, although it also increases the need for local cultural review.

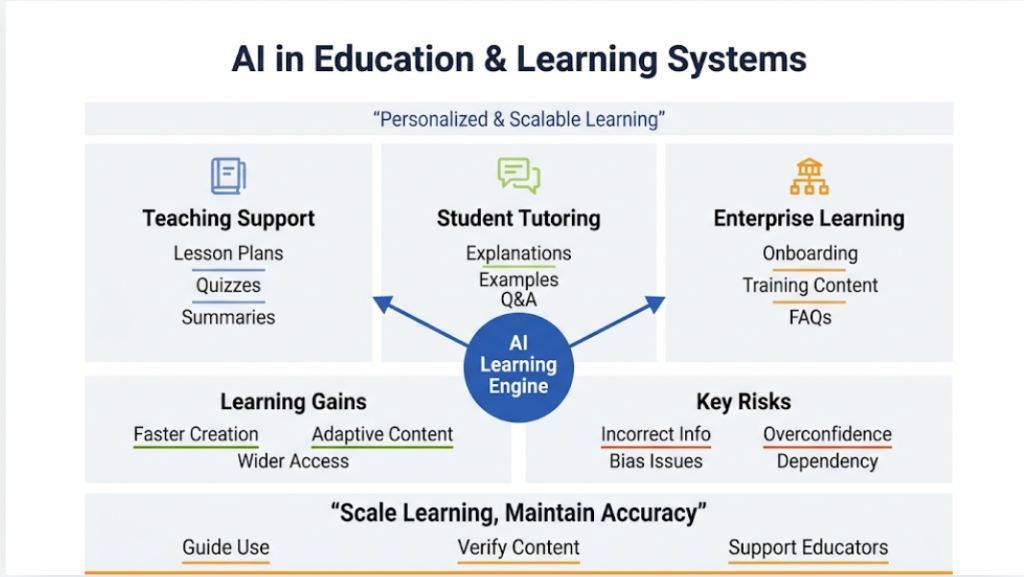

Education and learning content

Education is another natural fit because learning content often combines explanation, adaptation, repetition, and personalization. Generative AI helps convert expertise into teaching materials more efficiently.

Teaching support and curriculum development

Educators and instructional designers use AI to draft lesson plans, quizzes, reading summaries, discussion prompts, practice questions, and differentiated versions of the same content. It can help produce materials for multiple reading levels or language backgrounds. That has practical value for overloaded educators and distributed training teams.

Student support and tutoring

AI tutoring and conversational guidance may become one of the most significant long-term use cases. A model can explain concepts, generate examples, answer follow-up questions, and adapt its explanations to the learner’s level. That potential is real. So are the risks. Incorrect explanations, overconfidence, bias, and dependency issues remain major concerns.

Institutional and corporate learning

Outside formal education, enterprises use generative AI extensively in training. Instructional content, onboarding materials, product explainers, compliance modules, and internal FAQ systems all benefit from AI-assisted drafting and multimedia adaptation. This area often sees clearer ROI than public-facing marketing because the workflow is more structured and the success criteria are easier to define.

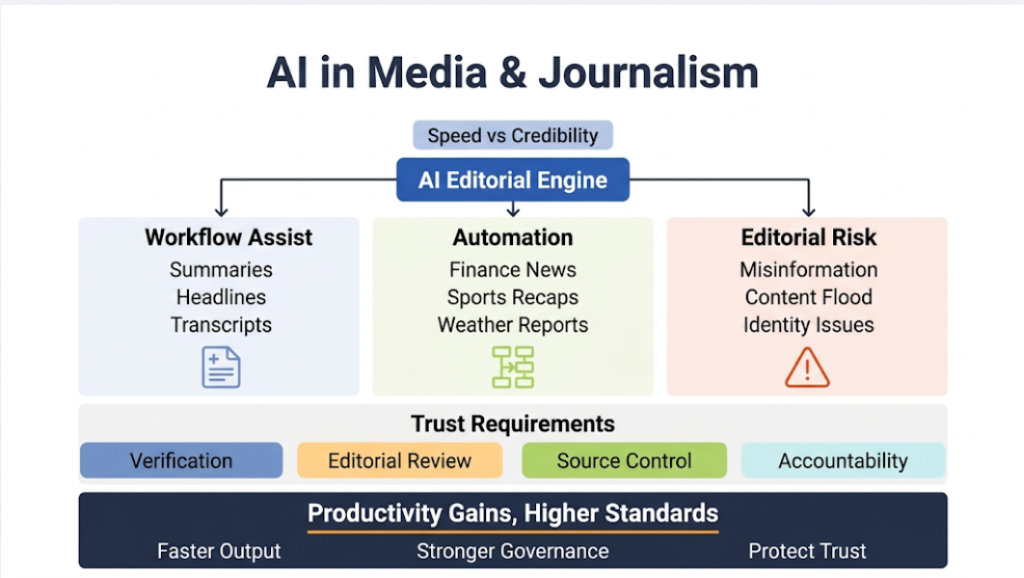

Media, publishing, and journalism

Media organizations face a more complex relationship with generative AI. They can use it productively, but they also face direct threats from it.

Workflow acceleration in editorial environments

Journalists and editorial teams use AI to summarize documents, surface key themes, generate draft headlines, extract structured information, assist with transcripts, and convert long interviews into usable notes. These are meaningful productivity gains.

Automated content categories

Some categories of journalism have long been partially automated, particularly finance, sports recaps, weather, and structured event summaries. Generative AI expands the sophistication of this automation. It can make templated outputs sound more natural and adapt them for different channels.

The trust problem

The media cannot treat generative AI like a neutral productivity layer because the technology also destabilizes trust. It contributes to misinformation volume, content flooding, synthetic identity problems, and search ecosystem distortion. Newsrooms that use AI need stricter standards than many other sectors because credibility is their product.

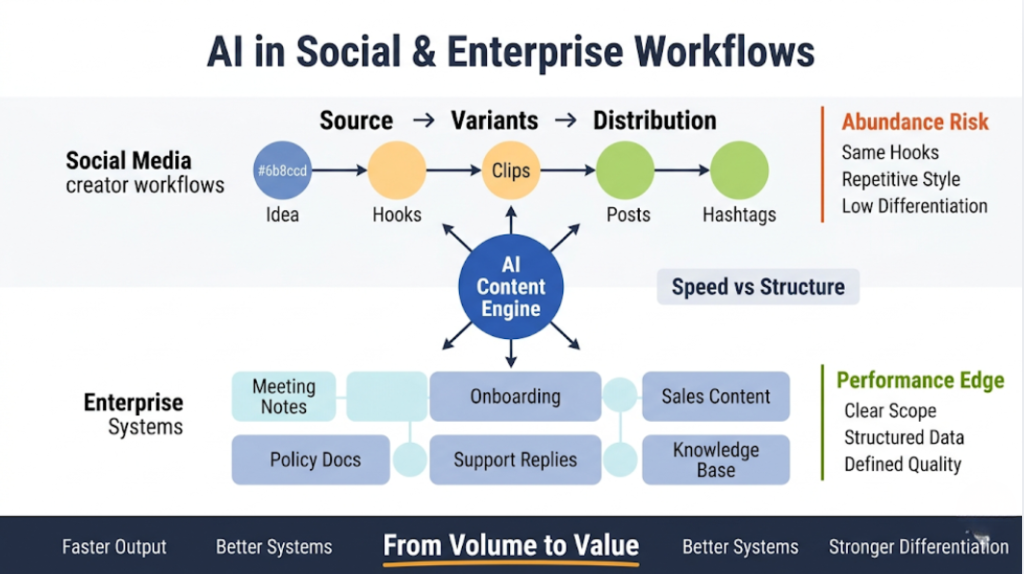

Social media and creator workflows

Generative AI compresses the gap between idea and distribution, which makes it deeply attractive in social environments.

Short-form content systems

Creators and social teams use AI to turn one source asset into many derivatives: captions, hooks, post variants, visual prompts, script ideas, clip descriptions, thumbnail text, hashtags, summaries, and audience-specific rewrites. This dramatically increases publishing velocity.

The abundance problem

But abundance creates sameness. Many AI-assisted social outputs converge toward the same hooks, the same rhythms, the same visual clichés, and the same pseudo-insight. That means professional creators need more editorial personality and strategic differentiation, not less.

Business and enterprise communication

A great deal of generative AI value sits outside public-facing content.

Internal communication and documentation

Teams use AI to generate meeting summaries, policy drafts, onboarding materials, internal announcements, support documentation, executive briefings, and knowledge-base content. These are not glamorous use cases, but they often generate very clear productivity gains.

Sales enablement and customer support content

Sales teams use AI for proposals, follow-up emails, summaries, objection handling, call notes, and account-specific messaging support. Support teams use AI to draft help center content, response macros, chatbot flows, and triage summaries.

Why enterprise use often outperforms public use

The reason is simple. Enterprise content environments usually involve narrower domains, clearer constraints, and more structured source materials. Models tend to perform better when the task space is bounded and the organization can define what good looks like.

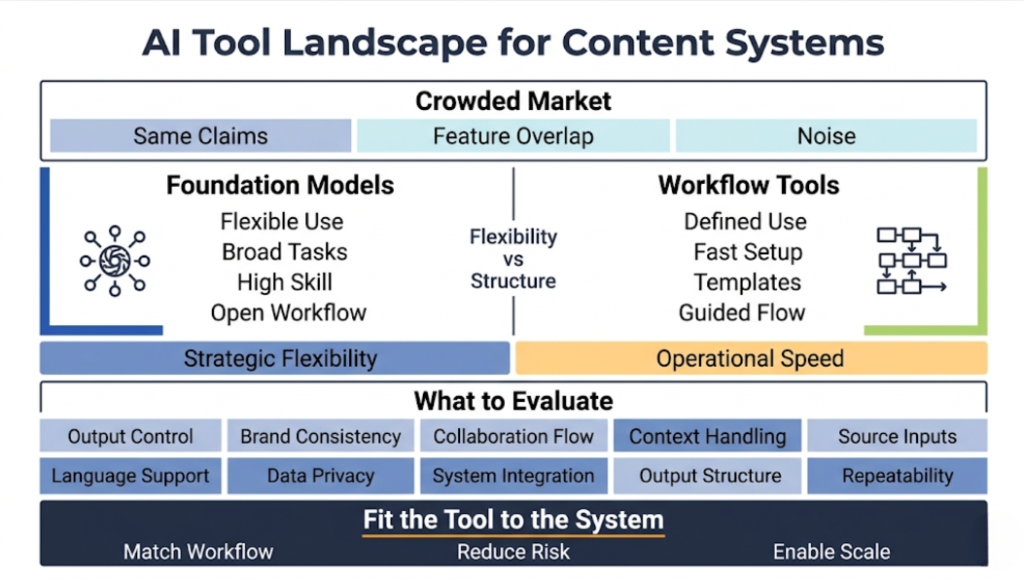

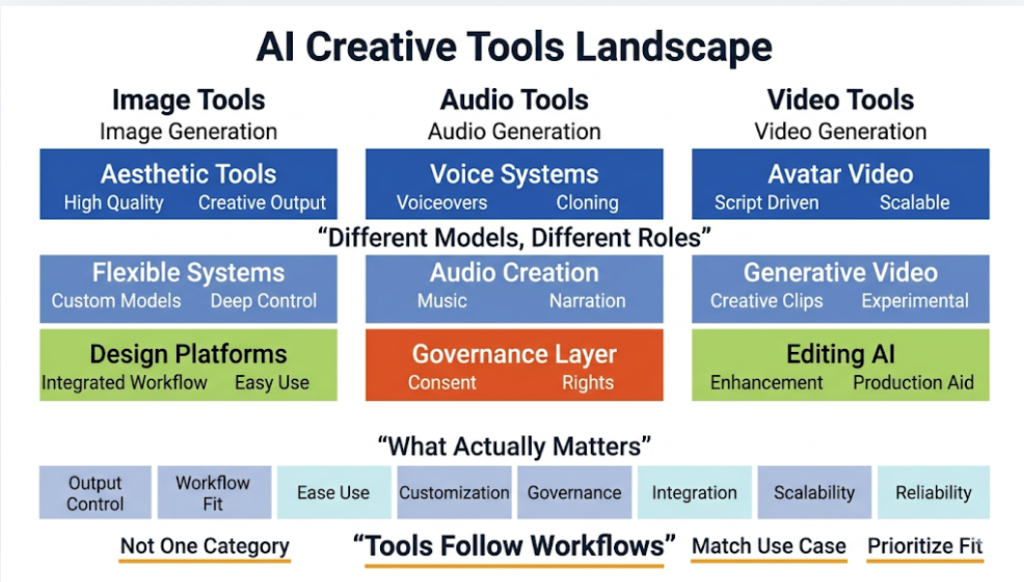

The Generative AI Tool Landscape for Content Creation

The tool market for generative AI has become crowded enough that surface-level comparisons no longer help much. Every platform claims speed, quality, creativity, and productivity. Most claim enterprise readiness. Many claim multimodality. Those claims obscure a more useful truth: the market is stratifying around different operating models, not just different features.

If I were advising a professional team, I would not start by asking which tool is “best.” I would start by asking what kind of content system the team actually runs, what degree of control it needs, how much risk it can tolerate, how important collaboration and governance are, and whether the organization needs flexible foundation models or purpose-built workflow products.

That distinction matters.

Foundation models versus workflow products

Some tools are best understood as general-purpose foundation systems. These include large language model interfaces, image generation models, and multimodal platforms that can handle many task types. Their strength lies in flexibility. They support ideation, synthesis, and a wide range of prompts, but often require more user skill and stronger process design to turn raw capability into reliable production value.

Other tools are workflow products. These wrap AI inside a more structured content use case such as:

- Script-to-video

- Voiceover generation

- Design assistance

- Blog drafting

- Meeting summarization

- Social asset generation

Their strength lies in how quickly a team can put them to work on a specific use case. They often feel easier to adopt because they package templates, controls, collaboration features, and domain-specific UX around the model.

Professionals need both categories, but for different reasons. Foundation tools support strategic flexibility, while workflow products reduce operational friction.

Text generation tools

Text remains the most mature and operationally embedded category. That is not surprising. Language sits at the center of almost every business process, and text generation entered the market before most other modalities became commercially usable at scale.

General-purpose language platforms

The dominant general-purpose platforms include systems such as ChatGPT, Claude, Gemini, and Microsoft’s AI-enabled productivity ecosystem, each of which plays a different role in AI-assisted marketing workflows. Their strengths tend to include broad reasoning support, conversational iteration, summarization, drafting, planning, and increasingly strong multimodal capabilities.

These platforms often become the first entry point for organizations because they are versatile. For example:

- A strategist can use them for market framing

- A writer can use them for outlining

- A consultant can use them for synthesis

- A sales team can use them for account preparation

- A support team can use them for response drafting

Their utility comes from range.

But that same range can become a weakness if teams mistake general capability for production specialization. A general model can draft many kinds of content, but it may not handle brand governance, publishing workflows, approval chains, or campaign-level collaboration especially well without additional layers.

Marketing-specific writing tools

Tools such as Jasper, Copy.ai, Writesonic, and related platforms emerged by narrowing the problem. Instead of trying to be universal language environments, they focused on high-frequency commercial writing workflows such as:

- blog creation

- ad copy

- landing page copy

- email campaigns

- product descriptions

- social media captions

- campaign variants

Their appeal lies in speed, templates, workflow alignment, and in some cases stronger support for marketing operations. For some teams, especially those with less internal AI maturity, a more structured platform can outperform a more powerful but more open-ended foundation model because it reduces ambiguity and lowers the skill threshold.

What professionals should actually compare

When evaluating text tools, I care less about the vendor’s benchmark claims and more about:

- controllability of outputs

- support for style and brand consistency

- collaboration and review flows

- quality of long-context handling

- ability to ingest source materials

- language support and localization performance

- privacy and data handling

- integration with the rest of the content stack

- quality of structured outputs

- consistency under repeated production use

A model that writes an elegant sample paragraph in a demo means very little. What matters is whether it can support a repeatable editorial workflow without degrading quality or creating hidden risks.
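One way to make that comparison concrete is a weighted scorecard filled in after a hands-on pilot. The sketch below is illustrative only: the criterion names echo a subset of the list above, while the weights, the 1-to-5 rating scale, and the tool names are hypothetical placeholders a team would replace with its own.

```python
# Illustrative weights (assumed, not prescribed); they sum to 1.0.
CRITERIA_WEIGHTS = {
    "controllability": 0.20,
    "brand_consistency": 0.20,
    "long_context": 0.15,
    "privacy": 0.20,
    "integration": 0.15,
    "repeatability": 0.10,
}


def weighted_score(ratings: dict[str, int]) -> float:
    """Combine 1-5 ratings into one weighted score; every criterion is required."""
    missing = set(CRITERIA_WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    total = sum(CRITERIA_WEIGHTS[name] * ratings[name] for name in CRITERIA_WEIGHTS)
    return round(total, 2)


# Hypothetical ratings from a team's own pilot, not from vendor claims.
pilot_ratings = {
    "tool_a": {"controllability": 4, "brand_consistency": 3, "long_context": 5,
               "privacy": 3, "integration": 2, "repeatability": 4},
    "tool_b": {"controllability": 3, "brand_consistency": 4, "long_context": 3,
               "privacy": 5, "integration": 4, "repeatability": 4},
}

# Rank tools from highest to lowest weighted score.
ranking = sorted(pilot_ratings, key=lambda tool: weighted_score(pilot_ratings[tool]), reverse=True)
print(ranking)
```

The point of the weights is to force an explicit conversation about priorities. A regulated team might weight privacy far more heavily than a solo creator would.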

Image generation platforms

Image generation has matured into a three-tier market. At the top are premium creativity tools known for aesthetic strength. In the middle are flexible open or semi-open systems with broad customization possibilities. At the workflow layer are design platforms that integrate image generation into broader creative tooling.

High-aesthetic platforms

Midjourney became influential because it produced visually compelling results that many users found more artistic and more immediately impressive than competing systems. It became a favorite for concept art, moodboards, campaign ideation, and exploratory creative work.

That strength comes with tradeoffs. Many teams love the aesthetic quality, but production teams often need more than beauty. They need reproducibility, layout control, collaboration, asset management, editability, and clearer integration with enterprise workflows.

Flexible generative systems

Stable Diffusion and the broader open-model ecosystem matter because they introduced flexibility, custom model development, and the possibility of private deployment or deeper customization. For professionals who need more control, especially around fine-tuning, internal workflows, or bespoke style environments, open systems have strategic value.

The tradeoff is complexity. Flexibility often requires more technical capability, more infrastructure, and more process maturity.

Design-integrated platforms

Canva AI, Adobe Firefly, and related tools represent a different model. They embed generation inside broader design environments that professionals already use. For many teams, this matters more than raw model novelty. If a designer can generate, edit, place, resize, brand-align, and export assets from within one workflow, adoption becomes much easier.

That is one of the recurring themes in generative AI. The best standalone model is not always the best business tool. Workflow gravity matters.

Audio generation and voice platforms

Audio tools have advanced quickly, especially in voice synthesis, dubbing, and synthetic narration.

Voice generation and cloning

Platforms such as ElevenLabs, Murf, Lovo, and Resemble have helped establish synthetic voice as a real commercial medium. These systems support:

- voiceovers

- narration

- multilingual dubbing

- custom voice development

- pronunciation controls

- audio for training and support materials

The best systems now produce speech that sounds natural enough for many professional use cases, especially if the listener does not know in advance that the voice is synthetic. That has enormous practical value in training, e-learning, product education, customer communication, and internal media.

Audio quality is only part of the decision

Professionals should not evaluate these tools only on realism. They also need to examine:

- consent and authorization mechanisms

- rights management

- language coverage

- emotional range

- editing flexibility

- alignment with localization workflows

- enterprise safeguards

- watermarking or detection features

- contractual protections

The strongest audio platforms will not simply offer realism. They will offer governance.

Video generation platforms

Video tools divide broadly into three categories: avatar-based platforms, generative visual platforms, and AI-assisted editing environments.

Avatar-first platforms

Synthesia remains one of the clearest examples of a commercially successful AI video workflow product because it solves a specific, repeatable problem. Organizations need presenter-style videos for onboarding, training, internal communication, support, and external education. Synthesia replaces the logistics of filming with a script-driven synthetic presenter workflow.

This is not cinematic storytelling. It is scalable communication infrastructure. That distinction matters because it explains why avatar platforms have found stronger enterprise traction than many more visually ambitious tools.

Generative visual video systems

Runway and newer model families represent the more open-ended side of AI video. These tools can generate clips, transform footage, alter scenes, create effects, and support experimental visual workflows. They are creatively exciting and strategically important. They also remain less operationally stable for many mainstream business uses than the hype often suggests.

Editing and enhancement platforms

A third class of tools uses AI to enhance video production rather than replace it. These systems provide transcription, subtitle generation, background removal, reframing, voice cleanup, overdubbing, clip extraction, or rough-cut assistance. In practice, these may deliver more consistent value to many organizations than full generative video.

Professionals should remember that AI in video is not one thing. There is a big difference between synthetic presenter automation, cinematic clip generation, and AI-assisted post-production.

The real tool selection question

When clients ask me which generative AI platform they should choose, the question is usually framed too narrowly. They ask for a tool. What they actually need is a content operating model.

The right stack depends on:

- content volume

- channel mix

- language requirements

- legal and compliance constraints

- data sensitivity

- need for collaboration

- need for workflow standardization

- quality tolerance

- creative ambition

- technical capability

- budget

A solo creator, a global consumer brand, a regulated B2B company, an online education provider, and a media newsroom should not buy the same stack for the same reasons.

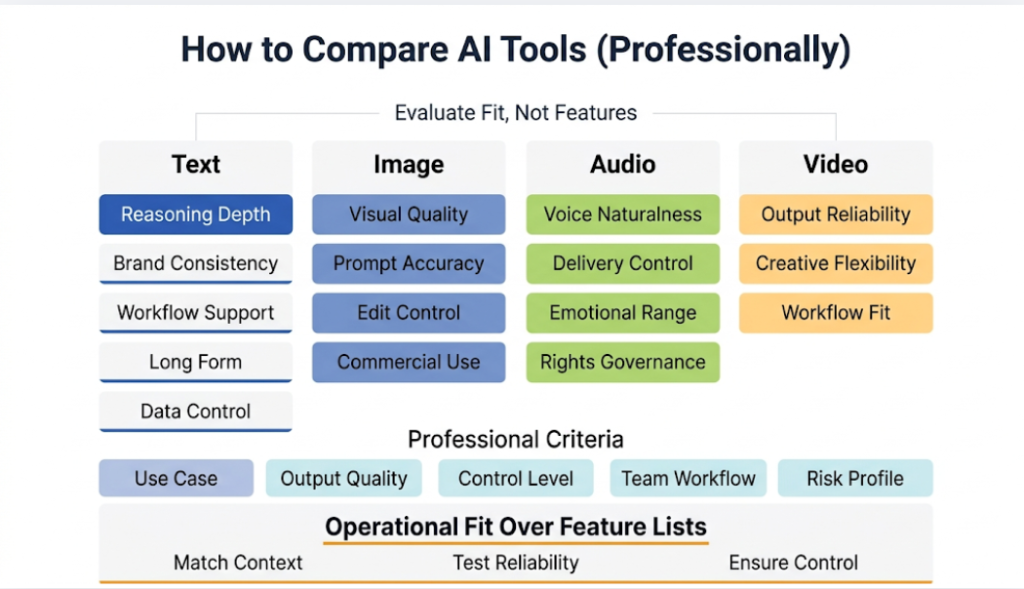

Comparing Generative AI Tools by Category

I want to go one level deeper here because most tool comparisons are still too shallow. They compare lists of features rather than operational fit.

Comparing text tools

For professional text workflows, I would assess tools along five dimensions.

Breadth of reasoning and synthesis

General-purpose platforms tend to perform best when the task requires synthesis, strategic interpretation, flexible iteration, and long-form reasoning support. These are useful for research synthesis, complex outlines, workshop preparation, or scenario development.

Brand and voice consistency

Marketing-oriented writing tools often perform better when the task requires structured, repeatable copy tied to a known commercial format. Their real value often comes not from raw language power but from packaging AI into a brand-aware operational flow.

Collaboration and workflow support

A lone strategist can work effectively inside a general model interface. A team producing large volumes of approved content often needs shared workflows, project structures, versioning, templates, permissions, and content operations features.

Long-form performance

Many tools can produce decent short-form outputs. Far fewer can support sophisticated long-form writing without collapsing into repetition, generic structure, or coherence drift. Professionals producing serious articles, white papers, or reports need to test this carefully.

Data handling and enterprise control

This becomes decisive in client work, confidential research, regulated communication, and internal knowledge applications.

Comparing image tools

In image generation, I would focus on four professional criteria.

Aesthetic quality

This still matters. A tool that produces dead, synthetic, or visually incoherent results will not support serious creative work. But aesthetic quality should be judged in relation to the intended use case, not in the abstract.

Prompt responsiveness

Some tools are better at faithfully translating complex written prompts into visual relationships. Others produce striking images but drift from the prompt. Which of these matters more depends on the workflow.

Editability and control

For campaign work, product work, and brand work, editability is often more important than raw first-pass impressiveness. Teams need inpainting, outpainting, reference image control, style continuity, and integration with broader design workflows.

Commercial usability

A beautiful image generator is not automatically a practical commercial tool. Professionals need to understand licensing, indemnity, integration, asset workflow, and policy terms.

Comparing audio tools

Audio tools should be compared not only on realism but also on business fit.

Naturalness and intelligibility

Synthetic voices need to sound credible and remain understandable across accents, pacing variations, and multilingual usage.

Emotional range and delivery control

Training content may require neutral clarity. Marketing content may require persuasion and tonal warmth. Entertainment content may require expressive variability. The same tool rarely excels equally across all these demands.

Voice rights and governance

This category deserves unusually close attention. Professionals should ask who owns custom voices, how authorization is verified, what happens when a voice model is disputed, and how misuse is prevented.

Comparing video tools

Video comparisons need to separate aspiration from operational readiness.

Reliability for repeatable business output

Avatar systems often win here because the use case is structured and predictable.

Creative flexibility

Open-ended generative video tools win here, but often with lower reliability.

Integration with existing production workflows

AI video tools are most useful when they fit into real content production environments rather than forcing teams to rebuild the entire workflow around the tool.

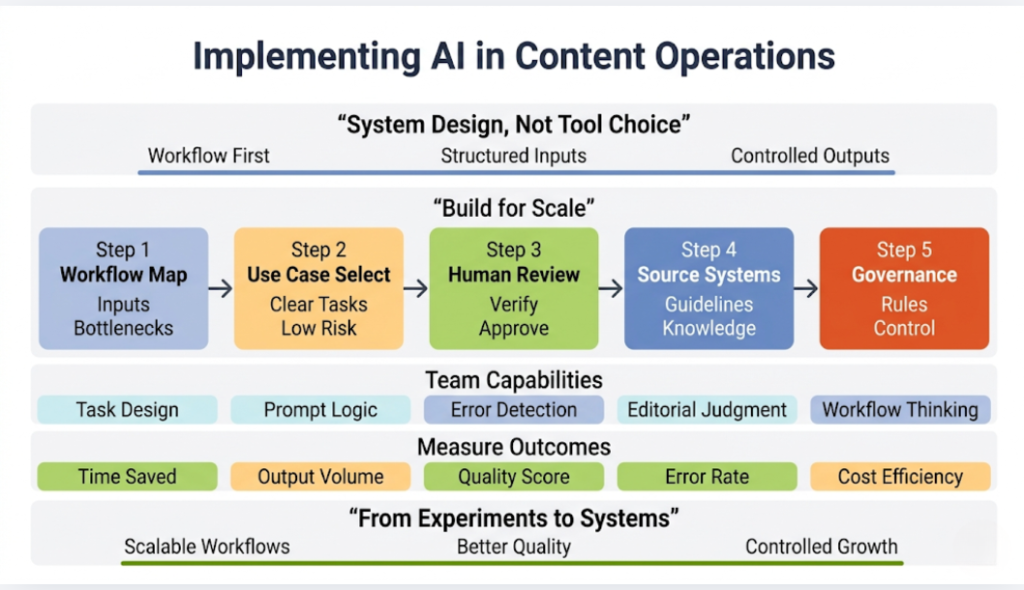

How to Implement Generative AI in Content Teams and Client Environments

This is where many organizations get stuck. They experiment enthusiastically, collect a few impressive examples, then fail to turn those examples into durable operational value. The problem is rarely that the models are useless. The problem is that implementation is treated as a tool decision rather than an organizational design challenge.

Start with workflow diagnosis, not vendor enthusiasm

The first thing I would do with any team is map the content workflow as it actually exists, not as leadership imagines it exists.

- Where does content creation begin?

- What inputs drive it?

- Where do delays occur?

- Which steps are repetitive?

- Which steps require high judgment?

- Where do quality failures happen?

- Which outputs need localization?

- Which assets feed other assets?

- Where are legal reviews involved?

- Where does content actually get reused?

Without that map, organizations adopt AI randomly. They insert it wherever someone happens to try a prompt rather than where it creates the most leverage.

Identify high-leverage, low-chaos use cases first

The best initial use cases usually share a few traits:

- clear inputs

- clear outputs

- repetitive structure

- moderate risk

- easy quality evaluation

- measurable time savings

This is why many teams see success first with:

- meeting summaries

- product descriptions

- campaign variants

- email draft support

- support documentation

- transcript summarization

- training script generation

- blog outline development

- asset repurposing

These use cases help teams build AI fluency without immediately exposing the organization to the full risk of unsupervised public-facing generation.
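The screening traits above can be turned into a simple checklist. This is a minimal sketch under stated assumptions: the `UseCase` fields, the risk labels, and the rule that low or moderate risk is acceptable are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    clear_inputs: bool
    clear_outputs: bool
    repetitive: bool
    risk: str  # "low", "moderate", or "high" (labels assumed for this sketch)
    easy_to_evaluate: bool
    measurable_savings: bool


def is_good_first_pilot(use_case: UseCase) -> bool:
    """True when every trait holds and the risk level is not high."""
    traits = [
        use_case.clear_inputs,
        use_case.clear_outputs,
        use_case.repetitive,
        use_case.easy_to_evaluate,
        use_case.measurable_savings,
    ]
    return all(traits) and use_case.risk in {"low", "moderate"}


# Hypothetical assessments a team might record during workflow mapping.
candidates = [
    UseCase("meeting summaries", True, True, True, "low", True, True),
    UseCase("public thought leadership", False, True, False, "high", False, False),
]
shortlist = [c.name for c in candidates if is_good_first_pilot(c)]
print(shortlist)
```

A boolean checklist is deliberately crude. Its value is in making the team write down why a use case qualifies before anyone starts prompting.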

Build human review into the system from the start

One of the easiest ways to derail trust in AI adoption is to imply that human review is temporary and will disappear once the tools improve. In professional content environments, human review is not a transitional phase. It is a structural requirement.

The question is not whether humans remain involved. The question is where their attention creates the most value.

In a mature AI-assisted workflow, humans should focus on:

- strategic framing

- factual verification

- brand alignment

- legal and compliance review

- narrative coherence

- quality selection

- final approval

If a team treats AI outputs as publish-ready by default, quality will drift and stakeholder trust will erode.

Create source-of-truth systems

High-performing AI content systems depend on strong source material. If a company wants better AI outputs, it often needs better internal content architecture first.

That means organizing:

- approved messaging

- product claims

- style guides

- terminology rules

- customer evidence

- knowledge repositories

- policy standards

- campaign frameworks

- localization instructions

AI becomes much more valuable when it operates against reliable source systems rather than against vague prompts and scattered documents.

Define governance explicitly

Professionals often underestimate how quickly AI use spreads informally. One marketer finds a useful tool, then the sales team starts using it, then support experiments with another platform, then an agency partner introduces a third. Before long, the organization has AI everywhere and governance nowhere.

At minimum, serious teams need clear guidance on:

- approved tools

- data sharing rules

- review responsibilities

- disclosure standards

- restricted use cases

- IP and licensing checks

- voice and likeness permissions

- escalation paths for questionable outputs

- recordkeeping for client-sensitive work

Governance should not smother experimentation. It should make experimentation safe enough to scale.
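Guidance like this is easier to apply when part of it is written down as data rather than left in a slide deck. The sketch below assumes a hypothetical policy with made-up tool names, use-case labels, and data classes. It covers only three of the items above and is not a substitute for legal or procurement review.

```python
# All names below are hypothetical placeholders for a team's own policy.
POLICY = {
    "approved_tools": {"tool_a", "tool_b"},
    "restricted_use_cases": {"synthetic_endorsement", "unlicensed_voice_clone"},
    "blocked_data_classes": {"client_confidential", "customer_personal_data"},
}


def policy_issues(tool: str, use_case: str, data_classes: set[str]) -> list[str]:
    """Return human-readable policy issues; an empty list means none were found."""
    issues = []
    if tool not in POLICY["approved_tools"]:
        issues.append(f"tool not approved: {tool}")
    if use_case in POLICY["restricted_use_cases"]:
        issues.append(f"restricted use case: {use_case}")
    # Sort so the report order is stable across runs.
    for data_class in sorted(data_classes & POLICY["blocked_data_classes"]):
        issues.append(f"blocked data class: {data_class}")
    return issues


print(policy_issues("tool_a", "blog_outline", {"public_marketing"}))
print(policy_issues("tool_c", "blog_outline", {"client_confidential"}))
```

A non-empty result would feed the escalation path rather than silently block work, which keeps the check proportionate.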

Train for judgment, not just prompting

A lot of AI training still focuses on prompt tips. That is better than nothing, but it is incomplete. Professionals need training in:

- task decomposition

- source selection

- claim verification

- prompt structuring

- error recognition

- editorial refinement

- model selection by use case

- workflow design

- bias awareness

- legal and policy boundaries

The strongest practitioners are not simply good prompters. They are good editors, good evaluators, and good system thinkers.

Measure the right outcomes

Organizations often make one of two mistakes. They either measure nothing, or they measure only speed.

Speed matters, but it is not enough. According to SEO.com AI marketing research (2025), 93% of marketers report that AI helps them create content faster. That supports the productivity case, but faster output does not guarantee quality or effectiveness. Serious implementation should track:

- time saved

- output volume

- revision load

- quality scores

- publishing velocity

- campaign performance

- localization turnaround

- cost per asset

- error rates

- stakeholder satisfaction

The real goal is not just faster content. It is better content operations.
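A few of these measures reduce to simple arithmetic over per-asset records. The following is a minimal sketch, assuming each asset is logged with a cost, a count of revision rounds, and a count of errors caught in review. The field names and sample figures are hypothetical.

```python
def content_ops_metrics(assets: list[dict]) -> dict[str, float]:
    """Summarize cost per asset, average revision rounds, and error rate."""
    if not assets:
        raise ValueError("need at least one asset record")
    count = len(assets)
    return {
        "cost_per_asset": sum(a["cost"] for a in assets) / count,
        "avg_revision_rounds": sum(a["revision_rounds"] for a in assets) / count,
        # Share of assets in which review found at least one error.
        "error_rate": sum(1 for a in assets if a["errors_found"] > 0) / count,
    }


# Hypothetical records; field names and values are assumptions for this sketch.
ai_assisted = [
    {"cost": 40.0, "revision_rounds": 1, "errors_found": 0},
    {"cost": 60.0, "revision_rounds": 3, "errors_found": 2},
    {"cost": 50.0, "revision_rounds": 2, "errors_found": 0},
    {"cost": 50.0, "revision_rounds": 2, "errors_found": 0},
]
summary = content_ops_metrics(ai_assisted)
print(summary)
```

Running the same function over a pre-AI baseline gives a like-for-like comparison, which is more informative than a speed figure on its own.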

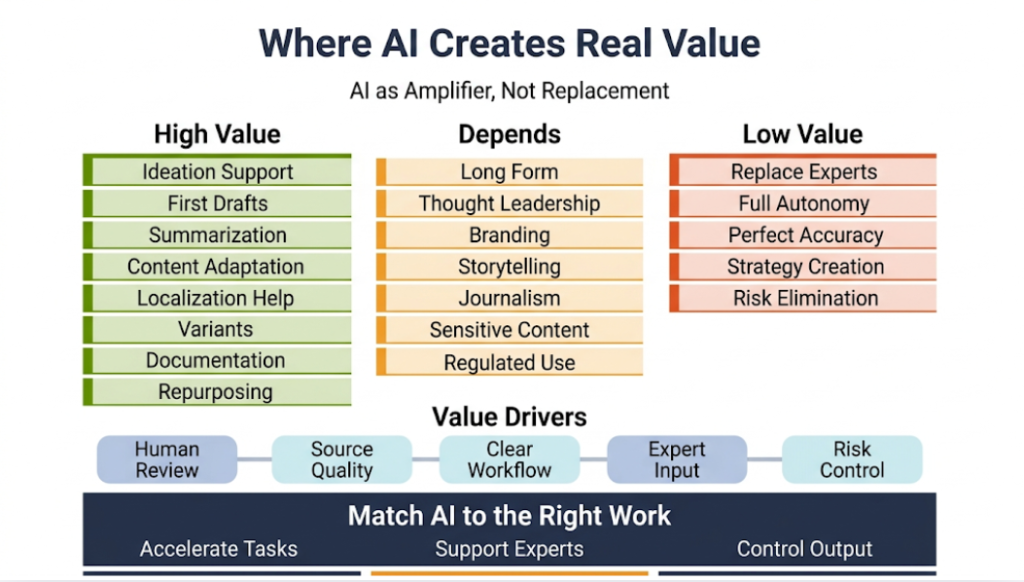

Where Generative AI Creates Real Value and Where It Does Not

The market still contains too much magical thinking, so it helps to say this directly. Generative AI creates tremendous value in some parts of the content lifecycle. In other parts, its value is much weaker than vendors suggest.

Where it clearly creates value

It tends to perform strongly in:

- ideation support

- first-pass drafting

- structured transformation

- summarization

- adaptation across formats

- localization assistance

- transcript and note conversion

- variant generation

- routine internal documentation

- training content production

- asset repurposing

These are workflows where speed and structure matter, the error surface is manageable, and human review can efficiently improve outputs.

Where value is more conditional

It can create value in:

- long-form authoritative writing

- public thought leadership

- high-end visual branding

- emotionally nuanced storytelling

- journalism

- sensitive client communication

- regulated content

But in these areas, value depends heavily on source quality, expert oversight, model choice, process maturity, and the organization’s standards. AI can help. It just cannot safely carry the burden alone.

Where it often disappoints

It often disappoints when organizations expect it to:

- replace expertise

- invent differentiated strategy from thin air

- produce final-ready premium content without review

- maintain perfect factual reliability

- understand organizational nuance automatically

- protect them from legal or reputational risk

The better mental model is this: generative AI is an amplifier and accelerator inside content systems. It is not a substitute for expertise, judgment, or accountability.

Ethical and Legal Considerations Professionals Cannot Treat as Side Issues

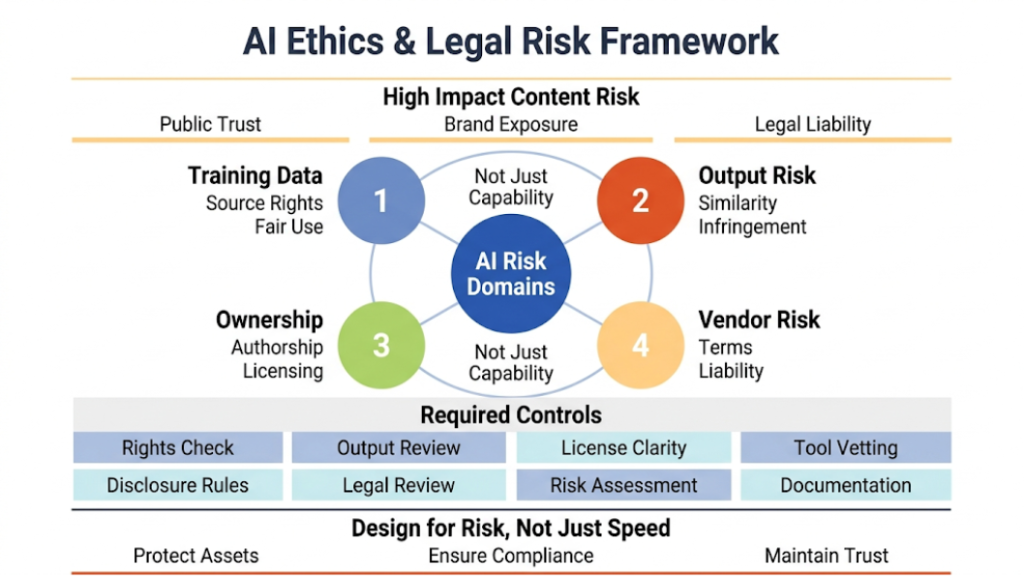

If the first two parts of this article focused on capability, workflow, and value creation, this final section needs to focus on risk with equal seriousness. Too many organizations still treat ethics and legal review as peripheral layers that can be applied after the tool decision has already been made. In practice, the opposite is true. For many serious professional uses of generative AI, the legal and ethical landscape should shape the use case from the beginning.

This is especially important in content creation because content sits close to public trust, brand reputation, rights ownership, and social influence. When a model generates a weak internal summary, the damage may be limited. When it generates misleading customer claims, copyright-sensitive visuals, synthetic endorsements, or false news-like content, the consequences can escalate quickly.

I want to look at the major issues directly.

Copyright, training data, and ownership

Copyright remains one of the most contested areas in the entire generative AI ecosystem. Professionals cannot afford to speak about it casually.

At the center of the debate are several distinct but related questions:

- Can AI developers lawfully train models on copyrighted materials without explicit permission?

- When an output resembles existing protected work, how should infringement be assessed?

- Who owns AI-generated content?

- What rights, if any, does a client receive when a vendor-generated asset enters a campaign or publication workflow?

- How do licensing terms differ across platforms?

These questions do not produce one clean answer because they arise at different layers of the stack.

The training-data controversy

A major share of current litigation and policy debate focuses on the training process itself. Rights holders argue that model developers ingested protected books, articles, images, code, music, and other materials without consent or compensation. AI companies often argue that training is transformative, non-expressive in a direct sense, and should fall under fair use or analogous doctrines depending on jurisdiction.

Whatever one thinks of the legal arguments, the practical takeaway for professionals is straightforward. The training-data controversy is not theoretical. It affects vendor risk, procurement, platform policy, and potentially downstream client exposure.

This does not mean every use of a model is automatically unlawful or unsafe. It means responsible teams need to understand that the model supply chain itself may carry unresolved legal uncertainty.

Output-level infringement risk

Even if one brackets the training question, output risk remains. An image that too closely resembles a known character, imitates a living artist’s distinctive style in a commercial context, or copies an existing branded asset may create exposure. A text output that reproduces protected material too closely may do the same.

This is where professionals often make a serious mistake. They assume that because a model generated the output probabilistically, the output is legally insulated. That assumption is dangerous. Infringement analysis still turns on the content of the output, not on whether a machine participated in producing it.

The correct question is not “Did AI make this?” The correct question is “What rights issues does this output actually raise?”

Ownership of AI-generated work

Ownership is another area where professionals need precision. In some jurisdictions, copyright protection depends on human authorship. That creates complications for purely machine-generated works. In practice, commercial value may still exist even when copyright protection is uncertain, but the uncertainty matters. It affects exclusivity, enforceability, and contractual expectations.

For clients, this becomes especially important in commissioned work. If a campaign asset relies heavily on AI generation, the agency or creator should be very clear about:

- how the asset was made

- which tools were used

- what license terms apply

- what warranties can or cannot be made

- what originality review took place

Professionals who fail to address this early often create future problems for legal teams and procurement teams.

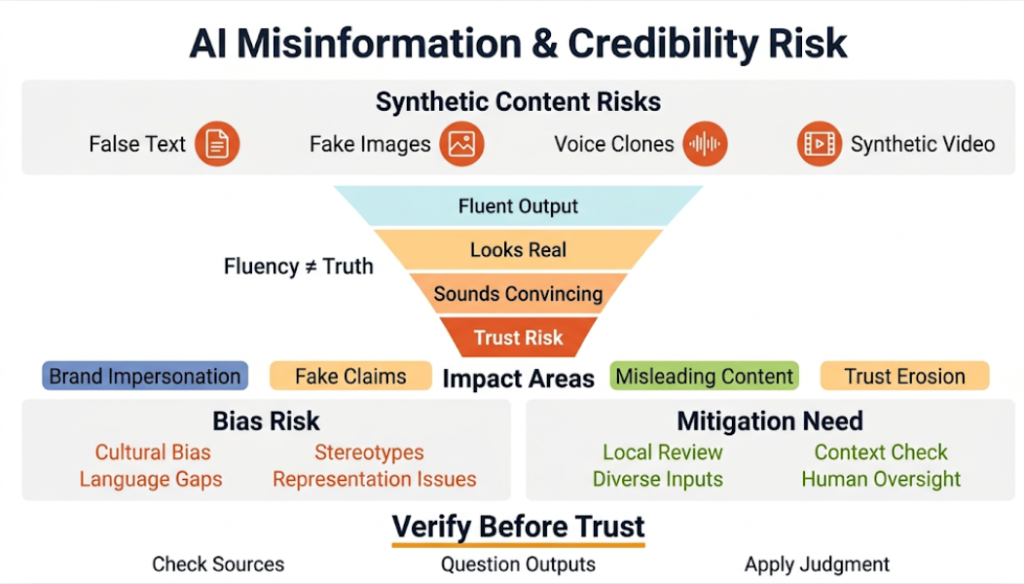

Misinformation, synthetic media, and credibility risk

Generative AI dramatically lowers the cost of producing plausible falsehoods. That is one of the most consequential facts in the market.

Text models can generate convincing but incorrect explanations, summaries, or narratives. Image tools can create fabricated scenes that resemble documentary evidence. Voice synthesis can imitate real people. Video systems can produce synthetic footage that non-experts may read as authentic. Even when the outputs are not perfect, they may be good enough to mislead in the conditions that matter most, especially when speed, emotion, and scale are involved.

Why content professionals should care even outside journalism

Some teams still assume misinformation is a problem mainly for politics, elections, and news organizations. That is too narrow. Any professional content operation that communicates with customers, employees, students, investors, or the public sits inside an environment where synthetic content can affect trust.

A brand can be impersonated.

An executive voice can be cloned.

A fake customer statement can circulate.

A synthetic screenshot can support a false claim.

An AI-generated summary can distort the meaning of a real report.

A synthetic support interaction can mislead vulnerable users.

Even if your own team uses AI responsibly, the surrounding information environment may not. That means content professionals now need a stronger verification culture.

Why fluency makes the problem worse

Older forms of low-quality automation often failed visibly. The writing sounded robotic. The visuals looked crude. The fraud cues were easier to spot. Generative AI changes that because it produces outputs with enough fluency to reduce skepticism.

This is especially dangerous for experts because expertise can produce overconfidence. People who understand a domain may still over-trust a polished summary or a plausible synthetic asset if it appears to fit existing expectations.

Professional teams need to train themselves and their clients out of this trap. Fluency is not truth. Realism is not authenticity. Synthetic polish is not evidence.

Bias, representation, and cultural distortion

Bias remains one of the most persistent and under-discussed content risks in generative AI. The problem is not just that models produce offensive stereotypes, although that still happens. The deeper issue is that generative systems often encode dominant patterns from their training data and reproduce them in ways that shape representation, language, and perceived normality.

This matters directly in content creation because professional outputs do more than inform. They frame markets, audiences, identities, aspirations, and social roles.

How bias appears in practice

In text generation, bias can appear through tone assumptions, default examples, normative framing, geopolitical blind spots, or uneven treatment of cultures and languages. In image generation, bias can appear through stereotyped portrayals of:

- professions

- beauty norms

- class signals

- ethnicity

- gender expression

- regional aesthetics

In voice and video systems, bias can appear through:

- accent quality

- linguistic support

- representational defaults

- uneven realism across different populations

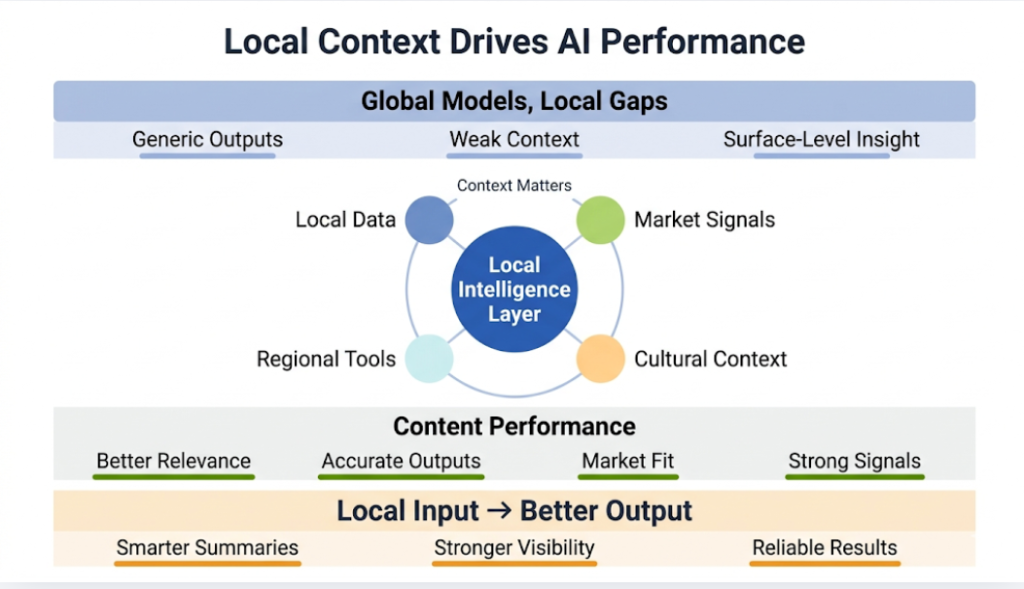

Professionals who work globally need to treat this as a business issue as much as an ethical issue. If a content system consistently defaults to Western norms, Anglophone phrasing, or culturally narrow visuals, it will produce weaker work and create avoidable alienation in international contexts.

Why mitigation is not a solved problem

Vendors often speak about bias mitigation as if it were largely under control. It is not. Progress has been real, but the challenge is structural. Models trained at scale on internet-shaped data inherit the imbalances of that ecosystem. Fine-tuning and policy layers can help. They do not erase the underlying issue.

This is one reason local review remains indispensable for global content operations. No central model will understand every market’s cultural sensitivities, historical context, or symbolic landscape well enough to be trusted blindly.

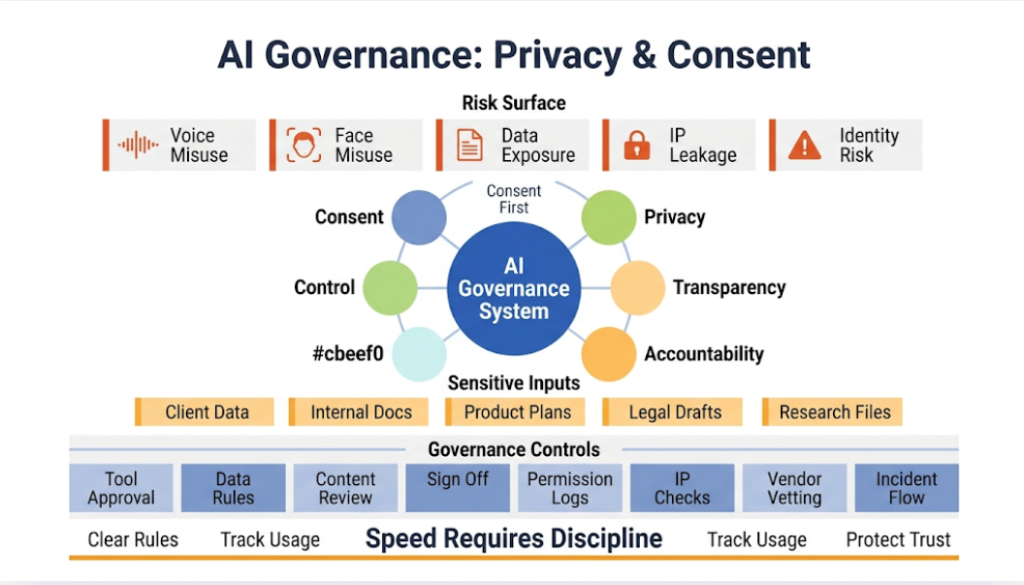

Privacy, consent, and likeness rights

Privacy issues in generative AI extend well beyond standard data governance, although standard governance still matters. Additional sensitivities arise because content creation increasingly involves:

- synthetic voices

- synthetic faces

- internal data

- customer materials

- uploaded source assets

Voice and face synthesis make consent non-negotiable

If an organization uses voice cloning, avatar generation, or synthetic likeness tools, it should operate from a very simple principle: no person’s voice, face, or identifiable style should enter a generation workflow without clear authorization and documented rights.

That principle should not be controversial, but the market still behaves as if convenience can outrun consent. It cannot. The reputational downside is too high, and the legal environment is moving toward greater scrutiny.

Internal data exposure in content workflows

Many teams begin using public or semi-public AI tools for convenience, then feed them:

- client documents

- product roadmaps

- customer data

- unpublished research

- legal drafts

- campaign plans

- internal comms

- proprietary code

- confidential recordings

That can create serious exposure if the organization does not understand how the platform stores, logs, trains on, or otherwise processes data. The issue is not abstract. The content workflow is often the first point where sensitive material gets casually pushed into AI interfaces without procurement review.

Professionals need to normalize disciplined tool use. Creative speed is not worth accidental data leakage.
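One lightweight habit is to screen text before it is pasted into an external tool. The sketch below is an assumption-laden illustration: the two patterns are hypothetical examples, and a simple pattern scan will miss most sensitive material, so it complements procurement review and proper data-protection tooling rather than replacing them.

```python
import re

# Illustrative patterns only (assumed); real deployments need patterns and
# tooling chosen together with security and legal teams.
SENSITIVE_PATTERNS = {
    "email_address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "confidential_marker": re.compile(
        r"\b(confidential|internal only|do not distribute)\b", re.IGNORECASE
    ),
}


def flag_sensitive(text: str) -> list[str]:
    """Return the names of the patterns found in text, in definition order."""
    return [name for name, pattern in SENSITIVE_PATTERNS.items() if pattern.search(text)]


print(flag_sensitive("Draft roadmap, CONFIDENTIAL. Contact jane.doe@example.com"))
print(flag_sensitive("Write three headline options for a spring sale"))
```

A flagged result is a prompt to stop and check the approved-tool and data rules, not proof that the text is safe when nothing is flagged.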

Regulation and governance trends

The regulatory environment around AI is evolving unevenly across jurisdictions, but the direction of travel is clear. Governments and regulators are increasingly focused on transparency, safety, accountability, and sector-specific risk controls.

I would not advise professionals to wait for perfect legal clarity before building governance. That would be a mistake. The better approach is to assume that stricter expectations are coming and build operating discipline now.

What good governance looks like in practice

At a minimum, mature content organizations should know:

- which AI tools are approved

- what data can and cannot be entered

- which content types require extra review

- who signs off on public AI-generated material

- how voice and likeness permissions are documented

- how IP-sensitive outputs are checked

- how localization review is handled

- how incidents are escalated

- how vendors are vetted

Good governance does not require a bureaucracy for every prompt. It requires clarity, traceability, and proportionality.

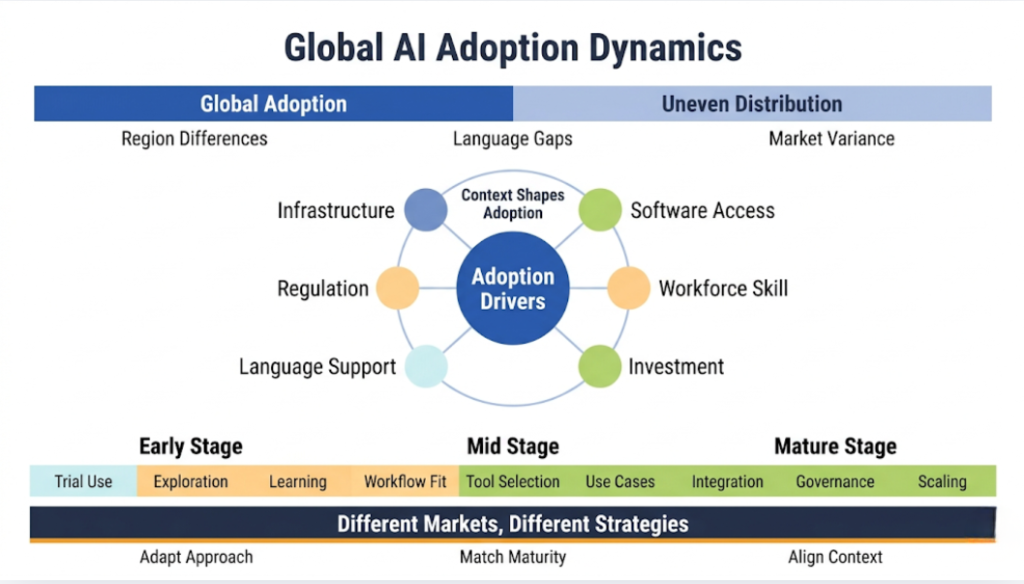

Global Impact and Adoption: Why Geography, Language, and Market Structure Matter

One of the laziest habits in AI commentary is to talk as if the market were culturally and geographically uniform. It is not. Generative AI is global, but it does not unfold evenly. Adoption patterns, use cases, constraints, and opportunities vary sharply by region, language environment, regulatory climate, infrastructure base, and industrial structure.

Professionals who work with international clients need to understand this. Otherwise, they will mistake one market’s trajectory for the whole world’s trajectory.

Adoption is global, but not evenly distributed

Generative AI has spread with remarkable speed. In a very short period, it moved from research breakthrough to mainstream business conversation and consumer experimentation. But high-level adoption numbers can hide important asymmetries.

More digitally advanced economies often show faster uptake because they already have:

- stronger cloud infrastructure

- higher software penetration

- larger digital services sectors

- better device access

- more AI-literate workforces

- more venture and enterprise investment

- fewer language support barriers when English dominates the workflow

That said, some smaller and highly digital states have outpaced larger economies in practical adoption because they combine infrastructure readiness with aggressive experimentation and national-level ambition.

Why enterprise maturity differs by region

In some markets, organizations are already moving from experimentation to governance and integration. In others, teams are still in ad hoc trial mode. That affects what clients need. In a mature market, the discussion may focus on workflow redesign, vendor selection, and legal standards. In a less mature market, the immediate need may be education, access, and fit-for-purpose use cases.

Professionals should avoid assuming that every client sits at the same point on the adoption curve.

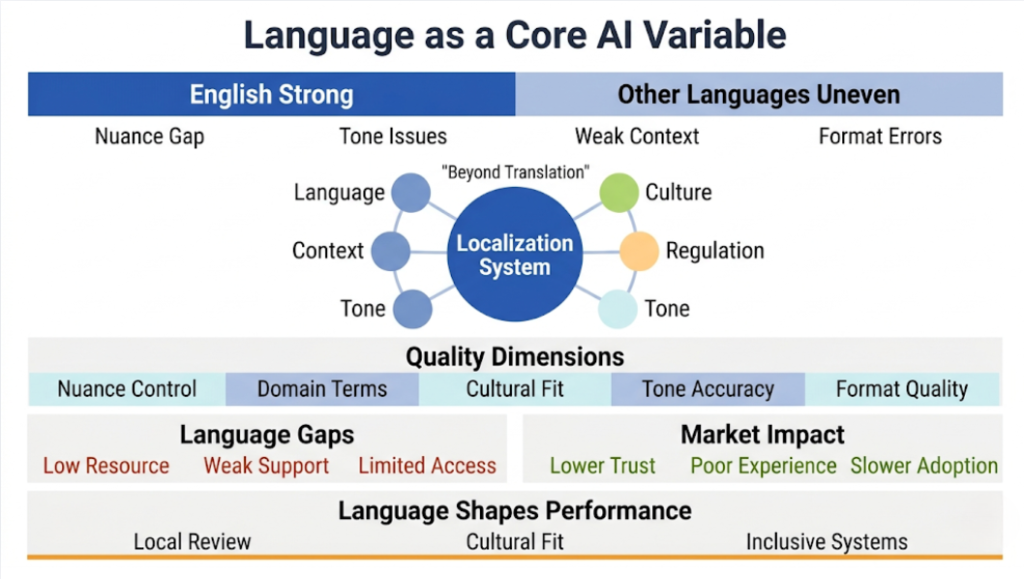

Language remains one of the most important structural variables

Language support is a central issue in generative AI for content creation. Much of the early strength in generative AI emerged in English-heavy environments because training data, interfaces, product development, and user demand all concentrated there first.

That creates several consequences.

Uneven quality across languages

A model may be excellent in English and mediocre in another language. Even when it supports many languages, quality may vary in:

- nuance

- idiomaticity

- domain vocabulary

- cultural resonance

- factual support

- script handling

- tone control

- formatting quality

Professionals running multilingual content programs should never assume that a model’s English performance will transfer cleanly into other languages. It often does not.

Localization is not just translation

This is another area where clients often oversimplify. Generative AI can help with translation, but professional localization requires much more than direct linguistic conversion. It requires market knowledge, cultural adaptation, claims sensitivity, regulatory awareness, tone judgment, and channel-specific conventions.

AI can accelerate localization dramatically, especially for first-pass adaptation. But local review remains essential if the content matters.

Low-resource languages risk being left behind

Models often perform worst in low-resource languages, dialects, and culturally underrepresented contexts. This creates a genuine digital inequality issue. If the most powerful content systems perform best in dominant languages, they will channel more productivity gains, more knowledge access, and more media power into already advantaged ecosystems.

That is not only a social concern. It is also a commercial one. Markets with weaker language support may receive lower-quality AI products and less suitable content experiences, which slows adoption and reduces trust.

Regional ecosystem differences

The structure of the AI market also differs by region.

North America and parts of Europe

These markets have driven a large share of enterprise experimentation, B2B integration, and vendor ecosystem development. They also tend to host stronger legal, policy, and procurement scrutiny. As a result, many organizations in these markets are moving from curiosity to systems thinking.

East Asia

East Asia matters enormously, but not always through the same product pathways as the U.S. market. Domestic platforms, platform regulation, language ecosystems, and local champions shape adoption differently. In some countries, mobile-first behavior and platform integration may drive uptake faster than standalone enterprise tooling.

Middle East and digitally ambitious smaller states

Some countries have moved unusually fast because leadership sees AI as strategic national infrastructure. These markets can become important testbeds for rapid adoption, public-sector experimentation, and cross-sector deployment.

Global South and emerging markets

The story here is more varied than many commentators admit. There is real enthusiasm and rapid experimentation in many regions, but infrastructure, affordability, language support, training access, and device constraints can still slow scalable use. At the same time, because some content systems are less burdened by legacy workflows, these markets may leapfrog in specific use cases once tools become more accessible and better localized.

The global opportunity and the global risk

The opportunity is obvious. Generative AI can reduce production costs, expand access to communication tools, support multilingual creation, and enable smaller organizations to produce more sophisticated content than their budgets once allowed.

The risk is just as real. The technology can also widen existing gaps:

- between high-resource and low-resource languages

- between well-governed and poorly governed media environments

- between organizations with strong data and those without it

- between markets served by quality local adaptation and those forced into generic imported content systems

Professionals should not assume that scale alone will solve these issues. The stakes rise as AI-generated search summaries, such as Google AI Overviews, increasingly shape how content is surfaced and summarized. Better local data, local review, regional tool development, and stronger market-specific practices will matter.

What the Future of Content Creation Actually Looks Like

A lot of writing about the future of generative AI falls into futurist cliché. I want to avoid that and offer a more grounded view.

The future of content creation is not one where humans disappear and models autonomously produce all meaningful communication. It is also not one where AI remains a novelty layer used only for brainstorming. The actual future sits between those extremes.

Content workflows will become increasingly multimodal

The most important structural shift is that content workflows will no longer be built around one modality at a time. A source idea may begin as a text brief, become a visual concept, turn into a narrated video, produce short-form derivatives, feed a synthetic voice workflow, and then return to text through transcripts, documentation, and search surfaces.

Professionals will need to think in content systems rather than channel silos. Teams that still separate writing, design, audio, video, and localization into rigid disconnected processes will move more slowly than teams that build integrated multimodal pipelines.

The premium will move toward judgment and orchestration

As generation gets cheaper, selection gets more important. As first drafts become abundant, differentiated thinking becomes more valuable. As adaptation becomes faster, strategic coherence becomes harder and more essential.

This means the professionals who win will not be the ones who merely know how to invoke a model. They will be the ones who know how to:

- define the right task

- structure the right source material

- choose the right tool

- guide the right workflow

- detect the wrong output

- shape the final asset

- align the result with business and audience needs

In other words, the future belongs less to raw prompting and more to editorial orchestration.

Governance will become a competitive advantage

For a while, governance was framed as something conservative organizations did to slow things down. I think that view will age badly. In practice, the organizations that establish clear AI operating rules early are often the ones that can scale faster later. They create trust. They reduce rework. They prevent avoidable incidents. They make procurement easier. They reassure clients. They enable broader deployment.

Governance done well does not kill creative speed. It makes creative speed sustainable.

Expertise will matter more, not less

This is perhaps the most important point in the entire guide. Generative AI changes the distribution of labor, but it does not erase the value of expertise. It increases the importance of having real knowledge embedded somewhere in the system.

If no expert shapes the brief, checks the claims, evaluates the framing, and decides what deserves publication, then AI simply multiplies noise. If expert knowledge does shape the process, AI can dramatically increase how far that knowledge travels and how efficiently it gets repurposed across formats and markets.

That is why the strongest future model is not AI instead of experts. It is AI in the hands of experts who know what they are doing.

Frequently Asked Questions (FAQ)

How should we budget for generative AI in a marketing organization?

Most teams approach this incorrectly by allocating budget only to tools. In practice, the cost structure spans three layers: tools, people, and process. Tools are often the cheapest component. The real investment sits in training, workflow redesign, quality control, and integration. I usually advise clients to start with a small, controlled budget tied to specific use cases, then expand based on measurable ROI rather than upfront commitment.

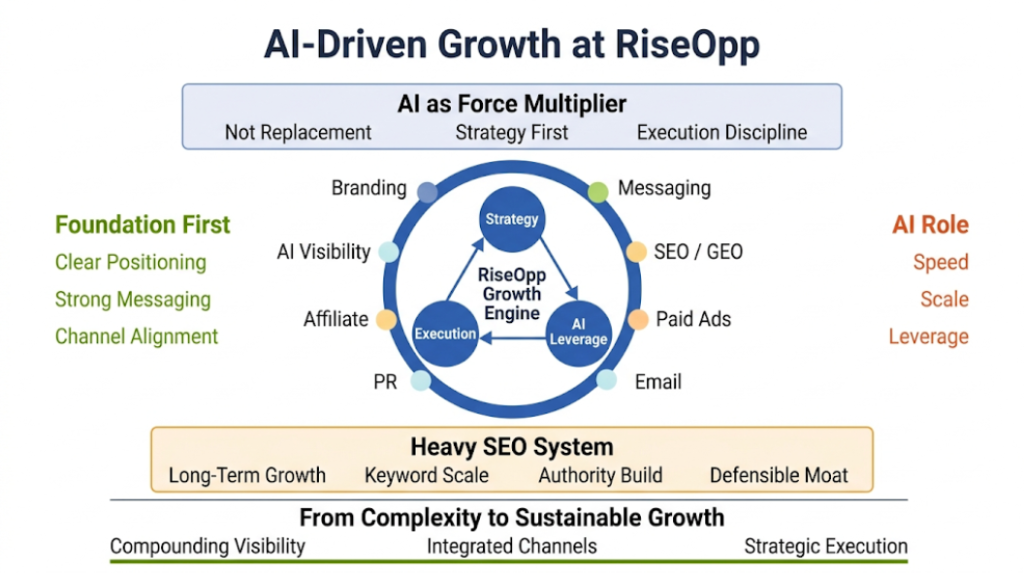

How do we decide whether to build internal AI capability or rely on external partners?

This depends on how central content and marketing are to your competitive advantage. If content is core to your growth engine, you should build internal capability supported by external expertise. If it is more of a supporting function, working with a partner can be more efficient. The most effective model in many cases is hybrid, where internal teams own strategy and domain knowledge, while external partners provide systems, execution scale, and specialized expertise.

How do we prevent AI-generated content from becoming generic or indistinguishable from competitors?

This is one of the most common failure points. The solution is not better prompts alone. It requires stronger inputs. That means original insights, proprietary data, clear positioning, and a defined point of view. AI can structure and scale content, but differentiation must come from the underlying thinking. If every team uses the same tools with the same generic inputs, the outputs will converge.

What skills should marketing teams prioritize developing in an AI-driven environment?

The priority is shifting away from pure production skills toward higher-order capabilities. Teams should focus on strategic thinking, editorial judgment, data interpretation, prompt structuring, workflow design, and cross-channel integration. The ability to evaluate and refine AI outputs is becoming more important than the ability to produce first drafts manually.

How do we handle version control and consistency when AI is generating large volumes of content?

This becomes a real issue at scale. Teams need structured content systems, not just tools. That includes centralized messaging frameworks, reusable templates, approval workflows, and version tracking. Without these, AI increases fragmentation rather than efficiency. Content operations discipline becomes critical as output volume grows.
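As a rough illustration of version tracking tied to a messaging framework, the sketch below records each asset version with a content hash and the framework version it was generated against. Everything here is hypothetical: the `AssetRegistry` class, the `framework_version` labels, and the in-memory storage are assumptions for the example, not a reference to any particular content platform.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class AssetVersion:
    asset_id: str
    version: int
    content_hash: str
    framework_version: str  # messaging framework the text was written against
    approved: bool = False  # would be set by an approval workflow (not shown)


class AssetRegistry:
    """In-memory version history per asset (a sketch, not production storage)."""

    def __init__(self):
        self._versions = {}

    def record(self, asset_id, text, framework_version):
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
        history = self._versions.setdefault(asset_id, [])
        last = history[-1] if history else None
        # Skip duplicates: same text under the same framework is not a new version.
        if last and last.content_hash == digest and last.framework_version == framework_version:
            return last
        entry = AssetVersion(asset_id, len(history) + 1, digest, framework_version)
        history.append(entry)
        return entry

    def stale(self, current_framework_version):
        """Asset ids whose latest version predates the current framework."""
        return [
            history[-1].asset_id
            for history in self._versions.values()
            if history[-1].framework_version != current_framework_version
        ]
```

The `stale` lookup is the useful part at scale: when the central messaging changes, it lists which generated assets still reflect the old framing.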

How do we integrate generative AI with existing marketing technology stacks?

Integration should be driven by workflow, not novelty. Start by identifying where AI can plug into existing systems such as CMS platforms, CRM systems, ad platforms, and analytics tools. Many modern tools offer APIs or native integrations. The goal is to reduce friction, not create parallel systems that teams ignore after initial experimentation.

How do we ensure AI-generated content aligns with brand voice across different teams?

Brand drift is a real risk. The solution is to formalize brand voice into usable inputs such as style guides, tone rules, example libraries, and structured prompts. Some organizations also create internal “voice models” or reference datasets. Consistency does not come from the AI itself. It comes from the constraints and references you provide to it.
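A minimal sketch of what "usable inputs" can mean in practice: the brand voice is stored as structured data, assembled into a prompt, and reused to check drafts. The `BrandVoice` fields and both helper functions are assumptions made for illustration. No specific model API is called, because the prompt text is all this example produces.

```python
from dataclasses import dataclass, field


@dataclass
class BrandVoice:
    """Hypothetical container for the brand inputs described above."""
    tone_rules: list[str]
    banned_phrases: list[str] = field(default_factory=list)
    examples: list[str] = field(default_factory=list)


def build_prompt(voice: BrandVoice, task: str) -> str:
    """Assemble a prompt that puts the brand constraints ahead of the task."""
    sections = ["Tone rules:"] + [f"- {rule}" for rule in voice.tone_rules]
    if voice.banned_phrases:
        sections += ["Never use:"] + [f"- {p}" for p in voice.banned_phrases]
    if voice.examples:
        sections += ["Reference examples:"] + [f"- {e}" for e in voice.examples]
    sections += ["Task:", task]
    return "\n".join(sections)


def find_banned(voice: BrandVoice, draft: str) -> list[str]:
    """Return banned phrases that appear in a draft (case-insensitive)."""
    lowered = draft.lower()
    return [p for p in voice.banned_phrases if p.lower() in lowered]
```

Keeping the voice definition in one shared structure means every team draws on the same constraints, and the same definition can check outputs after generation.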

What are the biggest hidden risks companies overlook when adopting generative AI?

The biggest risks are not always the obvious ones. Beyond legal and bias concerns, I often see:

- silent quality degradation over time

- over-reliance on AI for strategic thinking

- inconsistent use across teams

- lack of auditability

- unclear ownership of outputs

- accidental exposure of sensitive data

These issues do not appear immediately but can compound quickly.

How do we evaluate whether AI is actually improving our marketing performance?

You need to separate activity metrics from outcome metrics. Increased content volume or faster production does not automatically translate into better performance. Track downstream impact such as engagement quality, conversion rates, pipeline contribution, SEO growth, and customer retention. AI should improve outcomes, not just output.
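To illustrate the distinction, the sketch below compares one activity metric (assets published) with one outcome metric (conversion rate) across two periods. The field names and all numbers are invented for the example. A real evaluation would also need to account for seasonality, channel mix, and other confounders.

```python
def relative_change(before: float, after: float) -> float:
    """Fractional change from `before` to `after` (0.25 means +25%)."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before


def compare_periods(before: dict, after: dict) -> dict:
    """Report the change in output volume next to the change in conversion rate."""
    rate_before = before["conversions"] / before["sessions"]
    rate_after = after["conversions"] / after["sessions"]
    return {
        "assets_published_change": relative_change(
            before["assets_published"], after["assets_published"]
        ),
        "conversion_rate_change": relative_change(rate_before, rate_after),
    }


# Hypothetical figures: output triples while the conversion rate slips.
pre_ai = {"assets_published": 20, "sessions": 10_000, "conversions": 200}
post_ai = {"assets_published": 60, "sessions": 12_000, "conversions": 216}
report = compare_periods(pre_ai, post_ai)
```

In this invented case, publishing volume rises sharply while the conversion rate falls. That is exactly the pattern a team tracking only activity metrics would miss.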

Will generative AI reduce the need for marketing teams in the long term?

It will change team composition more than it will eliminate teams. Routine production roles may shrink or evolve, but roles focused on strategy, analysis, creative direction, and system design will become more important. The net effect is not necessarily fewer people, but different skill distributions and higher expectations for cross-functional capability.

How should agencies and consultants position themselves in an AI-driven market?

Agencies that rely purely on execution volume will face pressure. The ones that survive and grow will position themselves around strategy, systems, and outcomes. Clients will expect guidance on how to use AI effectively, not just access to tools. The value shifts from doing the work to designing how the work gets done.

What does success with generative AI look like over the next 2–3 years?

In the near term, success will look like operational maturity. That means:

- AI integrated into core workflows

- measurable efficiency gains

- consistent content quality

- clear governance

- strong alignment between AI usage and business outcomes

Longer term, the advantage will come from how well organizations combine AI capabilities with proprietary data, strong positioning, and disciplined execution.

Final Thoughts

Generative AI for content creation has already moved beyond experimentation. It now affects how professional teams write, design, narrate, localize, train, publish, and communicate. The question is no longer whether it matters. The question is how seriously organizations are willing to engage with what it changes.

I have tried in this guide to avoid both hype and cynicism. Hype leads teams to expect too much from raw model capability. Cynicism leads them to ignore real operational leverage. The truth is more demanding than either position.