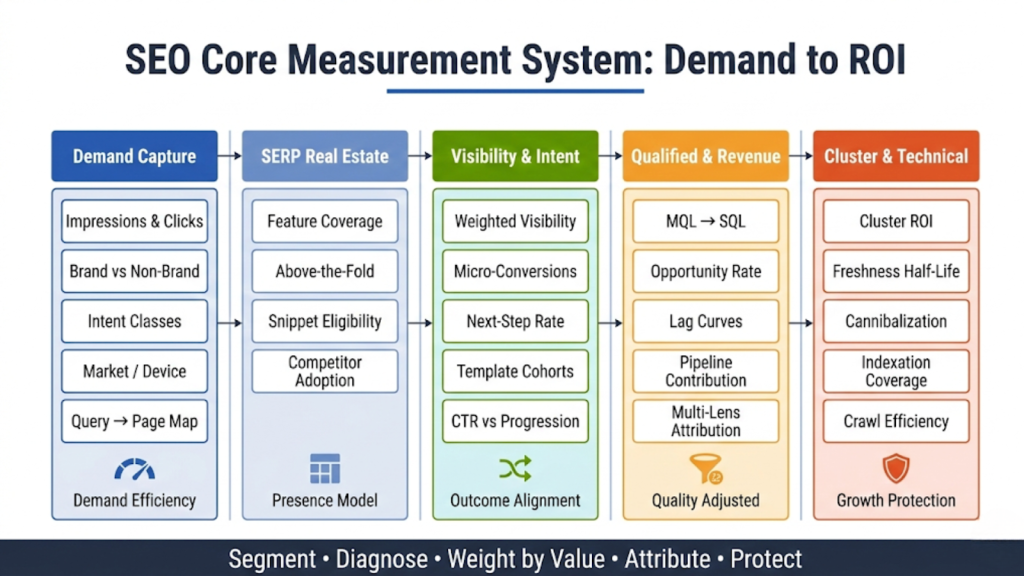

- SEO analytics connects query-level demand, on-site behavior, and CRM outcomes to measure qualified leads, pipeline, and revenue impact.

- SEO analytics requires intent-based segmentation, defined system roles across Search Console and GA4, and warehouse-based data integration.

- SEO analytics models ROI using quality-adjusted cohorts, sales-cycle lag analysis, conservative attribution, and incrementality testing.

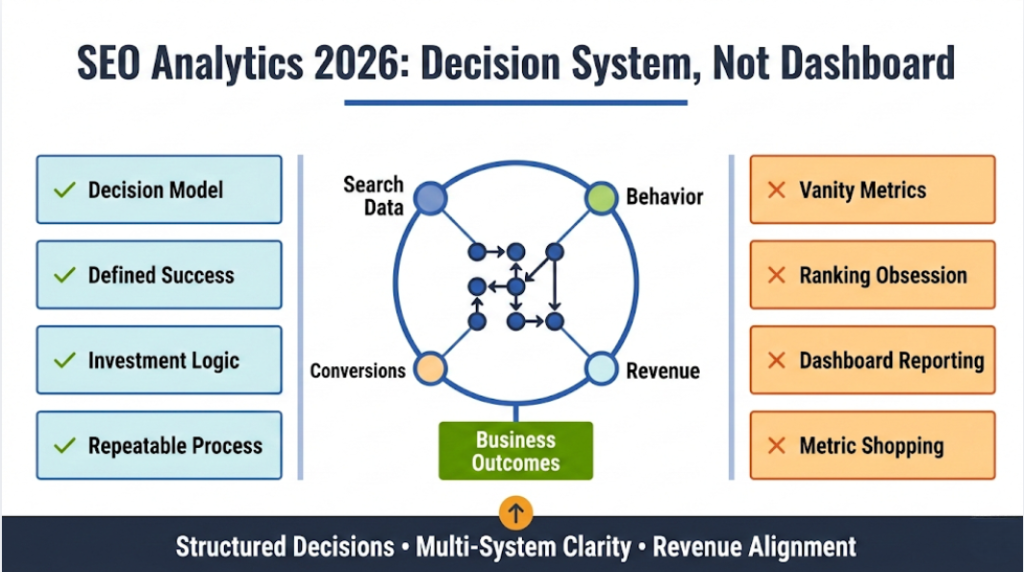

SEO analytics is no longer about tracking rankings and traffic in isolation. Modern organic growth requires a structured measurement system that connects search visibility to qualified demand, pipeline, and revenue.

In 2026, search is fragmented across SERP features, AI-generated answers, zero-click behavior, and multi-intent queries. At the same time, privacy and attribution constraints reduce observability. This makes traditional SEO reporting insufficient. What organizations need instead is a decision-driven SEO analytics framework.

This guide provides a complete operational model for SEO analytics, covering:

- what SEO analytics is and how it differs from basic reporting

- how to measure SEO in Google Analytics without misleading conclusions

- how to build an SEO analytics report that drives action

- how to model and defend SEO ROI

- how to operationalize SEO and analytics reporting across teams

If your goal is to connect organic performance to measurable business outcomes, this article outlines a system you can implement.

SEO Analytics in 2026: What It Is and What It Is Not

What is SEO analytics?

When professionals ask what SEO analytics is, the answer must go beyond dashboards. SEO analytics is a decision system that links organic demand capture to measurable business outcomes. It integrates search performance data, behavioral analytics, conversion quality, and revenue attribution into a structured model designed to guide investment, not simply describe activity.

A strong SEO analytics practice also defines what counts as success before collecting numbers. That definition often includes qualified leads, pipeline velocity, revenue contribution, and durable demand capture in non-brand search. It includes tradeoffs, such as when a content cluster should maximize top-of-funnel reach versus when it should prioritize high-intent conversions. It emphasizes repeatable decision-making instead of ad hoc analysis that changes every month.

What SEO analytics is not

Many teams still treat analytics as a dashboard that updates weekly. That framing encourages metric shopping, where teams rotate KPIs until the report looks better. It also encourages over-reliance on rankings, even though modern SERPs can decouple rank from clicks because of features like snippets, local packs, shopping modules, and video placements. A mature program does not ignore ranking data, but it treats rankings as a directional input rather than a source of truth.

SEO work in Google Analytics often fails when teams try to answer search questions using only session data. GA4 can reveal a lot about on-site behavior and conversions, but it cannot see query-level impressions or SERP feature context, and its attribution relies more heavily on modeled conversions when consent constraints apply. A credible approach uses multiple systems, clearly assigns which system answers which question, and documents the limitations. That discipline prevents false confidence and makes the outputs useful for decisions.

SEO Analytics Framework: From Business Questions to Metrics

The measurement brief template

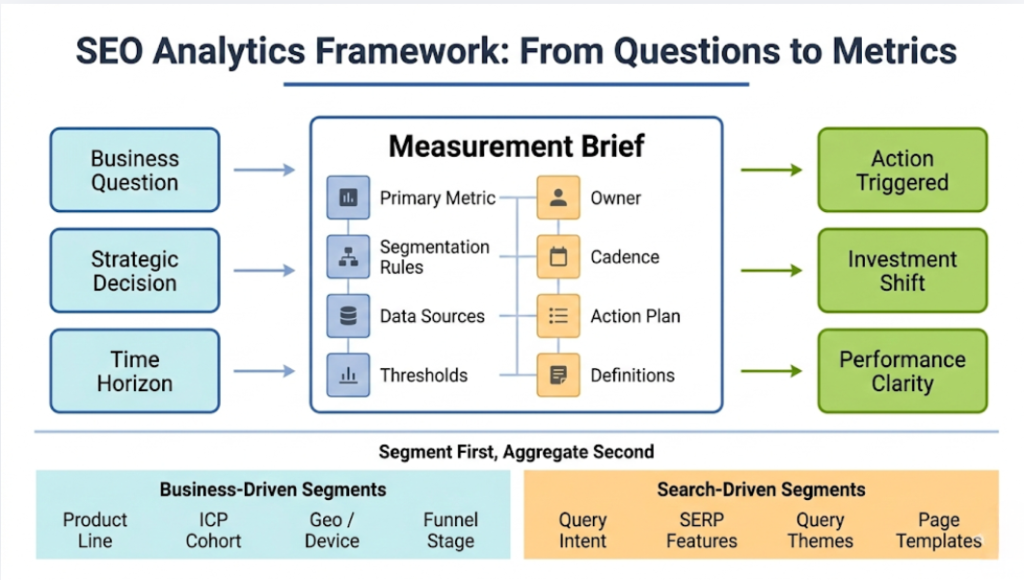

Teams that outperform rarely start by picking KPIs. They start with a measurement brief that forces clarity on what decision the metric will drive. The brief translates strategy into an operational contract: what gets measured, how it gets segmented, who owns it, and what action triggers when the metric moves. This is where SEO analytics becomes a decision system rather than a reporting routine.

A practical measurement brief includes at least seven elements. It defines the business question, the decision that depends on the answer, the metric that best represents the question, and the segmentation rules that prevent the metric from hiding variance. It also assigns an owner, a cadence, and thresholds for action, such as when to escalate an issue or double down on an initiative. Without this brief, teams drift into “look at the numbers” meetings that produce no consistent actions.

Useful measurement brief fields include:

- Business question and time horizon (short diagnostic vs quarterly investment)

- Decision or tradeoff that the metric informs

- Primary metric and secondary context metrics

- Segmentation rules (brand vs non-brand, query intent class, device, market, page type)

- Data sources and known limitations

- Owner and next action playbook

- Thresholds for change, plus expected lag time
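One way to keep these fields consistent across teams is to store each brief as a structured record. The sketch below is a minimal illustration only: the class name `MeasurementBrief`, its field names, and the example values are assumptions made for this example, not a standard schema.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class MeasurementBrief:
    """One measurement brief. Field names are illustrative assumptions."""
    business_question: str
    time_horizon: str             # e.g. "short diagnostic" or "quarterly investment"
    decision: str                 # decision or tradeoff the metric informs
    primary_metric: str
    segmentation: List[str]       # e.g. brand vs non-brand, intent class, device
    data_sources: List[str]
    owner: str
    action_threshold_pct: float   # relative change that triggers the playbook
    expected_lag_days: int
    secondary_metrics: List[str] = field(default_factory=list)
    known_limitations: List[str] = field(default_factory=list)

    def needs_action(self, baseline: float, current: float) -> bool:
        """True when the relative change from baseline meets the threshold."""
        if baseline == 0:
            return current != 0
        change_pct = abs(current - baseline) / abs(baseline) * 100
        return change_pct >= self.action_threshold_pct


# Hypothetical example values.
brief = MeasurementBrief(
    business_question="Is non-brand demand capture growing for commercial queries?",
    time_horizon="quarterly investment",
    decision="Fund net-new cluster content or refresh existing pages",
    primary_metric="non_brand_clicks_commercial",
    segmentation=["brand_vs_non_brand", "intent_class", "device", "market"],
    data_sources=["Search Console"],
    owner="seo_lead",
    action_threshold_pct=10.0,
    expected_lag_days=28,
    known_limitations=["Search Console anonymizes some rare queries"],
)

print(brief.needs_action(baseline=1000, current=880))  # 12% drop against a 10% threshold
```

Keeping the threshold and expected lag on the record itself means a reviewer can see, next to the metric, when a movement should trigger the playbook and how long to wait before judging it.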

KPI governance and definitions

Governance sounds boring until a team has two dashboards with two definitions of “organic conversions.” Governance solves that problem by creating a shared definitions registry, channel grouping rules, and annotation discipline. It also standardizes the segmentation vocabulary, such as how “brand” gets defined, how query classes get labeled, and how templates map to business lines. When governance is absent, stakeholders lose trust and teams waste cycles debating definitions instead of doing work.

A definition registry should include the calculation, the scope, and the exception cases. For example, “organic pipeline” might mean opportunities created from a cohort whose first-touch source was organic search, measured over a 90-day window, excluding self-serve upgrades that bypass CRM. That definition needs to sit next to caveats about modeled conversions, cross-device identity, and offline conversion delays. Clear definitions also make SEO and analytics reporting far more effective because reports stop changing meaning between months.

Segment-first measurement, not sitewide averages

Sitewide averages flatten the signal and turn optimization into guesswork. The best teams segment first and aggregate second, because search behavior varies massively by intent, market, and page type. Brand and non-brand queries behave differently, and query classes behave differently even within non-brand. Mobile and desktop often show different SERP feature mixes, different CTR curves, and different conversion patterns. If a team does not segment, it risks “optimizing the average” while missing the segment that actually drives revenue.

A segmentation strategy should include both business-driven and search-driven dimensions. Business-driven dimensions include product line, service line, ICP cohort, geo, device, and funnel stage. Search-driven dimensions include query intent class, SERP feature presence, query themes, and page templates. When teams build this segmentation into the measurement plan, they can explain variance instead of simply observing it.

Data architecture and instrumentation for SEO analytics

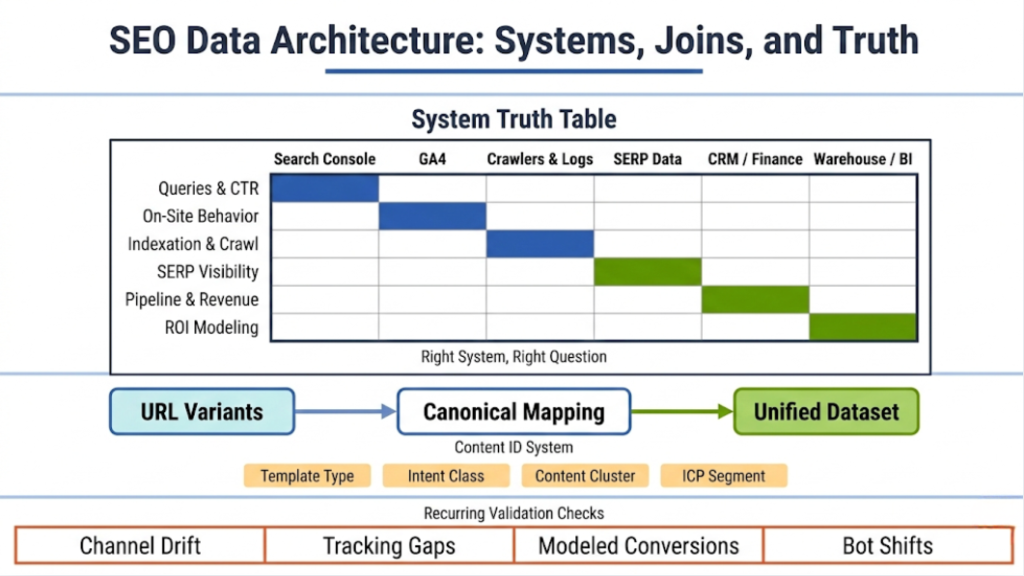

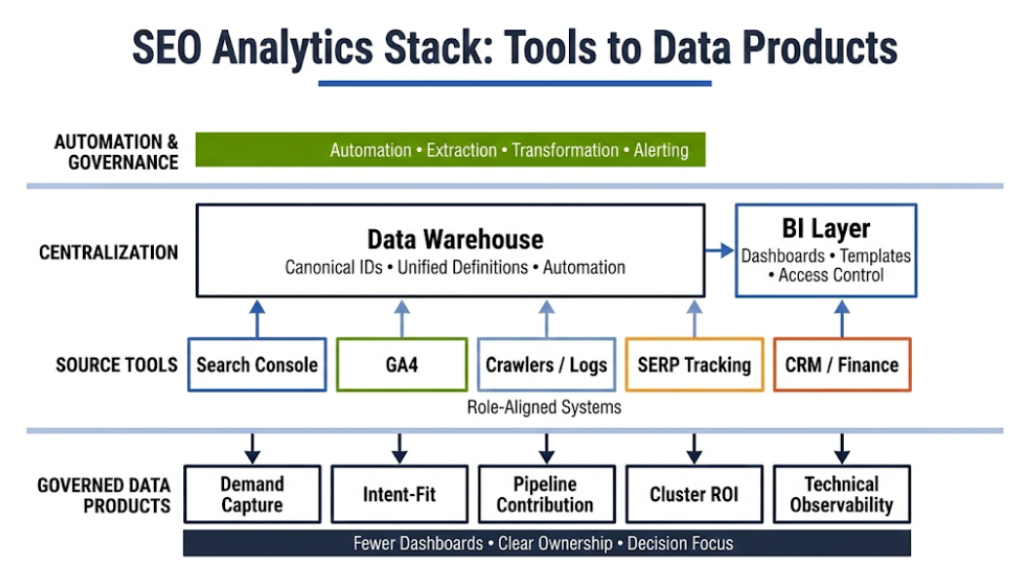

System roles and the “truth table”

A reliable stack assigns roles to systems instead of forcing one system to answer every question, mirroring how high-performing orgs define clear ownership across the SEO function. Search Console best answers query and page performance for impressions, clicks, CTR, and average position. GA4 best answers on-site behavior, cohort outcomes, event completion, and conversion performance. Crawlers and logs best answer indexation, crawl behavior, response codes, and internal linking structure. CRM and finance systems best answer pipeline, revenue, lead quality, and sales cycle dynamics.

This role assignment matters because it prevents category errors. For example, query-level CTR belongs to Search Console, not GA4. Revenue belongs to CRM or a warehouse, not a web analytics platform. Crawl efficiency belongs to logs, not a rank tracker. A truth table that documents these roles becomes the backbone of SEO analytics operations and reduces internal debate.

A practical truth table often looks like this:

- Queries, impressions, clicks, CTR: Search Console

- Landing page behavior and conversions: GA4

- Indexation, crawling, technical health: crawl tools and logs

- Competitive visibility and SERP features: rank and SERP data providers

- Pipeline and revenue outcomes: CRM and finance

- Unified modeling, segmentation, and ROI: data warehouse and BI

Joining strategy and canonicalization rules

Multi-source analytics fails when teams cannot join data consistently. URLs change, parameters proliferate, canonicals vary, and tracking tags drift. A stable joining strategy starts with URL normalization, canonical mapping, and a content catalog that assigns stable IDs to pages, templates, and clusters. It also includes rules for handling faceted navigation, localization, pagination, and alternate formats.

Teams should decide whether the canonical URL or the rendered URL becomes the primary join key. Many programs treat canonical URL as the “content identity” and treat rendered URL variations as derivatives. That approach requires a pipeline that maps Search Console page URLs to canonical equivalents, then maps GA4 landing pages to the same canonical set. It also requires documenting exceptions, such as when localized pages should not collapse to a single canonical.
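The normalization and canonical-mapping step described above can be sketched with Python's standard `urllib.parse` module. This is a minimal illustration under stated assumptions: the `TRACKING_PARAMS` set, the choice to strip trailing slashes, and the example `canonical_map` are hypothetical and need to be adapted to each site's URL conventions.

```python
from typing import Dict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical list of tracking parameters to drop; adjust per site.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "utm_term",
                   "utm_content", "gclid", "fbclid"}


def normalize_url(url: str) -> str:
    """Lowercase scheme and host, drop the fragment and tracking parameters,
    sort the remaining parameters, and strip a trailing slash (except root)."""
    parts = urlsplit(url.strip())
    scheme = parts.scheme.lower() or "https"
    host = parts.netloc.lower()
    path = parts.path or "/"
    if len(path) > 1 and path.endswith("/"):
        path = path.rstrip("/") or "/"
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in TRACKING_PARAMS
    )
    return urlunsplit((scheme, host, path, urlencode(kept), ""))


def to_canonical(url: str, canonical_map: Dict[str, str]) -> str:
    """Map a normalized URL to its canonical equivalent, if one is documented."""
    normalized = normalize_url(url)
    return canonical_map.get(normalized, normalized)


# Hypothetical mapping: a plan-specific variant collapses to the pricing page.
canonical_map = {"https://example.com/pricing?plan=pro": "https://example.com/pricing"}

print(to_canonical("https://Example.com/pricing/?plan=pro&gclid=abc", canonical_map))
# -> https://example.com/pricing
```

Applying the same function to Search Console page URLs and GA4 landing pages gives both sources one join key. Documented exceptions, such as localized pages that should stay separate, are handled simply by leaving them out of the mapping.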

Instrumentation for expert-grade analysis

Instrumentation should support diagnosis and decision-making, not just reporting. In GA4, that means key events that represent meaningful progress, not an explosion of low-signal events. It also means content grouping that aligns with templates, clusters, or service lines, so teams can analyze performance at the right level. It means internal traffic filtering, referral exclusion discipline, cross-domain tracking where needed, and consistent conversion windows.

For SEO-specific analysis, instrumentation should also capture or derive attributes that matter for segmentation. Examples include page template type, content cluster, primary intent, and whether a page targets a specific ICP segment. This metadata can live in a content catalog in a warehouse and can join into analytics. Without metadata, analysts resort to regex rules that break every time the site changes.

Data quality failure modes to prevent

Data quality problems do not show up as errors. They show up as misleading conclusions that teams treat as truth. GA4 channel grouping drift can move traffic between channels without any change in acquisition. Cross-domain tracking gaps can cut sessions and inflate direct traffic. Consent constraints can increase modeled conversions and reduce deterministic attribution, which changes month-to-month comparisons.

Teams should implement a recurring set of checks that validate core assumptions. These checks should include monitoring for sudden changes in direct traffic, unusual spikes in referral traffic, landing page “(not set)” growth, and breakage in content grouping coverage. Logs should also be checked for shifts in bot crawl patterns after major releases. A small investment in these checks pays off because it protects the credibility of SEO analytics outputs.
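As one example of such a check, the sketch below flags a sudden shift in the share of sessions attributed to direct traffic by comparing the latest value with recent history. The z-score approach, the threshold of 3.0, and the sample numbers are assumptions chosen for illustration; the same pattern could be applied to referral share or “(not set)” landing page share.

```python
from statistics import mean, pstdev
from typing import Sequence


def flag_share_shift(history: Sequence[float], latest: float,
                     z_threshold: float = 3.0) -> bool:
    """Return True when `latest` deviates from the historical mean by more
    than `z_threshold` population standard deviations."""
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        # Perfectly flat history: any change at all is worth a look.
        return latest != mu
    return abs(latest - mu) / sigma > z_threshold


# Hypothetical weekly share of sessions classified as direct traffic.
direct_share_history = [0.20, 0.21, 0.19, 0.20, 0.22, 0.20, 0.21]

print(flag_share_shift(direct_share_history, latest=0.31))  # large jump
print(flag_share_shift(direct_share_history, latest=0.21))  # within normal range
```

A flagged value does not prove that tracking broke. It is a prompt to check the change log for tagging, consent, or cross-domain changes before interpreting the trend.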

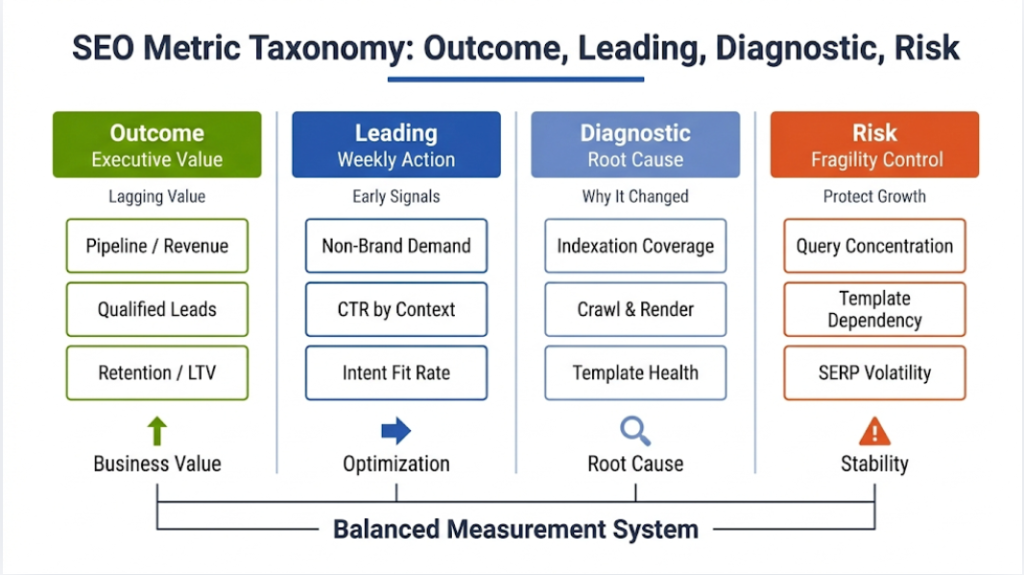

Metric taxonomy: Outcome, leading, diagnostic, and risk

Outcome metrics that leadership actually needs

Outcome metrics tie organic search to business value. For B2B, that typically means qualified leads, pipeline created, pipeline influenced, and revenue, with clear definitions and time windows. For B2C or self-serve, it can mean revenue, subscriptions, upgrades, and retention, again with definitions that match the business model. Outcome metrics should also reflect quality, not just volume, because low-quality leads create sales load and distort ROI.

Outcome metrics do not need to be perfect to be useful, but they need to be consistent and transparent about limitations. Teams should document whether they rely on first-touch, last-touch, or blended attribution, and how they handle offline conversions and identity. They should also account for sales-cycle lag, because SEO investments often show returns over months, not days. This is where a credible SEO ROI narrative starts to form.

Leading indicators that guide weekly action

Leading indicators move earlier than revenue and help teams diagnose and optimize in near real time. These include demand capture metrics such as non-brand impressions and clicks by query class, CTR by SERP feature context, and conversion-weighted visibility for high-intent query sets. They also include landing page intent-fit metrics, such as next-step rate and micro-conversion completion for organic cohorts. These indicators help teams decide whether to adjust content, snippets, internal linking, or UX.

A good leading indicator should meet two tests. It should correlate with downstream outcomes, and it should respond to actions the team can take. “Average position sitewide” rarely passes the second test because too many confounders affect it. In contrast, “CTR for high-impression queries where the site appears with no snippet enhancement” often passes because teams can change titles, structured data, and page intent alignment. Leading indicators should also be segmented, because improvements often occur in specific query classes or templates.

Diagnostic metrics for root cause analysis

Diagnostic metrics explain why something changed. These include indexation coverage by template, crawl frequency, response code distributions, internal link distribution proxies, and renderability checks for JS-heavy templates. They also include structured data validity, page speed and stability measures, and content decay indicators. These metrics rarely serve as executive KPIs, but they drive the actions that restore or accelerate growth.

The best diagnostic systems behave like observability, not like a monthly audit. They produce alerts and annotated timelines that link changes to releases. They also link to remediation playbooks, so a team can respond quickly instead of running a new investigation each time. When teams treat diagnostics as ongoing observability, they reduce recovery time after regressions and protect the business outcomes.

Risk metrics that prevent fragile growth

Risk metrics warn teams about concentration and volatility, exactly the type of evaluation that becomes easier when you run a structured risk-and-opportunity SWOT. Query concentration risk measures how much performance depends on a small set of queries or pages. Template dependency risk measures how much performance depends on a single page type that could regress. Volatility exposure measures how sensitive performance is to SERP feature shifts, algorithm changes, or competitive moves in a category. These metrics often matter more in mature programs because the downside risk grows with scale.

Risk metrics can also guide investment. If a program relies heavily on a single cluster, it should diversify content and build authority across adjacent clusters. If a site depends on a single template, it should invest in template testing, monitoring, and rollout discipline. If a market shows high SERP feature density, it should prioritize SERP real estate strategies instead of chasing rank positions alone.
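Query concentration risk can be quantified in more than one way. The sketch below shows two options under stated assumptions: the share of clicks held by the top N queries, and a Herfindahl-style index (the sum of squared click shares). The index is a choice made for this example, not a metric prescribed above, and the query names and click counts are hypothetical.

```python
from typing import Dict


def top_n_share(clicks_by_query: Dict[str, int], n: int = 10) -> float:
    """Share of total clicks that comes from the n highest-click queries."""
    total = sum(clicks_by_query.values())
    if total == 0:
        return 0.0
    top = sorted(clicks_by_query.values(), reverse=True)[:n]
    return sum(top) / total


def herfindahl_index(clicks_by_query: Dict[str, int]) -> float:
    """Sum of squared click shares: 1.0 means a single query holds all clicks,
    values near 0 mean clicks are spread across many queries."""
    total = sum(clicks_by_query.values())
    if total == 0:
        return 0.0
    return sum((clicks / total) ** 2 for clicks in clicks_by_query.values())


# Hypothetical non-brand click counts for one cluster.
clicks = {"crm software": 500, "best crm": 300, "crm pricing": 100, "what is a crm": 100}

print(round(top_n_share(clicks, n=2), 2))    # 0.8
print(round(herfindahl_index(clicks), 2))    # 0.36
```

The same two functions work for page-level or template-level concentration by swapping the dictionary keys, which covers the template dependency case as well.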

Metrics that matter: the core measurement set

Demand capture in organic search (Search Console first)

Demand capture begins with impressions and clicks, but the real value comes from how teams segment and interpret them. Search Console provides query and page data that support an intent-based model of demand. Teams should separate brand from non-brand, then split non-brand into intent classes such as informational, commercial investigation, and transactional. They should also segment by market and device because the same query can behave very differently on mobile versus desktop. According to research published by Google and Millward Brown Digital, 71% of B2B researchers begin their buying journey with a non-brand search, underscoring the importance of non-brand demand segmentation for SEO analytics maturity.

A mature analysis also accounts for SERP context. CTR changes often reflect changes in SERP feature density, competitor snippet updates, or shifts in query intent, not just title tag changes. Teams should track which queries show rising impressions but falling clicks, because that pattern often signals an increase in zero-click behavior, a new SERP feature, or a mismatch between snippet promise and page intent. This is where SEO analytics moves from “counting clicks” to diagnosing demand-capture efficiency. As reported by Search Engine Land, Google AI Overviews have driven a roughly 61% drop in organic CTR and a 68% decline in paid CTR on queries where the feature appears, highlighting how AI results suppress traditional clicks.
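The “rising impressions, falling clicks” pattern is straightforward to detect once query data is available for two comparable periods. The following sketch assumes each period is a dictionary of `query -> (impressions, clicks)`; the function name, the minimum-impression filter, and the sample data are hypothetical.

```python
from typing import Dict, List, Tuple

PeriodData = Dict[str, Tuple[int, int]]  # query -> (impressions, clicks)


def rising_impressions_falling_clicks(prev: PeriodData, curr: PeriodData,
                                      min_impressions: int = 1000) -> List[str]:
    """Queries present in both periods whose impressions rose while clicks fell.
    Low-volume queries are skipped to avoid reacting to noise."""
    flagged = []
    for query, (imp_now, clicks_now) in curr.items():
        if query not in prev:
            continue
        imp_prev, clicks_prev = prev[query]
        if imp_now >= min_impressions and imp_now > imp_prev and clicks_now < clicks_prev:
            flagged.append(query)
    return sorted(flagged)


# Hypothetical data for two comparable four-week periods.
previous = {"crm software": (8000, 400), "crm pricing": (2000, 160), "crm api": (300, 20)}
current = {"crm software": (9500, 310), "crm pricing": (2100, 170), "crm api": (450, 12)}

print(rising_impressions_falling_clicks(previous, current))  # ['crm software']
```

Flagged queries are candidates for a manual SERP review, since the data alone cannot say whether the cause is a new feature, a competitor snippet, or an intent shift.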

Teams should also carefully map queries to pages. Query-to-page mapping changes, site architecture, internal linking, and content updates can create cannibalization that hides growth potential. A useful practice is to monitor the number of landing pages that receive clicks for a given query set and flag sudden fragmentation. This helps identify when a new page competes with an existing high-performing page without improving total clicks.
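A fragmentation monitor of this kind can be sketched as follows. The input is assumed to be rows of `(query, page, clicks)` exported per period; the function names, the `max_increase` threshold, and the sample rows are illustrative assumptions.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

Row = Tuple[str, str, int]  # (query, page, clicks)


def pages_per_query(rows: Iterable[Row], min_clicks: int = 1) -> Dict[str, int]:
    """Count distinct landing pages that received at least min_clicks per query."""
    pages = defaultdict(set)
    for query, page, clicks in rows:
        if clicks >= min_clicks:
            pages[query].add(page)
    return {query: len(urls) for query, urls in pages.items()}


def flag_fragmentation(before: Dict[str, int], after: Dict[str, int],
                       max_increase: int = 1) -> List[str]:
    """Queries seen in both periods whose page count grew by more than max_increase."""
    return sorted(
        query for query, count in after.items()
        if query in before and count - before[query] > max_increase
    )


# Hypothetical rows for two periods.
before_rows = [("crm software", "/crm", 120), ("crm pricing", "/pricing", 80)]
after_rows = [
    ("crm software", "/crm", 70),
    ("crm software", "/blog/crm-guide", 30),
    ("crm software", "/crm-tools", 25),
    ("crm pricing", "/pricing", 85),
]

flagged = flag_fragmentation(pages_per_query(before_rows), pages_per_query(after_rows))
print(flagged)  # ['crm software']
```

In this sample the flagged query moved from one landing page to three while total clicks barely changed (120 versus 125), which is the pattern that suggests competition between pages rather than growth.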

SERP real estate ownership: presence, not just position

Modern SERPs reward presence across modules. A page can rank third but still win more clicks if it earns a snippet, rich result enhancements, or video visibility. Conversely, a page can rank first and still lose clicks if a local pack, shopping carousel, or AI summary absorbs attention above the fold. SERP real estate analytics measures this reality by tracking feature coverage and “above-the-fold presence” rather than relying on rank position alone.

A strong SERP real estate model defines a set of target features by category, then measures coverage by query class. It should track snippet eligibility factors such as structured data validity, content formatting, and media availability. It should also monitor competitor feature adoption, because feature capture becomes a moving target once rivals adopt rich results or change snippet strategy. This goes further than approaches that only retire misleading metrics without offering a full operational model for feature-based visibility.

A practical approach uses a query set that represents meaningful demand and aligns with commercial intent. Teams can then measure feature ownership rates and estimate the expected CTR lift from feature capture using historical data. The key is to keep the model honest and not pretend precision. If the model uses estimated CTR curves, it should be clear that the outputs represent directional opportunity, not guaranteed results.

Conversion-weighted visibility and why it beats generic visibility

Visibility metrics often fail because they treat all queries as equal. Professionals know that impressions for low-intent informational queries do not have the same value as visibility for high-intent commercial queries. Conversion-weighted visibility addresses that by weighting query groups by their observed or modeled conversion propensity. It ties upstream search presence to downstream outcomes in a way that supports investment decisions.

Teams can build conversion weights using multiple approaches. One approach uses historical landing page conversion rates by query class and maps query groups to those landing pages. Another approach uses a warehouse model that links query themes to assisted conversion paths, then assigns a conservative weight that reflects uncertainty. The most robust approach uses a blend that includes lead quality, because high conversion rates on low-quality leads can still degrade business outcomes. Conversion-weighted visibility fits naturally into SEO analytics governance because it forces teams to define intent classes and conversion definitions clearly.

Teams should also set guardrails to avoid false precision. Weighting models can overfit and produce unstable results if the input dataset is small or noisy. A good practice includes confidence bands, smoothing windows, and minimum sample thresholds for applying weights. When weights do not meet quality thresholds, teams should revert to simpler segmentation views rather than outputting misleading scores.
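The simplest of the weighting approaches, combined with a minimum sample threshold, can be sketched as below. It is an illustration under assumptions: weights are plain historical conversion rates per intent class, the 200-session minimum is arbitrary, and all numbers are made up. Confidence bands and smoothing are left out to keep the example short.

```python
from typing import Dict, List, Tuple


def conversion_weights(stats: Dict[str, Tuple[int, int]],
                       min_sessions: int = 200) -> Dict[str, float]:
    """stats maps intent class -> (sessions, conversions).
    Classes below the minimum sample size get no weight at all."""
    return {
        intent: conversions / sessions
        for intent, (sessions, conversions) in stats.items()
        if sessions >= min_sessions
    }


def weighted_visibility(impressions: Dict[str, int],
                        weights: Dict[str, float]) -> Tuple[float, List[str]]:
    """Return (score, skipped): impressions weighted by conversion propensity,
    plus the classes excluded because no trustworthy weight exists."""
    score = sum(impressions[c] * weights[c] for c in impressions if c in weights)
    skipped = sorted(c for c in impressions if c not in weights)
    return score, skipped


# Hypothetical historical stats and current impressions by intent class.
stats = {"informational": (5000, 25), "commercial": (1200, 36), "transactional": (150, 12)}
impressions = {"informational": 100_000, "commercial": 20_000, "transactional": 3_000}

weights = conversion_weights(stats)
score, skipped = weighted_visibility(impressions, weights)
print(round(score), skipped)  # 1100 ['transactional']
```

Returning the skipped classes alongside the score makes the guardrail visible in the output. Here the transactional class has too few sessions to trust its 8% rate, so it is reported separately instead of inflating the score.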

Landing page intent-fit: behavioral indicators that predict outcomes

Landing page performance often reveals whether SEO content matches user intent. GA4 provides useful indicators like engaged sessions, engagement time, and key event completion, but expert teams go further. They define micro-conversions that reflect meaningful progress, such as pricing page views, demo request starts, product comparison interactions, or qualified content downloads. They also measure next-step rate, which captures whether users move deeper into the journey or exit after consuming the entry page.

Intent-fit analysis improves when teams group pages by template and cluster. A service page template behaves differently from an editorial template, and a category template behaves differently from a product template. Comparing these page types directly produces misleading conclusions. Instead, teams compare within template classes and within query intent classes, then identify outliers that indicate mismatched messaging, weak information scent, or UX friction.

A practical intent-fit workflow pairs Search Console query insights with GA4 behavior. If a query set shows strong impressions and weak CTR, the issue likely sits in snippet promise, SERP features, or competition. If CTR is strong but on-page progression is weak, the issue likely sits in intent mismatch, content structure, or UX patterns that interrupt momentum. This is where improving SEO with Google Analytics becomes a disciplined practice rather than a list of tips.
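The triage logic in this workflow can be written down as a small rule so that every analyst applies it the same way. The thresholds below (`ctr_floor`, `next_step_floor`) are placeholder assumptions; in practice they would be set per template and intent class from the site's own baselines.

```python
def triage_query_set(ctr: float, next_step_rate: float,
                     ctr_floor: float = 0.02,
                     next_step_floor: float = 0.15) -> str:
    """Classify where a high-impression query set most likely has a problem.

    ctr comes from Search Console; next_step_rate is the share of organic
    landing sessions that continue to a defined next step in GA4.
    """
    if ctr < ctr_floor:
        # Weak CTR: look at snippet promise, SERP features, or competition.
        return "serp"
    if next_step_rate < next_step_floor:
        # Clicks arrive but stall: intent mismatch, content structure, or UX.
        return "on_page"
    return "healthy"


print(triage_query_set(ctr=0.01, next_step_rate=0.30))  # serp
print(triage_query_set(ctr=0.05, next_step_rate=0.05))  # on_page
print(triage_query_set(ctr=0.05, next_step_rate=0.30))  # healthy
```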

Qualified conversion rate: integrating lead quality

Organic conversions do not equal business value when lead quality varies. Professionals need a quality-adjusted view, especially in B2B or high-consideration funnels. Qualified conversion rate links organic cohorts to downstream CRM stages such as MQL, SQL, opportunity created, and closed-won. It also accounts for the fact that SEO can generate both research-driven leads and purchase-ready leads, and those behave differently across the funnel.

A strong model creates cohorts at the time of acquisition, then tracks stage progression over time. That approach avoids the mistake of attributing late-stage outcomes to the wrong time period. It also supports lag curves that show the expected time from organic acquisition to opportunity creation, which helps set realistic expectations for content programs. Teams can then interpret short-term traffic or lead changes in the context of expected pipeline timing.
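A lag curve for a single acquisition cohort can be computed as in the sketch below. It assumes each lead is a pair of dates (acquisition, opportunity creation or `None`) pulled from the CRM; the function name, the 30/60/90-day checkpoints, and the sample cohort are hypothetical.

```python
from datetime import date
from typing import Dict, List, Optional, Sequence, Tuple

Lead = Tuple[date, Optional[date]]  # (acquired_on, opportunity_created_on)


def lag_curve(leads: List[Lead],
              checkpoints: Sequence[int] = (30, 60, 90)) -> Dict[int, float]:
    """Share of the cohort that reached opportunity stage within N days of
    acquisition. The cohort should be at least max(checkpoints) days old,
    otherwise later checkpoints are understated."""
    if not leads:
        return {days: 0.0 for days in checkpoints}
    lags = [(opp - acquired).days for acquired, opp in leads if opp is not None]
    return {
        days: sum(1 for lag in lags if lag <= days) / len(leads)
        for days in checkpoints
    }


# Hypothetical cohort: four organic leads acquired on the same day.
cohort = [
    (date(2025, 1, 6), date(2025, 1, 26)),   # 20 days to opportunity
    (date(2025, 1, 6), date(2025, 2, 20)),   # 45 days
    (date(2025, 1, 6), date(2025, 3, 27)),   # 80 days
    (date(2025, 1, 6), None),                # no opportunity yet
]

print(lag_curve(cohort))  # {30: 0.25, 60: 0.5, 90: 0.75}
```

Because the denominator is the whole cohort, leads that never convert still count. That keeps the curve honest about how much of an acquisition month can be expected to reach pipeline, and when.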

Quality integration also requires disciplined definitions. If MQL criteria change, the analytics must note the change or the trend line becomes unusable. If territories or routing rules change, lead-to-opportunity rates can shift independently of SEO. A mature SEO analytics program treats these changes as first-class context, not as footnotes, and uses annotations and governance to keep trend interpretation stable.

Revenue and pipeline contribution: credible attribution in practice

Attribution is not incrementality, but professionals still need attribution views to manage channel performance and diagnose funnel contributions. Organic search often plays a mixed role, including first-touch discovery, mid-funnel evaluation, and late-stage validation. A credible approach includes multiple lenses: first-touch for acquisition, last-touch for conversion context, and assisted or path-based views for influence. Teams should present these lenses together, with consistent definitions and an explanation of what each lens can and cannot prove.
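Presenting the lenses side by side is easier when they are computed from the same conversion paths. The sketch below assumes each path is an ordered list of channel names ending at the conversion, and it defines “assisted” as the channel appearing anywhere before the final touch. Both the data shape and that definition are assumptions for this example, not a platform's official attribution model.

```python
from typing import Dict, List


def attribution_lenses(path: List[str], channel: str = "organic_search") -> Dict[str, bool]:
    """Evaluate one conversion path under three simple lenses."""
    return {
        "first_touch": bool(path) and path[0] == channel,
        "last_touch": bool(path) and path[-1] == channel,
        # Assumed definition: the channel appears before the final touch.
        "assisted": channel in path[:-1],
    }


def summarize(paths: List[List[str]], channel: str = "organic_search") -> Dict[str, int]:
    """Count conversions credited to the channel under each lens."""
    totals = {"first_touch": 0, "last_touch": 0, "assisted": 0}
    for path in paths:
        for lens, hit in attribution_lenses(path, channel).items():
            totals[lens] += int(hit)
    return totals


# Hypothetical conversion paths.
paths = [
    ["organic_search", "email", "paid_search"],
    ["direct", "organic_search"],
    ["paid_search", "organic_search", "direct"],
    ["email"],
]

print(summarize(paths))  # {'first_touch': 1, 'last_touch': 1, 'assisted': 2}
```

In this sample a last-touch-only report would credit organic search with one conversion out of four, while the combined view shows it participated in three paths. None of these counts measure incrementality; they only describe where the channel appears.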

A warehouse model improves credibility because it can join CRM outcomes to acquisition cohorts and to content metadata. It can also incorporate offline conversions, which matters when deals close in CRM rather than on the website. For organizations with long sales cycles, pipeline contribution often provides more stable signals than revenue in the short term. Revenue attribution becomes more useful over longer windows, where seasonal patterns and lag can be modeled more effectively.

Teams should also resist the temptation to “optimize for attribution.” If a metric incentivizes pages to capture the last click, it can distort content strategy and reduce overall demand generation. Mature teams use attribution to understand contribution and bottlenecks, not as a leaderboard that drives misaligned behavior. This is a key foundation for a defensible SEO ROI narrative.

Content efficiency and topic cluster ROI

Topic cluster ROI moves beyond page-level reporting and focuses on program-level efficiency. It asks whether a cluster generates incremental demand capture and downstream outcomes relative to the cost to create and maintain it. Costs include writing, design, subject matter involvement, engineering support, and ongoing updates for freshness and accuracy. Returns include not only conversions but also leading indicators like impression share growth in target query classes and improved conversion-weighted visibility.

A robust cluster ROI model accounts for content decay. Many clusters show strong initial growth followed by plateau or decline as competitors update content or as intent shifts. Measuring a “freshness half-life” by cluster helps teams plan refresh cycles and allocate budget to maintenance versus net-new content. It also helps detect when a cluster saturates, meaning additional content adds minimal incremental value and may even cause cannibalization.
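There is no single standard definition of a “freshness half-life.” The sketch below uses one plausible working definition, stated as an assumption: the number of months after a cluster's peak until its clicks first fall to half of the peak or lower. The function name and the sample series are hypothetical.

```python
from typing import Optional, Sequence


def freshness_half_life(monthly_clicks: Sequence[int]) -> Optional[int]:
    """Months from the peak month until clicks first drop to <= 50% of peak.
    Returns None if the series is empty or has not decayed that far yet."""
    if not monthly_clicks:
        return None
    peak_index = max(range(len(monthly_clicks)), key=lambda i: monthly_clicks[i])
    peak = monthly_clicks[peak_index]
    for i in range(peak_index + 1, len(monthly_clicks)):
        if monthly_clicks[i] <= peak / 2:
            return i - peak_index
    return None


# Hypothetical monthly organic clicks for one cluster since launch.
cluster_clicks = [100, 400, 900, 1000, 850, 700, 560, 480, 430]

print(freshness_half_life(cluster_clicks))           # 4
print(freshness_half_life([100, 200, 300, 320]))     # None (still growing)
```

Seasonal clusters would need deseasonalized inputs before this is meaningful, because a seasonal trough can look like decay.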

Cannibalization analysis matters here. Clusters can unintentionally split relevance across multiple pages that target the same theme, which reduces overall performance. Teams should monitor query-to-page dispersion and identify cases where multiple pages compete for the same high-value queries. Cluster ROI should also incorporate an internal linking strategy, because clusters often depend on a strong hub-to-spoke architecture that guides both crawlers and users.

Technical health metrics that correlate with growth

Technical SEO metrics become meaningful when they connect to outcomes and to diagnosed mechanisms. Indexation coverage by template can reveal whether a new template rollout created crawlability or canonical issues. Crawl efficiency from logs can show whether bots waste time on parameters or thin faceted pages. Structured data validity can explain shifts in rich result coverage and CTR. Performance metrics like Core Web Vitals can affect both user behavior and conversions, especially for mobile-heavy segments.

A strong technical health framework splits metrics into prevention and diagnosis. Prevention metrics include automated checks for robots directives, sitemap integrity, canonical tags, and rendering. Diagnosis metrics include template-level indexation and crawl distribution, response code patterns, and internal link distribution proxies. When a regression hits, teams should already have baseline distributions and alerting in place, so they can isolate the change quickly.

Technical health also needs prioritization rules. Not every warning matters, and teams waste time when they chase low-impact fixes. Professionals should evaluate fixes based on affected traffic segments, affected templates, and expected impact on leading indicators like CTR and on-page progression. This is where SEO analytics turns technical work into measurable business protection rather than a backlog of audits.

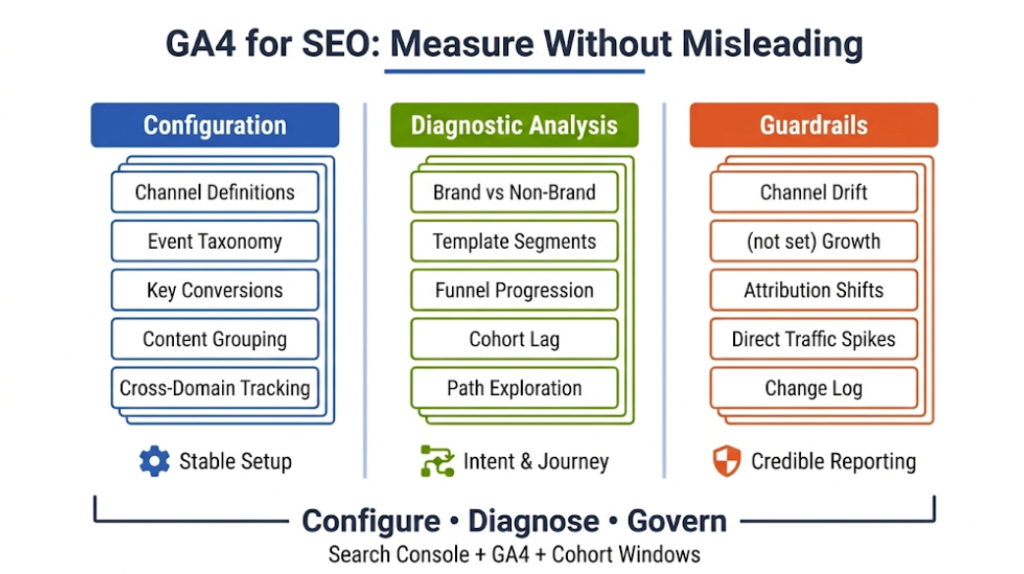

How to measure SEO in Google Analytics (GA4) without fooling anyone

GA4 configuration for SEO analytics

Professionals often ask how to measure SEO in Google Analytics because GA4 remains the primary behavioral and conversion measurement platform. However, SEO analysis in Google Analytics only becomes reliable when configuration, segmentation, and governance are disciplined. Without clear channel definitions, key events, and content grouping, GA4 produces confident but misleading conclusions.

GA4 can support high-quality SEO analysis, but only if teams configure it to avoid scope errors and channel ambiguity. Teams should start with a disciplined event taxonomy that maps to funnel progression, then mark key events that truly represent business value. They should also configure internal traffic filters, referral exclusions, and cross-domain tracking where needed so that acquisition data remains stable.

Content grouping provides another essential capability. GA4 analyses become far more useful when teams can slice landing pages by template, product line, or cluster rather than by raw URL. That grouping can come from GTM, from page metadata, or from a warehouse-enriched dataset. Teams should also document attribution settings and conversion windows and should keep them stable across reporting periods to preserve comparability.

A practical GA4 checklist for SEO measurement includes:

- Stable channel grouping definitions for Organic Search

- Key events aligned to funnel stages, not vanity interactions

- Content groupings for template, cluster, and business line

- Cross-domain and subdomain tracking correctness

- Regular audits for “(not set)” growth and sudden direct traffic shifts

Analyses that actually diagnose SEO performance

GA4 reports should not stop at “organic sessions and conversions.” Professionals need analyses that reveal intent-fit, funnel leakage, and segment-specific bottlenecks. A strong starting point is an organic landing page analysis segmented by brand versus non-brand and by template class. That helps identify whether issues cluster around specific page types, such as service pages that attract research intent but fail to move users forward. It also highlights outlier pages that convert unusually well, which can inform content and UX patterns.

Funnel exploration becomes valuable when it mirrors the real journey. For example, an organic cohort might enter on an informational page, navigate to a comparison page, then reach pricing, then request a demo. Teams should define micro-conversions that represent these steps and measure progression rates between them. Path exploration can then show which content paths produce the highest quality conversions, which informs internal linking and recommended content modules.

Cohort analyses also matter for SEO because organic traffic often includes repeat visits and multi-session journeys. Teams can build cohorts based on first user source and then examine conversion behavior over 30, 60, or 90 days. That approach often reveals that organic acquisition produces high-value users who convert later, which single-session metrics can understate. When teams combine these analyses with Search Console demand capture data, they can identify whether the problem sits in the SERP or on-site.

Common GA4 pitfalls and how to avoid them

GA4 pitfalls tend to create confident but wrong conclusions. Attribution modeling can change outcomes as modeled conversions shift, especially under consent constraints. Session scope and user scope can also confuse analyses when teams mix metrics that do not align. Channel misclassification can inflate direct traffic and undercount organic, which then distorts ROI calculations. Even small configuration changes can break month-to-month comparisons if teams do not annotate and govern them.

Teams should build guardrails into reporting. They should validate that organic landing page totals match expectations, monitor the distribution of source and medium values, and check for sudden shifts in channel composition. They should also keep a change log that records GA4 configuration changes, tag manager changes, and major site releases. This governance supports credible SEO and analytics reporting because it gives stakeholders the context they need to interpret changes correctly.

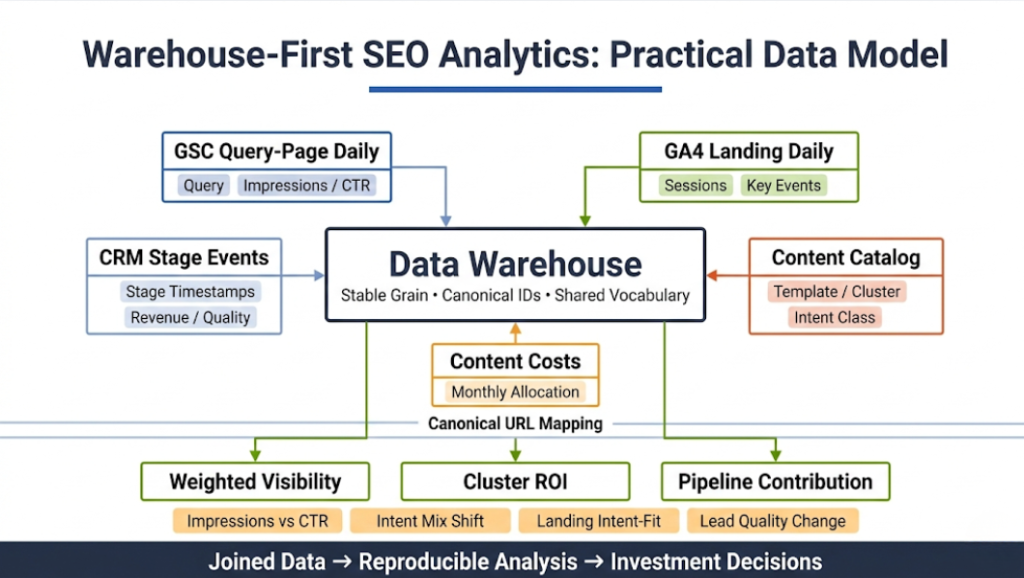

Warehouse-first SEO analytics: a practical data model

Why a warehouse model changes the ceiling

Many teams can get to “good” with native tools, but the ceiling arrives quickly when the questions become cross-system and ROI-focused. A warehouse model allows consistent joins between Search Console, GA4, crawl/log data, and CRM outcomes. It allows stable segmentation with a single content catalog and a single vocabulary. It also supports reproducible analysis, which matters when multiple analysts and stakeholders rely on the outputs.

A warehouse model also makes it possible to compute metrics that native tools cannot. Examples include conversion-weighted visibility by query class, lag-adjusted pipeline contribution by landing page cohort, and cost-adjusted cluster ROI. These outputs often separate a mature SEO analytics program from a basic one, because they move beyond generic advice and give teams structures they can implement.

Recommended tables, grain, and joining keys

A practical model keeps grains consistent and documents what each table represents. Search Console data typically lives at a daily grain by query and page. GA4 landing page data typically lives at a daily grain by landing page, source and medium, and key event. CRM stage events typically live at an event grain per lead or opportunity. Costs might live at a monthly grain by cluster or initiative. A content catalog provides stable IDs and metadata that join across all of them.

A common table set includes:

- gsc_query_page_daily with query, page, date, impressions, clicks, CTR, position

- ga4_landingpage_daily with canonical landing page, date, sessions, engaged sessions, key events, conversions, revenue proxies

- content_catalog with canonical URL, template, cluster, intent class, business line, publish date, last updated date

- crm_stage_events with lead or account IDs, acquisition cohort, stage timestamps, revenue, and quality fields

- costs_content_ops with initiative or cluster cost allocations by month

Joining rules should prioritize canonical URL mapping and stable content IDs. They should also include logic for localization and parameters. Teams should document exceptions, such as when multiple URLs represent materially different content even if canonicals collapse them. This clarity prevents “quiet join loss” where data disappears because a mapping rule fails silently.
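A minimal sketch of a join that surfaces unmatched rows instead of dropping them is shown below. The `canonicalize` rule here (lowercase host, no query string, no trailing slash) is a deliberately simple assumption; a real mapping would add the localization and parameter exceptions described above. The catalog contents and URLs are made up.

```python
from typing import Dict, List, Tuple
from urllib.parse import urlsplit


def canonicalize(url: str) -> str:
    """Assumed rule: lowercase host, drop query string and trailing slash."""
    parts = urlsplit(url)
    path = parts.path.rstrip("/") or "/"
    return f"{parts.netloc.lower()}{path}"


def join_to_catalog(
    rows: List[Dict],
    catalog: Dict[str, Dict],
) -> Tuple[List[Dict], List[Dict]]:
    """Join rows to the content catalog and keep unmatched rows visible."""
    matched, unmatched = [], []
    for row in rows:
        entry = catalog.get(canonicalize(row["page"]))
        if entry is None:
            unmatched.append(row)  # reported, never silently discarded
        else:
            matched.append({**row, **entry})
    return matched, unmatched


# Hypothetical catalog keyed by canonical URL.
catalog = {"example.com/pricing": {"content_id": "c-001", "cluster": "pricing"}}
gsc_rows = [
    {"page": "https://Example.com/pricing/?utm_source=x", "clicks": 12},
    {"page": "https://example.com/de/preise", "clicks": 5},
]
matched, unmatched = join_to_catalog(gsc_rows, catalog)
lost_clicks = sum(row["clicks"] for row in unmatched)
total_clicks = sum(row["clicks"] for row in gsc_rows)
print(f"join loss: {lost_clicks}/{total_clicks} clicks unmatched")
```

Tracking the unmatched share over time turns quiet join loss into a visible data quality metric.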

Outputs that matter for decision-making

Once the model exists, teams can build outputs that directly support decisions. Conversion-weighted visibility by query class can tell whether the program grows in high-intent demand or only in low-intent reach. Cluster ROI outputs can tell which topic programs deserve more investment and which need refresh or consolidation. Pipeline contribution outputs can show where organic traffic produces the highest quality outcomes and the expected time-to-value for new content. These outputs also make seo and analytics reporting more actionable because the report can recommend changes based on stable models.
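The article does not give a formula for conversion-weighted visibility, so the sketch below uses one possible definition as an assumption: impressions in each query class multiplied by a class-level conversion weight. The class names, weights, and impression counts are illustrative only.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def conversion_weighted_visibility(
    rows: Iterable[Tuple[str, int]],
    class_weights: Dict[str, float],
) -> Dict[str, float]:
    """Sum impressions per query class, scaled by an assumed conversion weight."""
    impressions_by_class = defaultdict(int)
    for query_class, impressions in rows:
        impressions_by_class[query_class] += impressions
    return {
        query_class: impressions * class_weights.get(query_class, 0.0)
        for query_class, impressions in impressions_by_class.items()
    }


# Made-up data: (query class, impressions) per query.
rows = [("commercial", 1000), ("informational", 10000), ("commercial", 500)]
# Assumed weights, for example historical conversion rate per class.
weights = {"commercial": 0.04, "informational": 0.002}
visibility = conversion_weighted_visibility(rows, weights)
print(visibility)
```

Under these assumed weights, the commercial class carries three times the weighted visibility of the informational class despite far fewer impressions, which is the distinction between high-intent demand and low-intent reach.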

Teams should also build variance explanations into the outputs. For example, if non-brand clicks drop, the model should show whether impressions fell, CTR fell, or query mix shifted. If conversions drop, the model should show whether landing page intent-fit declined, whether key events fell, or whether lead quality changed. This is how an analytics seo report becomes a decision memo rather than a static chart pack.
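Because clicks equal impressions multiplied by CTR, a click change can be split into an impression effect and a CTR effect. The sketch below applies that identity to one segment with made-up numbers; applying it per query class and comparing the sum to the total is one way to expose a query mix shift, which this single-segment example does not cover.

```python
from typing import Dict


def decompose_click_change(
    impressions_before: int,
    clicks_before: int,
    impressions_after: int,
    clicks_after: int,
) -> Dict[str, float]:
    """Split a click change into impression and CTR effects.

    Uses the identity:
    clicks_after - clicks_before
        = (impr_after - impr_before) * ctr_before
        + impr_after * (ctr_after - ctr_before)
    """
    ctr_before = clicks_before / impressions_before
    ctr_after = clicks_after / impressions_after
    impression_effect = (impressions_after - impressions_before) * ctr_before
    ctr_effect = impressions_after * (ctr_after - ctr_before)
    return {
        "impression_effect": impression_effect,
        "ctr_effect": ctr_effect,
        "total_change": clicks_after - clicks_before,
    }


# Made-up non-brand segment: impressions and CTR both fell.
result = decompose_click_change(10_000, 500, 8_000, 320)
print(result)
```

In this example the segment lost 180 clicks, of which 100 trace to lower impressions and 80 to lower CTR.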

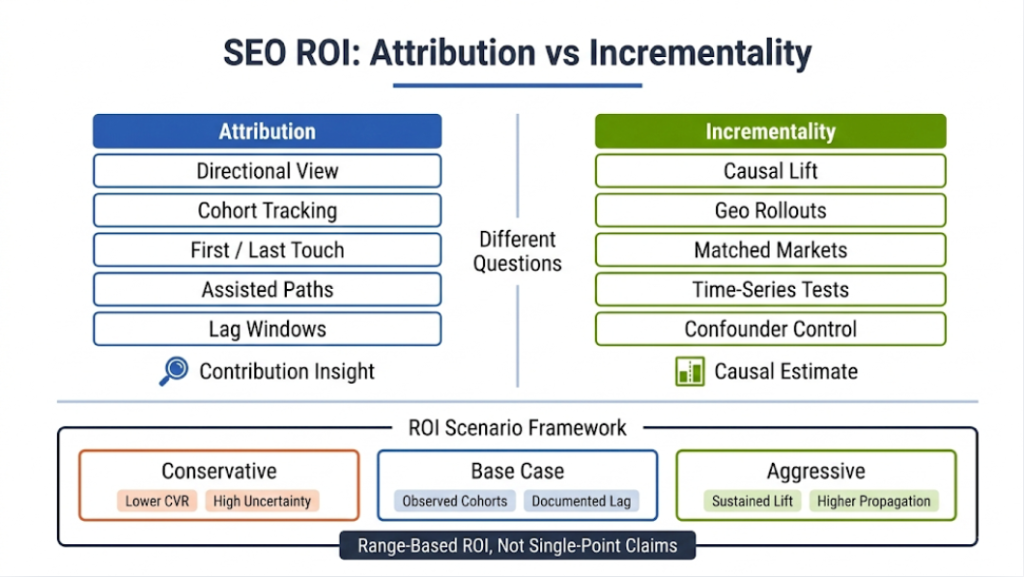

SEO ROI that finance will sign off on: attribution versus incrementality

Why SEO ROI remains hard and how to make it credible

Professionals talk about SEO ROI constantly, but many ROI claims fail scrutiny because they treat attribution as causality. SEO affects demand capture over time, often through multi-touch journeys, and it interacts with brand, paid media, and product changes. Privacy constraints and identity limitations further reduce observability. A credible approach acknowledges these limits, uses conservative assumptions, and presents ranges rather than single-point certainty.

Credible ROI starts with clean definitions of cost and return. Costs should include content, creative, engineering, tooling, and maintenance, not only writing hours. Returns should focus on quality-adjusted outcomes such as qualified pipeline or revenue, not only form fills. ROI should also include lag assumptions that reflect the business cycle, because SEO value often accrues over months. This is where warehouse cohorting becomes essential.

Practical methods to estimate ROI without overclaiming

Attribution provides a directional view, while incrementality attempts to estimate causal lift. Most organizations can start with attribution, then layer incrementality methods for major initiatives. A conservative attribution approach might use first-touch organic cohorts and track the pipeline created over a defined window, while also tracking assisted contribution for context. Incrementality methods might include geo-based rollouts, matched market comparisons, or time-series interruption analysis around major changes.
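For geo-based rollouts or matched market comparisons, one common estimator is a difference-in-differences. The article does not prescribe this technique, so treat the sketch below as one option under the assumption that the control markets would have followed the same trend as the treated markets. The weekly lead counts are made up.

```python
from statistics import mean
from typing import Sequence


def diff_in_diff(
    treated_pre: Sequence[float],
    treated_post: Sequence[float],
    control_pre: Sequence[float],
    control_post: Sequence[float],
) -> float:
    """Estimated lift: change in treated markets minus change in control markets."""
    treated_change = mean(treated_post) - mean(treated_pre)
    control_change = mean(control_post) - mean(control_pre)
    return treated_change - control_change


# Made-up weekly qualified leads before and after a content rollout.
lift = diff_in_diff(
    treated_pre=[100, 110, 90],
    treated_post=[130, 140, 120],
    control_pre=[80, 85, 75],
    control_post=[88, 92, 90],
)
print(f"estimated incremental leads per week: {lift}")
```

The treated markets grew by 30 leads per week and the control markets by 10, so the estimate credits 20 to the rollout. With only a few periods the estimate is noisy, which is one reason to report it as a range.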

A practical ROI framework can include three scenarios:

- Conservative: assumes lower conversion rates and higher attribution uncertainty

- Base case: uses observed cohort conversion behavior with documented lag

- Aggressive: assumes sustained lift and higher conversion propagation

Teams should communicate these scenarios clearly and link them to measurable inputs. They should also specify confidence drivers such as sample size, stability of lead quality, and the presence of confounding changes like pricing updates or product launches. This transparency increases trust and makes ROI useful for budget decisions.
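The three scenarios can be expressed as explicit inputs so that stakeholders can see which assumption drives each result. Every number below is made up for illustration, and the field names are hypothetical; a real model would draw conversion behavior and lag from observed cohorts.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Scenario:
    name: str
    qualified_leads: float
    lead_to_revenue_rate: float  # share of qualified leads that close
    avg_deal_value: float
    attribution_share: float  # share of revenue credited to organic


def scenario_roi(scenario: Scenario, total_cost: float) -> float:
    """ROI as (attributed revenue - cost) / cost."""
    attributed_revenue = (
        scenario.qualified_leads
        * scenario.lead_to_revenue_rate
        * scenario.avg_deal_value
        * scenario.attribution_share
    )
    return (attributed_revenue - total_cost) / total_cost


# Assumed cost: content, creative, engineering, tooling, and maintenance.
TOTAL_COST = 100_000.0
scenarios: List[Scenario] = [
    Scenario("conservative", 200, 0.10, 20_000, 0.5),
    Scenario("base", 200, 0.15, 20_000, 0.6),
    Scenario("aggressive", 200, 0.20, 20_000, 0.8),
]
roi_by_scenario: Dict[str, float] = {
    s.name: scenario_roi(s, TOTAL_COST) for s in scenarios
}
for name, roi in roi_by_scenario.items():
    print(f"{name}: ROI {roi:.1f}x")
```

Presenting the three results side by side, with the inputs visible, communicates ROI as a range rather than a single-point claim.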

Monitoring and anomaly detection: SEO analytics as operations

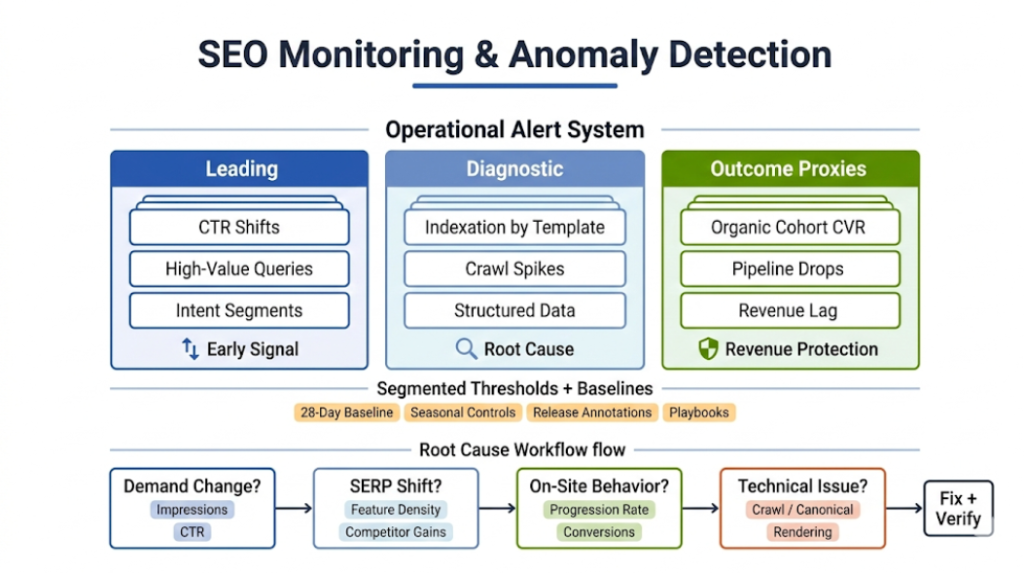

Alert taxonomy and why it prevents revenue surprises

High-performing teams treat SEO as an operational system with monitoring. They define alerts for leading indicators, diagnostics, and outcome proxies so that regressions do not linger unnoticed. Alerts should cover indexation changes by template, sudden CTR shifts in high-value query groups, crawl spikes into parameter spaces, and conversion drops in organic cohorts. These alerts work best when they tie to specific segments and templates rather than sitewide totals.

Alerting also needs thresholds and playbooks. A “drop” should not mean a normal seasonal dip, and a “spike” should not mean an expected campaign effect. Teams can use baselines such as trailing 28-day averages with seasonal controls where appropriate. They can also annotate known changes like releases, migrations, or content launches to reduce false positives. This discipline makes seo analytics faster and more reliable.
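A trailing 28-day baseline can be turned into a simple alert rule. The sketch below uses a z-score against the trailing window, which is an assumed thresholding choice rather than something the article specifies, and it does not include seasonal controls or release annotations. The daily values are made up.

```python
from statistics import mean, stdev
from typing import Sequence


def is_anomaly(
    history: Sequence[float],
    today: float,
    window: int = 28,
    z_threshold: float = 3.0,  # assumed threshold; tune per segment
) -> bool:
    """Flag today's value if it sits far outside the trailing-window baseline."""
    baseline = list(history[-window:])
    if len(baseline) < window:
        raise ValueError(f"need at least {window} days of history")
    center = mean(baseline)
    spread = stdev(baseline)
    if spread == 0:
        # A perfectly flat baseline: any change at all is unusual.
        return today != center
    return abs(today - center) / spread > z_threshold


# Made-up daily organic conversions for one template segment.
history = [100, 102, 98, 101] * 7  # 28 days
drop_flagged = is_anomaly(history, today=60)
normal_flagged = is_anomaly(history, today=101)
print(drop_flagged, normal_flagged)
```

Running a check like this per segment and template, rather than on sitewide totals, keeps alerts tied to something a team can act on.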

Root cause workflow: from symptom to fix

When an alert triggers, teams need a repeatable root cause workflow. The workflow should start by isolating whether the change occurred in demand, in SERP presentation, on-site behavior, or tracking. Search Console can reveal whether impressions fell or CTR fell, and it can highlight query groups that drove the change. SERP data can reveal whether features shifted or competitors gained enhanced results. GA4 can reveal whether landing page progression or conversion behavior changed.

A strong workflow also checks technical diagnostics quickly. Logs can show whether crawlers reduced frequency on key templates or increased crawling on non-value URLs. Crawls can reveal canonical, robots, or rendering issues introduced by releases. Structured data testing can confirm whether rich result eligibility broke. Once the team isolates the likely cause, the playbook should specify the fix path and the verification metrics to confirm recovery.

SEO and analytics reporting: from dashboards to decision memos

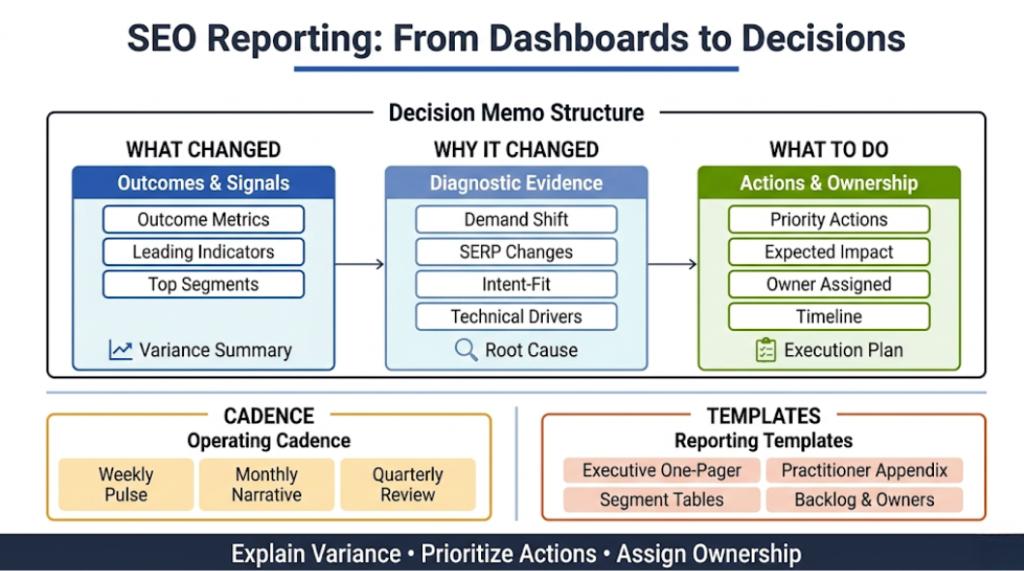

Reporting principles that create action

Professionals often ask for seo and analytics reporting, but the report itself matters less than the decisions it drives. A strong report explains variance, prioritizes actions, and assigns ownership. It does not simply restate KPIs. It also uses consistent segmentation, consistent definitions, and a clear narrative structure. This makes stakeholders trust the report and makes teams move faster.

A reliable reporting narrative includes three layers. First, it summarizes outcomes and leading indicators with the segments that changed the most. Second, it explains why those changes occurred using diagnostic evidence. Third, it recommends actions, with expected impact, owners, and timelines. This structure turns an analytics seo report into an operating rhythm that aligns stakeholders.

Cadences and templates that scale across teams

A practical cadence includes a weekly diagnostic pulse, a monthly performance narrative, and a quarterly investment review. The weekly pulse focuses on anomalies, leading indicators, and near-term fixes. The monthly narrative focuses on segment trends, initiative performance, and content and technical learnings. The quarterly review focuses on ROI, budget allocation, and strategic shifts, including topic cluster expansion or consolidation.

Two templates often work well. An executive one-pager should include outcomes, drivers, risks, and decisions needed. A practitioner appendix should include segmented tables, query group changes, experiment results, and a backlog with owners. These templates also allow agencies and in-house teams to collaborate without drowning stakeholders in raw data. They keep the report human, direct, and actionable.

SEO analytics tools: stack design and selection criteria

What to expect from seo analytics tools

Professionals evaluate seo analytics tools based on coverage, data access, and workflow integration. Search Console and GA4 remain foundational, but most teams layer crawlers, log analysis, rank and SERP feature tracking, and BI tooling on top. The selection should align with the truth table defined earlier, so that each tool supports specific questions. Tools should not overlap unnecessarily, and teams should prioritize exportability and governance.

A strong stack also supports automation. Automated extraction and transformation reduce manual reporting and free analysts to interpret and recommend. BI tools can standardize dashboards and templates, while a warehouse can unify joins and definitions. This architecture enables decision-centric reporting and supports ROI modeling that native tools struggle to provide.

When to centralize in BI and a warehouse

Centralization becomes valuable when questions cross systems and when stakeholders require consistent definitions. If a team needs pipeline contribution by query class, it needs a warehouse join between Search Console, GA4, and CRM, plus a content catalog. If a team needs cost-adjusted cluster ROI, it needs cost data and content metadata. If a team needs anomaly detection across search, site, and CRM outcomes, it needs unified baselines and alerting logic.

Centralization also reduces dashboard sprawl. Instead of building dozens of tool-specific reports, teams can build a small set of governed data products: demand capture, intent-fit, pipeline contribution, cluster ROI, and technical observability. These products become the backbone of seo analytics operations and reduce the cognitive load on stakeholders.

How to improve SEO with Google Analytics: analytics-to-action playbooks

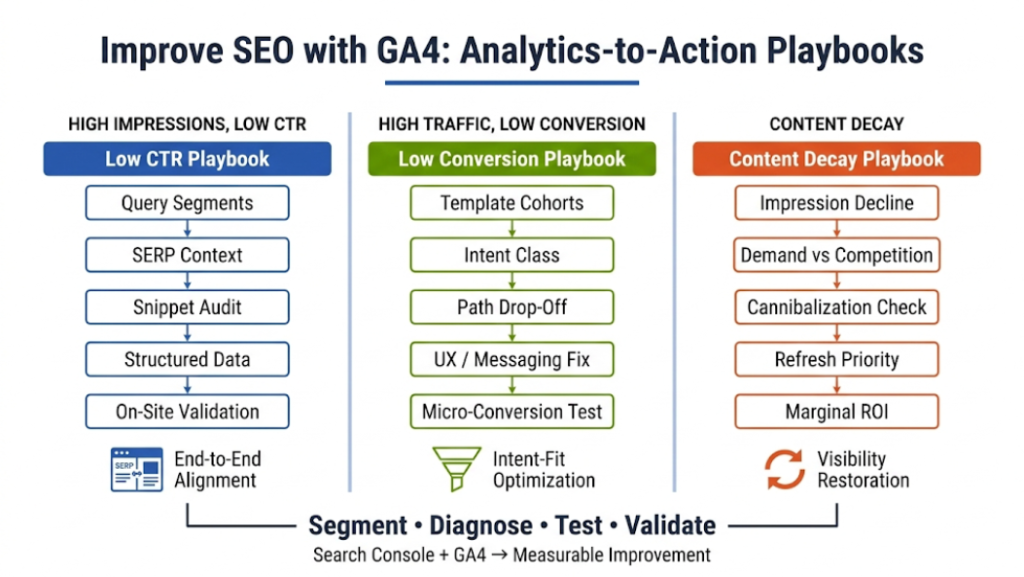

Playbook 1: High impressions, low CTR, then fix snippet and SERP presence

When Search Console shows high impressions but weak clicks, GA4 alone cannot solve the problem. Teams should first segment the query set by intent and SERP context and identify where CTR underperforms relative to historical baselines. Then they should audit how well the title and description match the snippet's promise, and check that the structured data supporting rich results is valid. These audits should lead to on-page improvements that directly influence SERP outcomes. Teams should also review competitor snippets to understand where the market sets expectations.

Teams should then validate on-site alignment, because CTR improvements can backfire if the page does not satisfy the query. GA4 can reveal whether users bounce quickly, fail to progress, or do not complete micro-conversions after landing. This combined approach creates a disciplined method for how to improve seo with Google Analytics without pretending GA4 can see the SERP. It turns CTR work into an end-to-end experience improvement.
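The first step of this playbook, finding query groups whose CTR underperforms its historical baseline, can be sketched as follows. The group names, the minimum impression floor, and the 80 percent ratio are illustrative assumptions.

```python
from typing import Dict, List, Tuple


def ctr_underperformers(
    groups: List[Dict],
    min_impressions: int = 1000,  # assumed floor to skip noisy, low-volume groups
    max_ratio: float = 0.8,  # assumed: flag CTR below 80% of baseline
) -> List[Tuple[str, float]]:
    """Return (group name, CTR-to-baseline ratio) pairs, worst first."""
    flagged = []
    for group in groups:
        if group["impressions"] < min_impressions or group["baseline_ctr"] <= 0:
            continue
        ctr = group["clicks"] / group["impressions"]
        ratio = ctr / group["baseline_ctr"]
        if ratio < max_ratio:
            flagged.append((group["name"], ratio))
    return sorted(flagged, key=lambda item: item[1])


# Made-up query groups with historical baseline CTRs.
query_groups = [
    {"name": "pricing", "impressions": 5000, "clicks": 100, "baseline_ctr": 0.04},
    {"name": "how-to", "impressions": 8000, "clicks": 400, "baseline_ctr": 0.05},
    {"name": "long-tail", "impressions": 200, "clicks": 1, "baseline_ctr": 0.05},
]
underperformers = ctr_underperformers(query_groups)
print(underperformers)
```

Only the pricing group is flagged here: its CTR is half of its baseline, while the long-tail group is skipped because its volume is too low to judge.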

Playbook 2: High traffic, low conversion, then fix intent-fit and funnel progression

If CTR looks healthy and traffic arrives, conversion issues usually sit on-site. Teams should segment by landing page template and intent class and look for pages with strong engagement but weak next-step rates. They should analyze paths to see where users drop off, then map those drop-offs to content gaps, UX friction, or misaligned calls to action. They should also examine whether the landing page attracts research intent while the conversion offer targets purchase intent.

Teams can then test changes systematically. They can adjust information architecture, strengthen internal linking to evaluative content, improve page layout, and align messaging to intent. They should measure results with micro-conversions and cohort conversion behavior over time, not only immediate form fills. This approach ties Google Analytics seo analysis to concrete improvements that affect outcomes.

Playbook 3: Content decay, then refresh based on marginal value

Content decay analysis identifies pages or clusters whose impressions and clicks decline over time. Teams should separate decay caused by demand shifts from decay caused by competitive updates or outdated content. They can use Search Console to identify query groups that lost impressions or clicks, then use SERP review and content audits to identify why. They should also consider whether cannibalization emerged due to new pages that split relevance.

Refresh prioritization should focus on marginal value. Teams should estimate which updates likely restore high-intent demand capture and which updates only add incremental informational reach. GA4 can help identify whether decayed pages still drive meaningful progression when traffic arrives, which indicates that the content still converts and needs visibility restoration. This method makes refresh work defensible and aligned to ROI.
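One way to make marginal value concrete is a simple score per page: the clicks a refresh might recover, multiplied by the page's observed next-step rate and an assumed value per next step, divided by effort. This scoring formula is an illustrative assumption, not a method from the article, and all inputs below are made up.

```python
from typing import Dict, List


def refresh_priority(pages: List[Dict]) -> List[Dict]:
    """Rank decayed pages by estimated recoverable value per hour of effort."""
    scored = []
    for page in pages:
        # Assumes a refresh could at best restore the page's previous peak.
        recoverable_clicks = max(page["peak_clicks"] - page["current_clicks"], 0)
        expected_value = (
            recoverable_clicks * page["next_step_rate"] * page["value_per_next_step"]
        )
        effort = page["effort_hours"]
        score = expected_value / effort if effort > 0 else 0.0
        scored.append({**page, "score": score})
    return sorted(scored, key=lambda page: page["score"], reverse=True)


# Made-up decayed pages: one high-intent, one high-volume informational.
decayed_pages = [
    {"url": "/guide-b", "peak_clicks": 5000, "current_clicks": 2000,
     "next_step_rate": 0.002, "value_per_next_step": 200, "effort_hours": 8},
    {"url": "/guide-a", "peak_clicks": 1000, "current_clicks": 400,
     "next_step_rate": 0.05, "value_per_next_step": 200, "effort_hours": 10},
]
ranked = refresh_priority(decayed_pages)
for page in ranked:
    print(page["url"], round(page["score"], 1))
```

In this example the lower-traffic page ranks first because it still converts when traffic arrives, which matches the case for restoring visibility described above.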

Where creative changes the numbers: turning insights into execution

Measuring creative and messaging effects with SEO analytics

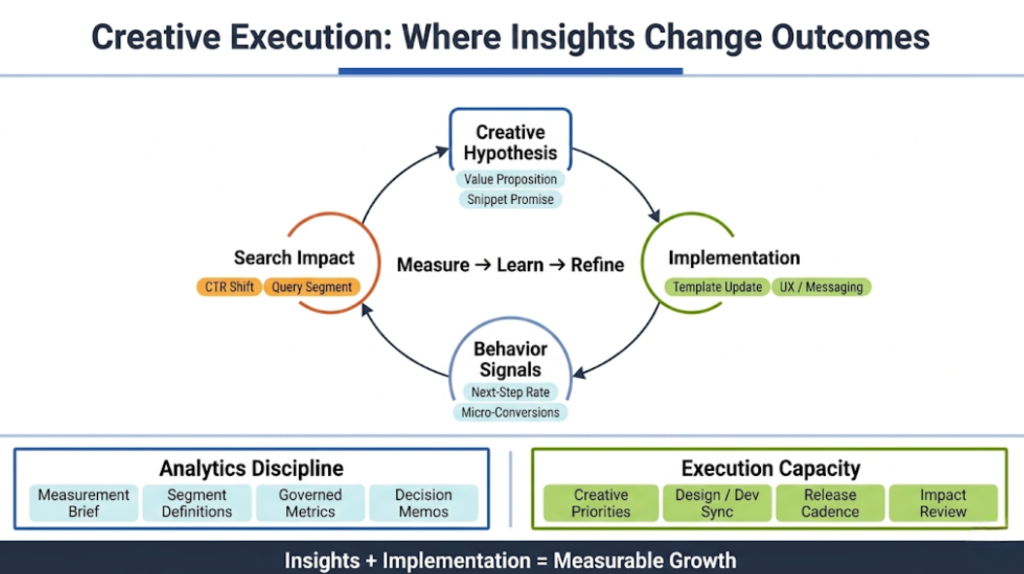

Creative changes influence SEO outcomes more than many teams admit. Messaging affects CTR through snippet promise and brand resonance. Visual hierarchy and information scent affect progression rates and conversion behavior. Content structure affects both user comprehension and eligibility for certain rich results. These effects can be measured when teams tie creative hypotheses to specific segments and metrics.

A practical method starts with a creative hypothesis such as “clarifying the primary value proposition in the first screen will increase next-step rate for non-brand commercial traffic.” The team then implements the change on a defined set of pages or templates and measures micro-conversions, progression rates, and cohort conversion outcomes. It also monitors Search Console CTR changes for relevant query groups, because snippet alignment often improves when on-page messaging becomes clearer. This loop connects creative work to measurable outcomes without reducing creative to generic A/B testing.

A light agency operating model that avoids over-promotion

When a creative agency supports SEO analytics execution, the value often comes from operational cadence. The team can turn analytics insights into prioritized creative and UX tasks, implement changes with design and development coordination, and then measure impact using governed definitions. This partnership works best when it respects governance, maintains a shared measurement brief, and uses decision-centric reporting that aligns stakeholders.

The important point is that execution capacity and measurement discipline must move together. Analytics without implementation becomes commentary. Implementation without measurement becomes guesswork. A well-run operating model ties both sides into the same rhythm, so each release becomes a learning opportunity that improves future decisions.

Frequently Asked Questions

1. How does SEO analytics differ from traditional digital analytics?

Traditional digital analytics focuses on traffic, sessions, and conversions at the channel level. SEO analytics integrates query data, SERP context, indexation, crawl behavior, and CRM outcomes into a unified decision system. It emphasizes intent segmentation, demand capture efficiency, and revenue contribution rather than surface-level traffic growth.

2. How should SEO analytics adapt to zero-click search and AI answers?

Teams should expand measurement beyond clicks and track visibility, branded search lift, assisted conversions, and cohort behavior. Query-level impression trends combined with downstream engagement help reveal whether AI surfaces influence demand indirectly. Attribution alone is not sufficient in these environments.

3. What is the right balance between automation and analyst judgment?

Automation should handle data collection, transformation, and alerting. Analysts should focus on segmentation, hypothesis testing, and strategic interpretation. Dashboards surface patterns, but expert judgment explains variance and defines action.

4. How can organizations align SEO analytics with executive reporting?

Executives need business outcomes, not traffic charts. Reporting should connect organic performance to pipeline, revenue, and investment decisions, supported by transparent assumptions. Presenting SEO ROI as a range with documented inputs improves credibility.

5. How often should an SEO analytics framework be reviewed?

Core definitions and segmentation should be reviewed at least twice per year, or after major structural changes such as migrations or CRM updates. Monthly validation of tracking and channel definitions prevents silent data drift.

6. How should global or multi-language sites approach SEO analytics?

Segment by market and language while maintaining centralized governance. Conversion benchmarks and intent differ across regions, so aggregation can hide insight. Localization requires careful canonical mapping and regional performance analysis.

7. What role does predictive modeling play in SEO analytics?

Predictive modeling supports forecasting and prioritization by estimating potential demand capture and conversion lift. Forecasts should include confidence ranges and sensitivity analysis rather than fixed projections.

8. How can SEO analytics support product-led growth?

Organic cohorts should be tracked through activation, feature adoption, and retention milestones. Connecting acquisition data to product analytics reveals whether organic traffic drives durable growth rather than short-term conversions.

9. How should technical SEO fixes be prioritized?

Prioritize issues based on affected traffic segments, commercial intent, and expected revenue impact. Combine impact estimation with implementation effort to guide resource allocation objectively.

10. Can SEO analytics measure brand influence?

Brand influence can be approximated through growth in branded queries, returning users, and assisted conversions. Cohort behavior often reveals long-term brand impact even when direct attribution remains limited.

11. How should SEO analytics integrate with paid search?

Analyze query overlap and marginal return across channels. Integrated reporting prevents internal competition and supports more efficient allocation of search budgets.

12. What emerging metrics should professionals watch?

Professionals should monitor AI surface visibility, conversational query patterns, and cohort-based incremental lift. As privacy constraints increase, modeled and cohort-based measurement will become more important than deterministic tracking.

To Conclude: the maturity checklist and the next 90 days

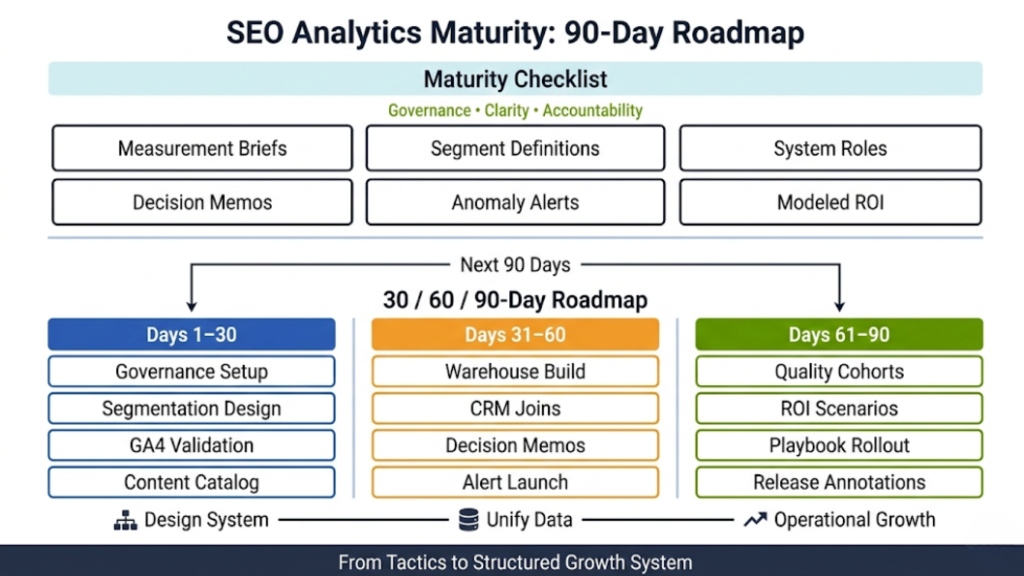

A mature SEO analytics program operates with governance, clarity, and accountability. It uses documented measurement briefs, clear segmentation and metric definitions, and defined system roles across Search Console, GA4, crawl data, and CRM. Reporting centers on decision memos, not dashboards, and explains variance with evidence. It monitors anomalies with structured alerting and communicates SEO ROI as modeled ranges that account for lag, lead quality, attribution, and incrementality.

30/60/90-Day Plan

- Days 1–30: Establish governance and measurement design. Create measurement briefs, define segmentation and metrics, validate GA4 organic tracking, assign system roles, and build a structured content catalog.

- Days 31–60: Build the warehouse model and decision reporting. Create unified tables, connect organic data to CRM stages, launch decision memos, and implement anomaly alerts.

- Days 61–90: Implement ROI modeling and scalable playbooks. Deploy quality-adjusted cohorts, build ROI scenarios, apply incrementality when needed, operationalize CTR and intent-fit playbooks, and refine monitoring with release annotations.

By day 90, SEO analytics functions as a structured growth system rather than a collection of tactics.

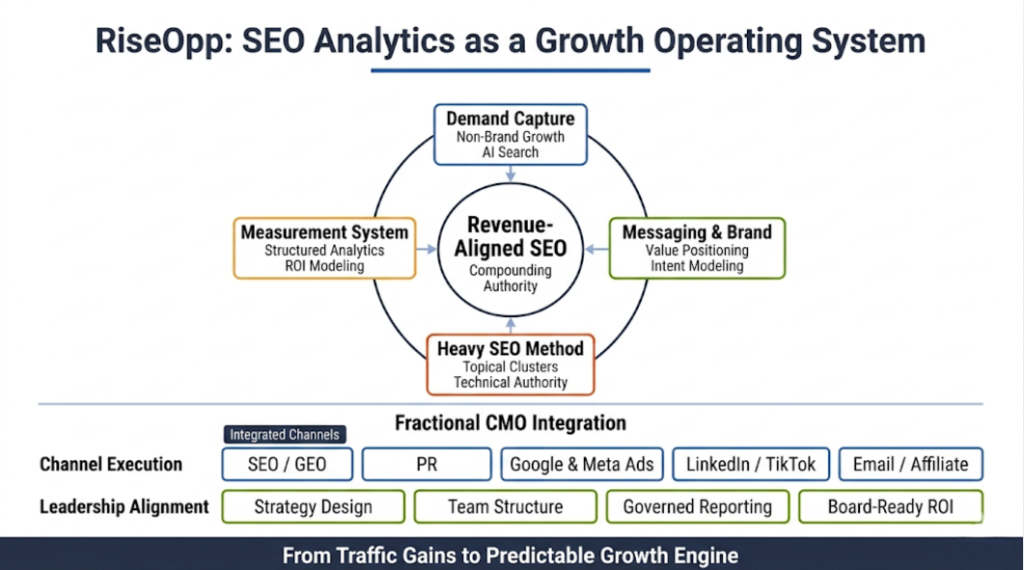

About RiseOpp

At RiseOpp, we treat seo analytics as the operating system behind growth, not as a reporting layer that sits on top of campaigns. As a leading Fractional CMO and SEO services company, we work with both B2B and B2C organizations to design and execute marketing strategies that perform in an AI-shaped search environment. Our perspective combines executive-level strategy with hands-on channel execution, which allows us to connect demand capture, messaging, and revenue outcomes in a single framework.

Our proprietary Heavy SEO methodology focuses on building durable search authority over time and ranking for tens of thousands of strategically aligned keywords. That process goes beyond content production. It integrates technical architecture, topical clustering, SERP real estate strategy, intent modeling, and rigorous measurement. We use structured seo analytics frameworks to validate what works, identify what needs to evolve, and prioritize investments that compound rather than spike.

Because RiseOpp operates as a Fractional CMO partner, we connect SEO performance directly to broader marketing and business strategy. That includes branding and messaging refinement, marketing team hiring and structuring, and execution across SEO, GEO, PR, Google Ads, Meta Ads, LinkedIn Ads, TikTok Ads, email marketing, and affiliate marketing. We align every channel with measurable outcomes and build reporting systems that leadership can trust, including defensible SEO ROI narratives that stand up in executive and board-level discussions.

If your organization needs more than incremental traffic gains and instead requires a structured, revenue-aligned SEO analytics system, we would welcome the conversation. Reach out to RiseOpp to discuss how our Fractional CMO model and Heavy SEO methodology can help you build scalable demand capture and turn organic search into a predictable growth engine.
